DeepSeek released an optical character recognition (OCR) model last week that extracts text from images and converts it into machine-readable form. According to the paper and early reviews, the model performs on par with top systems on key OCR benchmarks. DeepSeek, however, presents the model primarily as a test bed for a different approach to storing and retrieving information inside AI systems.

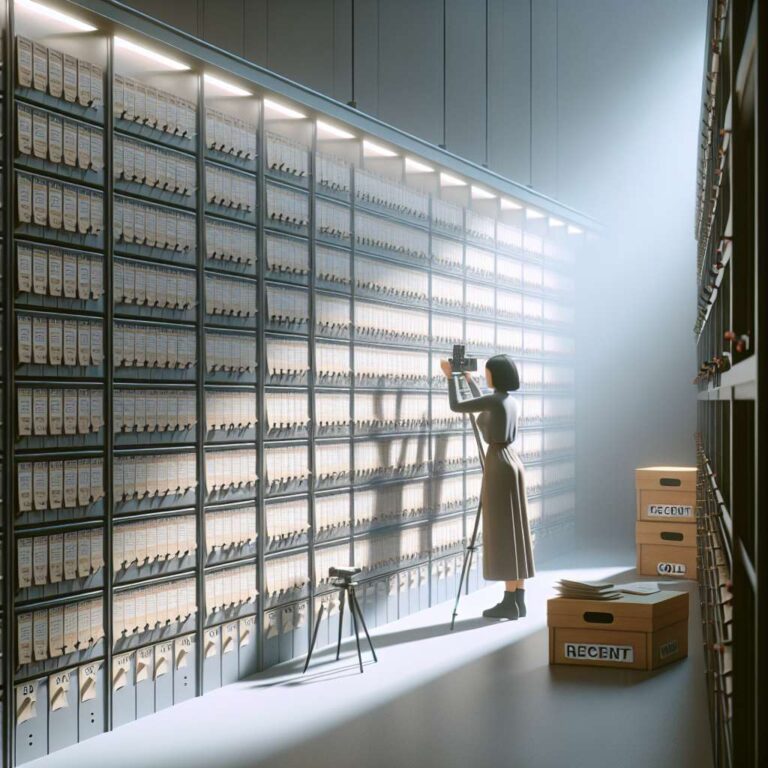

The paper’s central innovation is replacing large sets of text tokens with image-based tokens and a tiered compression scheme. Instead of representing text as thousands of discrete tokens, the system packs written information into image form, much like photographing the pages of a book, and stores older or less critical content in progressively blurrier representations. The company argues that this lets models retain nearly the same information using far fewer tokens, which could cut the computing resources needed to run long conversations and reduce the carbon footprint of AI memory. The paper frames the method as a possible remedy for so-called context rot, in which long interactions cause models to forget or muddle earlier information.
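To make the tiered idea concrete, here is a minimal Python sketch of how a token budget might shrink as content ages. It is not DeepSeek's implementation: the tier boundaries, the per-page token counts, and the names `TIERS`, `TEXT_TOKENS_PER_PAGE`, `vision_tokens_for_age`, and `context_cost` are all hypothetical, chosen only to illustrate the arithmetic.

```python
# Hypothetical tiers: (maximum age in conversation turns, image tokens per page).
# These numbers are illustrative assumptions, not values from the paper.
TIERS = [(2, 400), (10, 100), (float("inf"), 25)]

# Assumed cost of keeping one page as ordinary text tokens.
TEXT_TOKENS_PER_PAGE = 1000


def vision_tokens_for_age(age: int) -> int:
    """Return the image-token budget for a page that is `age` turns old."""
    if age < 0:
        raise ValueError("age must be non-negative")
    for max_age, tokens in TIERS:
        if age <= max_age:
            return tokens
    raise AssertionError("unreachable: the last tier has no upper bound")


def context_cost(ages: list[int]) -> tuple[int, int]:
    """Compare storing every page as text with tiered image storage.

    Returns (text_token_total, image_token_total).
    """
    text_total = TEXT_TOKENS_PER_PAGE * len(ages)
    image_total = sum(vision_tokens_for_age(age) for age in ages)
    return text_total, image_total


if __name__ == "__main__":
    # Five pages: two recent, one mid-aged, two old.
    text_total, image_total = context_cost([0, 1, 5, 20, 50])
    print(text_total, image_total)  # 5000 950
```

Under these made-up numbers, recent pages keep a sharp, expensive representation while old pages fall back to a coarse one, so the total grows much more slowly than it would with text tokens alone. How much information actually survives at each tier is the empirical question the paper sets out to explore.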

Researchers have noted the approach’s promise while cautioning that it is an early exploration. Andrej Karpathy praised the paper on X, suggesting that images may be better than text as inputs for large language models and criticizing text tokens as wasteful. Manling Li of Northwestern University called the work a new framework for addressing memory challenges, and Zihan Wang highlighted its potential to help continuous conversations remember more effectively.

DeepSeek also reports that the system can generate more than 200,000 pages of training data a day on a single GPU. Based in Hangzhou, the company surprised the industry with DeepSeek-R1 earlier this year, and the new paper continues its push into low-resource research directions. The authors acknowledge that more work is needed to make memory recall more dynamic and importance-aware.