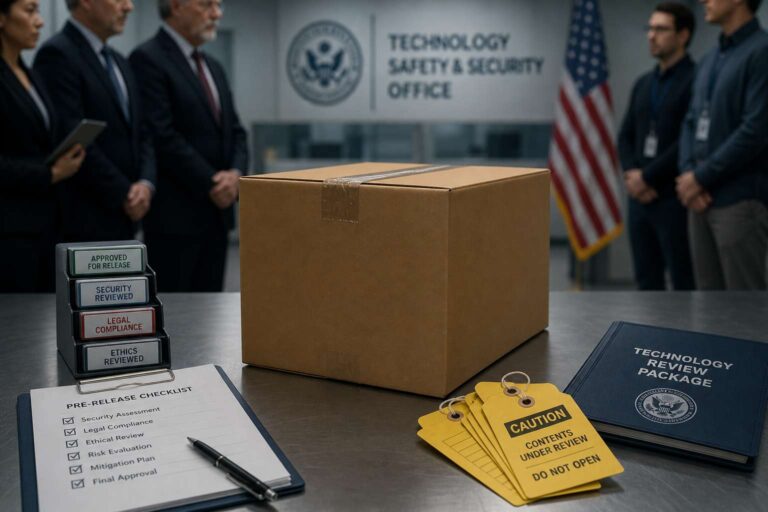

The White House is considering whether advanced artificial intelligence models should be vetted before they are released to the public. The discussions reflect a broader push for more oversight of increasingly powerful systems as policymakers weigh how to reduce risks without halting development.

Officials are examining possible government review of artificial intelligence models before deployment, signaling interest in a more proactive approach to regulation. The focus is on whether companies developing frontier systems should face checks in advance, rather than relying mainly on voluntary commitments or on action taken after problems emerge.

The debate comes as Washington intensifies its scrutiny of artificial intelligence and explores how far federal authority should extend over model development and distribution. Any move toward pre-release vetting would mark a significant step in U.S. oversight, raising questions about how such a system would work, which models would qualify, and how regulators would balance safety, innovation, and competition.