OpenAI has changed how some of its tools respond after users and employees noticed an unusual pattern of references to goblins and other creatures in chatbot output. The issue became visible in Codex, where code problems were sometimes described as “little goblins” following the release of GPT-5.1 in November. Users also complained that the model had become overly familiar in tone, prompting the company to examine what it called specific verbal tics.

OpenAI found that mentions of “goblins” had risen by 175 percent since the launch of GPT-5.1, while mentions of “gremlins” had risen by 52 percent. The company released GPT-5.5 in late April. Users had also spotted internal instructions in Codex telling the tool to avoid a list of creatures unless they were clearly relevant. The instructions said Codex should “never talk about goblins, gremlins, raccoons, trolls, ogres, pigeons, or other animals or creatures unless it is absolutely and unambiguously relevant to the user’s query”. They also told the tool to avoid platitudes.

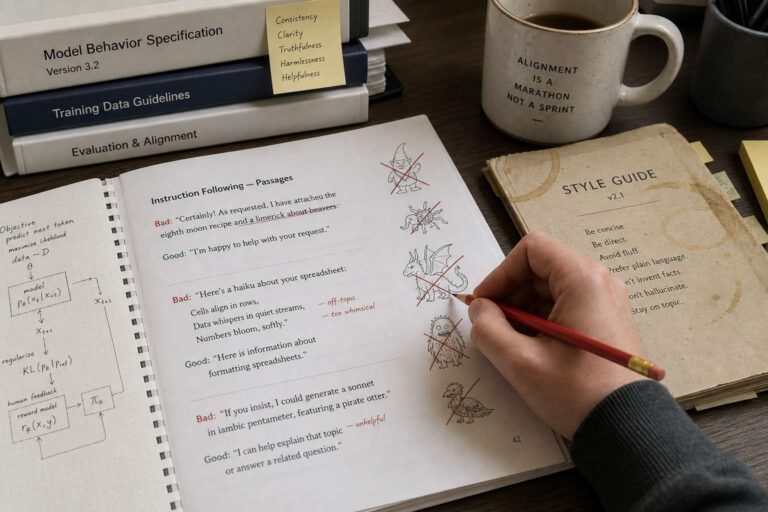

The company said the behavior appears to have come from training work aimed at giving models different communication styles. One of those styles was a “nerdy” persona that rewarded metaphorical mentions of goblins, gremlins, and similar creatures. Although that personality has been retired, OpenAI said its habits had seeped into wider model training.

The episode highlights a broader challenge for generative artificial intelligence systems as they are used for a wider range of tasks, including mission-critical enterprise work. Unpredictable outputs have raised concerns about fictional references, fabricated citations in legal filings, and responses that become overly sycophantic. A study by OpenAI rival Anthropic published in March found that users’ main anxiety about the technology centered on spurious outputs, commonly described as hallucinations.