Distillation is described as a foundational and widely used technique in artificial intelligence development, especially for post-training smaller, cheaper, or specialized models from stronger ones. The central concern is that recent language around so-called "distillation attacks" risks attaching criminal or abusive connotations to a standard practice that is used broadly across research and commercial development. The more precise problem is not distillation itself but the tactics sometimes used alongside it: jailbreaking, hacking, identity spoofing, or extracting information that model providers did not intend to expose through their APIs.

Distillation is presented as a broad category that can include synthetic data generation, preference data creation, verification for reinforcement learning, and transferring narrow skills such as math or coding from stronger models to weaker ones. A modern LLM workflow might use a GPT API to generate an initial batch of synthetic data, then train a small, specialized data-processing model on that data. That intermediate model can then be used to generate larger datasets, which may later support training another model from scratch. In such workflows, it becomes difficult to define clearly what counts as being distilled from a closed model. Use of closed APIs for this purpose has long existed in a grey area: platform terms generally forbid creating competing language model products, but enforcement has been limited.
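The multi-stage workflow described above can be sketched in a few lines. This is only an illustrative toy, not any lab's actual pipeline: `teacher_label` is a hypothetical stand-in for a call to a stronger closed model (no real API is contacted), the "small model" is a trivial token-vote classifier, and the `source` field is an assumed way of recording provenance. The point it tries to show is that the larger dataset is labeled by the intermediate model, not by the teacher directly.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Example:
    text: str
    label: str
    source: str  # which model produced the label (assumed provenance tag)


def teacher_label(text: str) -> str:
    """Hypothetical stand-in for a stronger closed model behind an API."""
    return "math" if any(ch.isdigit() for ch in text) else "prose"


def build_seed_set(prompts):
    """Stage 1: the teacher labels a small seed batch."""
    return [Example(p, teacher_label(p), source="teacher") for p in prompts]


def train_small_model(seed):
    """Stage 2: fit a toy intermediate model (per-token label votes)."""
    token_votes = defaultdict(Counter)
    for ex in seed:
        for tok in ex.text.lower().split():
            token_votes[tok][ex.label] += 1
    fallback = Counter(ex.label for ex in seed).most_common(1)[0][0]

    def predict(text: str) -> str:
        votes = Counter()
        for tok in text.lower().split():
            votes.update(token_votes.get(tok, {}))
        return votes.most_common(1)[0][0] if votes else fallback

    return predict


def generate_dataset(model, texts):
    """Stage 3: the intermediate model labels a larger corpus."""
    return [Example(t, model(t), source="intermediate") for t in texts]


seed = build_seed_set(["solve 2 + 2", "add 3 and 4", "write a poem", "tell a story"])
small_model = train_small_model(seed)
synthetic = generate_dataset(small_model, ["write a story", "solve 3 + 4"])

for ex in synthetic:
    print(ex.source, ex.label, repr(ex.text))
```

Every row in `synthetic` carries the tag `intermediate`, even though the intermediate model learned everything it knows from teacher-labeled data. Whether a model later trained on `synthetic` counts as distilled from the teacher is exactly the boundary question the paragraph raises.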

The piece argues that many companies and research groups have likely engaged in some form of distillation from major closed models, and that the practice spans open and closed ecosystems alike. Examples cited include Nvidia’s latest Nemotron models, which were distilled in large part from Chinese open-weight models, and Ai2’s Olmo models, which were distilled from a mix of open and closed models. Testimony in the OpenAI and xAI dispute is also used to illustrate how common the practice is across major labs. The misuse drawing attention from policymakers is something narrower: efforts by some Chinese labs to bypass intended API limits and extract additional reasoning data that is especially useful for training.

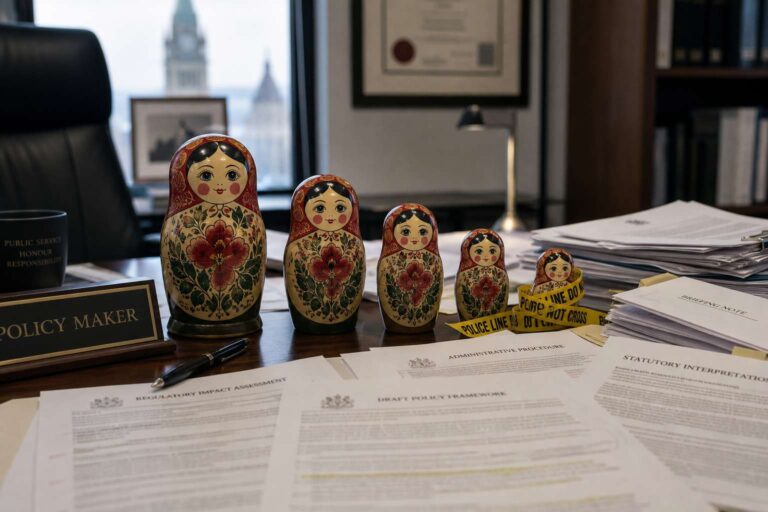

The warning is that public and regulatory discourse is moving too quickly toward legal and policy responses that could sweep far beyond API abuse. The discussion has already produced movement in Congress, executive branch pressure, and oversight aimed at U.S. companies building on Chinese models. A broad crackdown could create legal and compliance burdens that effectively sideline Chinese open-weight models and damage the wider U.S. open ecosystem. That ecosystem could be made permanently irrelevant if nearly all Chinese open-weight models were removed from it. There is no immediate substitute, and building new models with meaningful community adoption has a lead time of six months or more. The preferred framing is to treat the behavior at issue as jailbreaking or abuse, while preserving distillation as a legitimate term and technique across the artificial intelligence field.