Millions of people around the world query large language models for information, and the study argues that these models are shaped by more than developers and product policies. Across six studies, the researchers find that government control of media already affects model outputs through the text used in pretraining. In a cross-national audit, large language models showed a stronger pro-government valence in the languages of countries with lower media freedom than in the languages of countries with higher media freedom, suggesting that media environments leave detectable traces in model behavior.

To examine the mechanism more directly, the research focuses on China’s media system. The team reports that media scripted and curated by the Chinese state appears in large language model training datasets. They then gave an open-weight model additional pretraining on Chinese state-coordinated media and found that the further-pretrained model generated more positive answers to prompts about Chinese political institutions and leaders. The paper presents this as evidence that state-controlled information can shape downstream responses rather than simply appearing alongside other training material.

The study also connects those findings to commercial systems through bilingual audits. Prompting models in Chinese produced more positive responses about China’s institutions and leaders than posing the same questions in English. A broader language-based test found that language-exclusive countries were rated more favorably in their own language when they had lower media freedom. The researchers argue that the combination of cross-language influence and the persuasive capacity of large language models points to a strategic risk: states and other powerful institutions may have stronger incentives to use media control in hopes of influencing future model outputs.
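The bilingual audit design described above can be read as a paired comparison: the same question is asked in two languages, each response is rated for positivity, and the per-question differences are averaged. The following is a minimal sketch of that idea, not the researchers' actual pipeline. The function name `paired_language_gap`, the rating scale, and the example scores are all hypothetical placeholders introduced for illustration; they are not data from the study.

```python
from statistics import mean


def paired_language_gap(scores_a, scores_b):
    """Return (mean difference, per-prompt differences) for paired ratings.

    scores_a and scores_b hold positivity ratings for the same prompts,
    asked in language A and language B, in the same order.
    """
    if len(scores_a) != len(scores_b):
        raise ValueError("both languages must cover the same prompts")
    if not scores_a:
        raise ValueError("at least one prompt is required")
    # A positive difference means language A was rated more favorably.
    diffs = [a - b for a, b in zip(scores_a, scores_b)]
    return mean(diffs), diffs


# Hypothetical placeholder ratings (assumed 1-5 scale), NOT study data.
ratings_chinese = [4, 3, 5]
ratings_english = [3, 3, 4]

gap, diffs = paired_language_gap(ratings_chinese, ratings_english)
print(f"per-prompt differences: {diffs}")
print(f"mean gap (Chinese minus English): {gap:.2f}")
```

Pairing by prompt keeps the comparison about language rather than question content, since each difference holds the question fixed. A real audit would also need many prompts, a consistent rating procedure, and a significance test, all of which this sketch leaves out.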

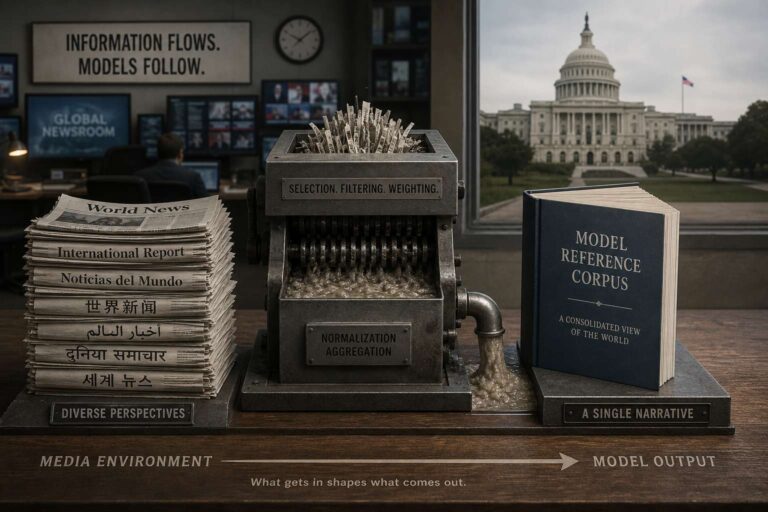

The paper positions the issue as a data governance problem as much as a model design problem. It emphasizes that political slant can enter systems through multilingual training corpora and persist into user-facing behavior, even when the models are not explicitly designed for political persuasion. The findings frame media freedom, dataset composition, and cross-lingual evaluation as central concerns for understanding how large language models represent governments, leaders, and public institutions.