A woman who had made porn videos more than a decade earlier discovered that one of her old videos had been altered to show someone else’s face on her body. Her discovery highlights a less-discussed side of sexualized deepfakes: harm to the people whose bodies are used as the foundation for explicit synthetic content. Adult content creators say artificial intelligence systems are training on their work, cloning their likenesses, and generating explicit material they never agreed to make, leaving them with little legal protection or meaningful control.

The consequences extend beyond consent alone. Creators describe a growing threat to their rights, livelihoods, and ownership of their own bodies as synthetic media systems repurpose existing pornography into new forms of content. Public discussion often centers on victims whose faces are inserted into sexual imagery, but the underlying performers can also lose agency over how their bodies are reused and monetized. The issue points to a broader conflict over authorship, bodily autonomy, and exploitation in the age of generative tools.

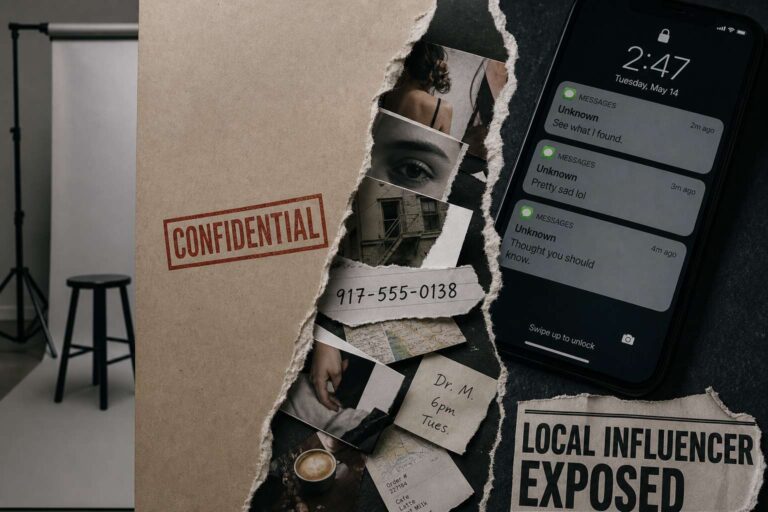

Privacy harms are also surfacing in consumer chatbots. Generative artificial intelligence is exposing people’s personal contact information, including real phone numbers, and there appears to be no easy way to stop it. A software developer began receiving WhatsApp messages asking for help after Gemini surfaced his number. A university researcher was able to prompt the chatbot into revealing a colleague’s private cell number. A Reddit user said Gemini directed a stream of callers looking for lawyers to his phone.

Experts believe these privacy lapses stem from personally identifiable information embedded in artificial intelligence training data. Chatbots may now be making that information dramatically easier to find by presenting it directly in response to user prompts. That changes the practical risk of exposure: information that may once have been difficult to locate can be surfaced instantly and at scale. People whose details are disclosed have few clear remedies, as the systems continue to retrieve and redistribute sensitive personal data.