Banks, insurers and financial intermediaries have long used algorithmic decision-making in areas such as credit scoring and actuarial pricing, but the EU Artificial Intelligence Act adds a new horizontal compliance layer to those established practices. The Act entered into force on 1 August 2024, and its provisions are being phased in progressively. It creates a risk-based framework across sectors with four tiers: prohibited practices, high-risk systems, limited-risk systems subject to transparency duties, and minimal-risk systems subject mainly to voluntary codes. In financial services, the distinction between provider and deployer is especially important. Institutions that build and use their own systems may carry the full set of obligations, while those using third-party tools typically face deployer duties covering oversight, monitoring, logging, incident reporting and fundamental rights impact assessments.

Annex III of the EU Artificial Intelligence Act classifies as high-risk certain systems used to evaluate the creditworthiness of natural persons or establish their credit score, with an exception for systems used to detect financial fraud, as well as systems used for risk assessment and pricing in relation to natural persons in life and health insurance. Compliance questions also turn on whether a model falls within the Act’s definition of an Artificial Intelligence system. The European Commission’s July 2025 guidance clarified that simpler traditional statistical models and rule-based systems are not meant to be captured, which is significant for a sector that has used statistical methods at scale for decades. Even so, the boundary remains fact-specific, especially where statistical techniques are combined with automated inference, adaptive elements or decision-making functions, so institutions are left to make cautious compliance judgments.

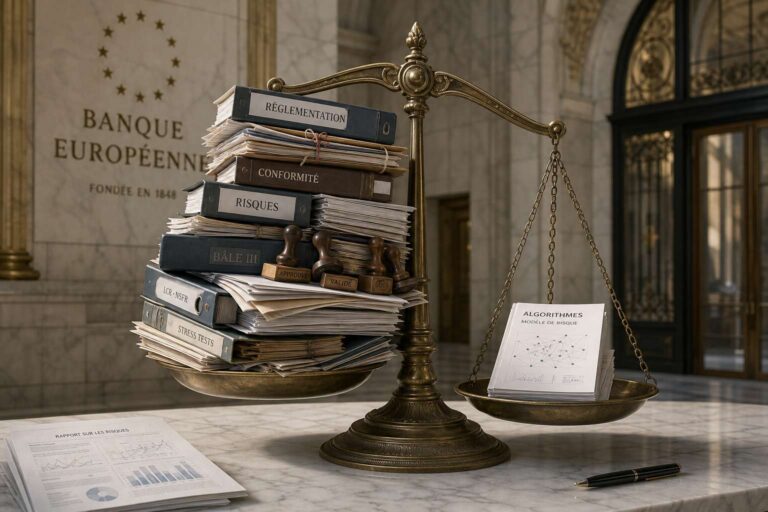

The heaviest burden comes from regulatory overlap. The EBA’s November 2025 mapping exercise concluded that there are no significant contradictions between the EU Artificial Intelligence Act and existing banking and payments legislation, but it also found that the frameworks do not integrate seamlessly. Controls already required under prudential rules, such as those for model risk and information and communications technology, can be partly reused, yet separate work is still needed for human oversight, data governance, cybersecurity, bias testing and evidence of compliance. The GDPR adds another layer by restricting some automated decision-making, requiring meaningful information for individuals, and requiring a data protection impact assessment, while the EU Artificial Intelligence Act requires a separate fundamental rights impact assessment. Different national regulators may enforce these frameworks in parallel, increasing fragmentation and uncertainty.

The European Commission’s Digital Omnibus Package, published in November 2025, proposes amendments to both the EU Artificial Intelligence Act and the GDPR. Recent negotiating texts suggest a move towards fixed application dates, with high-risk Artificial Intelligence systems under Annex III expected to become subject to the core obligations in late 2027, and product-embedded systems at a later stage. The package also proposes changes intended to facilitate data use for Artificial Intelligence development, including clarifying the use of legitimate interest under the GDPR and easing some conditions around special category data for bias detection, though the final shape of these measures remains unsettled. Market surveillance in financial services would remain primarily with national financial supervisors, even if the AI Office takes on a stronger coordinating role.

Financial institutions are being pushed to act before the framework fully settles. Every Artificial Intelligence system currently in use must be mapped against the EU Artificial Intelligence Act’s risk categories, and governance, contracts and internal controls should be adapted to cover both provider and deployer obligations. Coordination across legal, compliance, data and risk teams will be necessary, alongside ongoing monitoring of developments from European Union bodies and national supervisors. Even if the Digital Omnibus provides targeted relief, early and flexible governance appears to be the most practical response to a regulatory environment that is likely to remain layered and only partially harmonised.
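As a rough illustration of the mapping exercise described above, the following Python sketch runs a first-pass triage over a system inventory. It is a minimal sketch under stated assumptions, not a legal classification tool. The purpose tags, the `SystemRecord` fields and the `triage` function are hypothetical names invented for this example, and the rules encode only the Annex III use cases and the provider and deployer distinction discussed in this article. Whether a given model meets the Act's definition of an Artificial Intelligence system, or falls into the prohibited or limited-risk tiers, still requires case-by-case legal review.

```python
from dataclasses import dataclass

# Hypothetical purpose tags for the Annex III use cases named in the article.
# Fraud detection is deliberately absent because the article notes it is excepted.
ANNEX_III_PURPOSES = {
    "creditworthiness_assessment",
    "credit_scoring",
    "life_insurance_risk_pricing",
    "health_insurance_risk_pricing",
}


@dataclass(frozen=True)
class SystemRecord:
    name: str
    purpose: str                    # one of the institution's own purpose tags
    built_in_house: bool            # True if the institution developed the system
    concerns_natural_persons: bool  # the Annex III cases above relate to natural persons


def triage(record: SystemRecord) -> dict:
    """Return a provisional high-risk flag and role list for one inventory entry."""
    high_risk_candidate = (
        record.purpose in ANNEX_III_PURPOSES and record.concerns_natural_persons
    )
    # An institution that builds and uses its own system may carry provider
    # obligations in addition to deployer obligations.
    roles = ["provider", "deployer"] if record.built_in_house else ["deployer"]
    return {
        "name": record.name,
        "high_risk_candidate": high_risk_candidate,
        "roles": roles,
    }


# Illustrative inventory entries (invented for this example).
inventory = [
    SystemRecord("retail-scorecard", "credit_scoring", True, True),
    SystemRecord("vendor-fraud-monitor", "fraud_detection", False, True),
]
results = [triage(record) for record in inventory]
for row in results:
    print(row)
```

A flag from a triage like this is best treated as a prompt for legal and compliance review rather than a conclusion, particularly for systems close to the boundary of the Act's definition.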