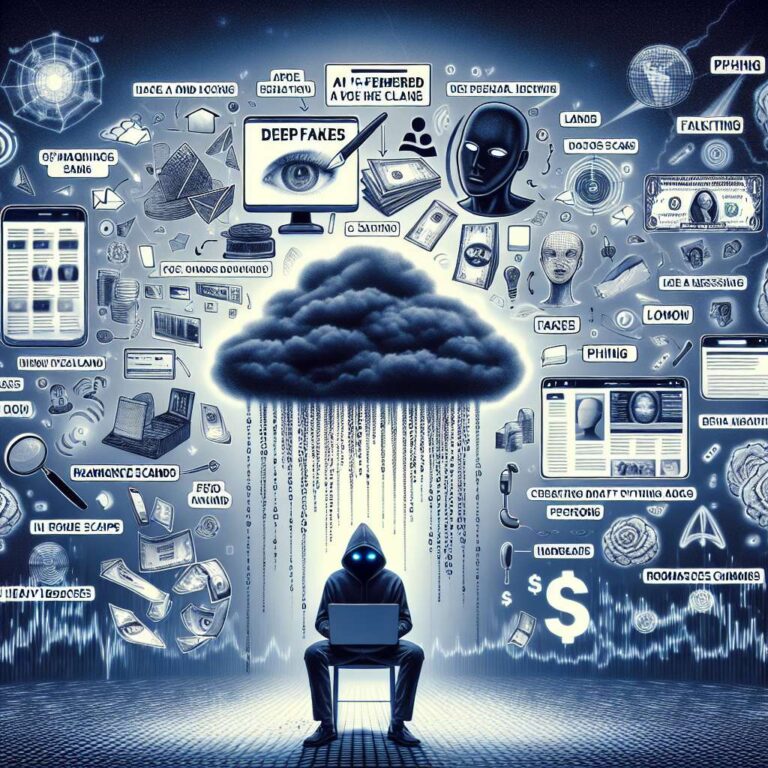

Artificial intelligence (AI) tools are reshaping fraud in New Zealand, with criminals using voice cloning, deepfakes, and automated website creation to intensify long-standing scams across voice, video, web, and messaging channels. Cybersecurity firm Norton says AI and deepfake technology are being applied to impersonation, phishing, romance fraud, and investment schemes that now target both individuals and organisations. The company highlights five key AI-enabled fraud types in 2025: voice cloning, AI-built phishing websites, AI-assisted romance scams, business email compromise using synthetic media, and fake celebrity endorsement schemes.

Norton notes that hundreds of thousands of AI-generated scam sites have appeared globally this year. Figures from the National Cyber Security Centre (NCSC) show direct financial losses of NZ$5.7 million in New Zealand in the most recent quarter. The trend is increasingly relevant to cyber, crime, and professional liability insurance portfolios.

Voice cloning has become a prominent threat because widely available tools can recreate a person’s voice from only a short audio sample, allowing scammers to pose as relatives, colleagues, or bank staff and press for urgent financial action. Citing BNZ, Norton says voice cloning is now regarded as one of the main AI-related scam concerns in New Zealand, as callers can closely reproduce the voices of trusted individuals and undermine informal voice-based checks.

At the organisational level, traditional business email compromise is evolving into a multi-channel risk in which spoofed emails are combined with AI-generated audio, and sometimes synthetic video, trained on public recordings of senior executives. Norton referenced a reported incident at advertising group WPP in which a cloned CEO voice was allegedly used during a video-style call to seek credentials and authorisation to move funds. The case illustrates how converged email, voice, and video can make fraudulent instructions harder for staff to challenge, and it raises underwriting questions about payment verification and executive impersonation controls.

On the consumer and SME front, Norton reports an uptick in phishing sites built with AI-based website tools that mimic banks, delivery firms, and major technology brands, complete with familiar layouts and customer support features. According to Norton, New Zealand has recorded a 416% increase in web skimming attempts this year, and the firm observes hundreds of new malicious AI-generated sites emerging globally each day, often using small URL and brand variations to fool users.

Romance and friendship scams are also being reshaped, with AI chatbots sustaining long-running conversations and deepfake or heavily edited images used as false proof of identity. Avast researchers, cited by Norton, found that sextortion scams in New Zealand rose by 137% in early 2025, with attackers using AI-generated deepfake material and breached personal data to threaten exposure unless victims pay.

These incidents sit within a broader cyber landscape detailed in the NCSC Cyber Security Insights report for April 1 to June 30, 2025. The report recorded 1,315 cyber security incidents, including 514 scam and fraud events and 374 phishing and credential harvesting cases. Direct financial losses for the quarter totalled NZ$5.7 million, down from NZ$7.8 million. The 50 incidents involving losses of NZ$10,000 or more accounted for NZ$5.3 million, or 94% of total reported loss.