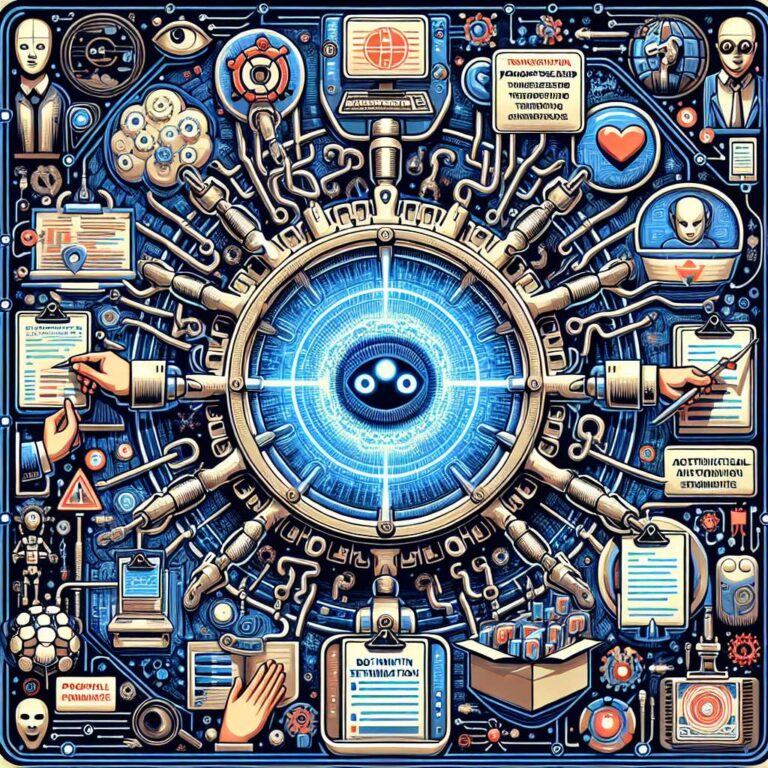

Social engineering techniques are beginning to converge with advances in artificial intelligence and large language models, creating a new class of threats that target automated systems as much as human users. Instead of focusing solely on deceiving people through phishing emails or fraudulent messages, malicious actors are starting to explore how carefully crafted prompts can influence artificial intelligence behavior and outcomes. This evolution reframes social engineering as an attack on the interaction layer between humans and intelligent systems, where prompts become the primary vehicle for manipulation.

As organizations adopt large language models for specific tasks, such as customer support, coding assistance, or document summarization, each deployment presents a distinct attack surface. Carefully designed malicious prompts can attempt to override safeguards, exfiltrate sensitive information, or induce the system to perform actions outside its intended purpose. In this context, the prompt itself functions like a phishing message, but the target is the artificial intelligence driven agent rather than a person reading an email. The combination of automated decision making and natural language interfaces amplifies both the potential productivity benefits and the risks of subtle, hard to detect manipulation.

Defending against this emerging category of attacks requires security practices that treat prompts, model configurations, and workflow integrations as critical assets. Traditional awareness training that teaches employees to spot suspicious messages must be complemented by controls that constrain what artificial intelligence systems can access and how they respond to unexpected instructions. Governance over model usage, monitoring for anomalous outputs, and clear boundaries on data exposure become central to limiting damage from prompt based social engineering. As malicious experimentation grows, security teams will need to adapt threat models to include not only humans deceived by phishing, but also intelligent systems persuaded by adversarial prompts.