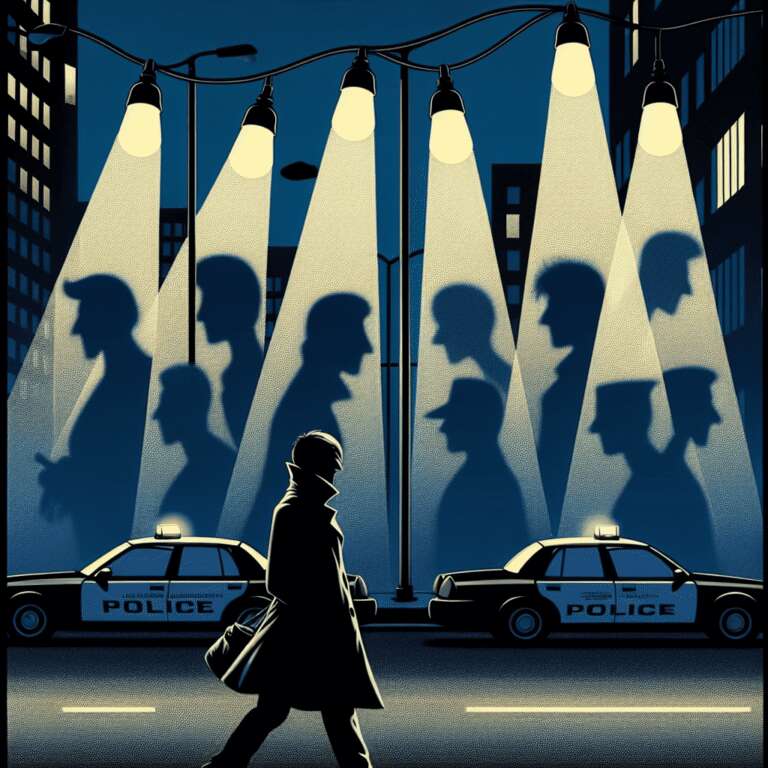

Police departments and federal agencies in the United States are increasingly using a new artificial intelligence (AI) surveillance tool that identifies individuals not by their faces but by other physical attributes, such as body size, gender, hair color and style, clothing, and accessories. This approach lets law enforcement sidestep the growing number of legal restrictions on facial recognition technology. According to advocates at the ACLU, this marks the first widespread use of such tracking systems in the US, and it carries significant potential for abuse, particularly amid growing calls to monitor protesters, immigrants, and students.

The rapid adoption of AI in policing is driven by more than 18,000 independent police departments across the country, each with significant autonomy to decide which technologies to acquire. Companies such as Flock and Axon sell comprehensive sensor suites, ranging from cameras to gunshot detectors and drones, along with AI tools that analyze the large volumes of data these devices collect. Police departments cite improved efficiency, reduced officer workload, and faster response times as their main reasons for investing in these technologies. However, these advances raise difficult questions about transparency, oversight, and trust between law enforcement and the communities it serves.

Police deployments of AI-driven devices have already stirred controversy. In Chula Vista, California, police drones have occasionally proved useful in emergencies but have also prompted lawsuits and privacy concerns, especially among residents of poorer neighborhoods. Critics, including the ACLU's Jay Stanley, point to the inadequacy of current regulation: there is no federal oversight, and departments tend to deploy tools first and seek community feedback later, if at all. Crucially, because these new tracking systems do not rely on biometric data, they often escape existing scrutiny and restrictions. Calls are mounting for public hearings, independent audits, and clear usage guidelines before AI is adopted any further in policing, as the pace of technological change increasingly outstrips the development of meaningful policy safeguards.