NVIDIA presented GTC as a milestone for physical AI, with robots, vehicles and factories moving beyond single use cases and isolated deployments into broader enterprise workloads. The company highlighted frontier models including NVIDIA Cosmos 3, NVIDIA Isaac GR00T N1.7 and NVIDIA Alpamayo 1.5, alongside new infrastructure intended to support world modeling, humanoid skills and autonomous driving. OpenUSD sits at the center of that strategy as a common scene-description language for combining CAD data, simulation assets and real-world telemetry in a shared, physically accurate environment.

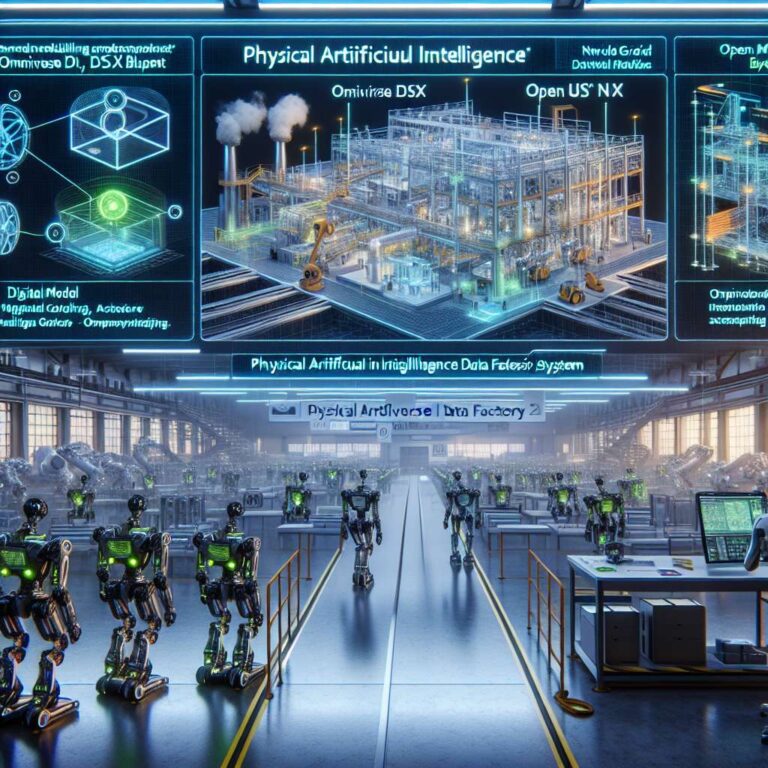

A major focus was simulating industrial systems before they are built or deployed. NVIDIA introduced the Omniverse DSX Blueprint as a reference architecture for creating a single digital twin of an entire AI factory, covering thermals, power grids, network load and mechanical systems. NVIDIA also introduced its Physical AI Data Factory Blueprint, an open reference architecture built on NVIDIA Cosmos open world foundation models and the NVIDIA OSMO operator. The blueprint unifies data curation, augmentation and evaluation into a single pipeline, enabling developers to create diverse, long-tail datasets from limited real-world inputs. Microsoft Azure and Nebius are the first cloud platforms to offer the blueprint.

NVIDIA framed compute as the new engine for producing training data, arguing that real-world data alone no longer scales for physical AI. Open-source agentic frameworks such as OpenClaw extend that stack into operations by running long-lived workflows with tools, memory and messaging interfaces. On the design side, NVIDIA emphasized CAD-to-OpenUSD pipelines that use Omniverse Kit and Isaac Sim to turn engineering data into simulation-ready assets for real-time rendering, testing and collaboration. FANUC and Fauna Robotics are using this workflow to accelerate robotic system design and validation.

The company also linked digital twins directly to manufacturing and logistics deployment. The NVIDIA Mega Omniverse Blueprint is positioned as a way to design, test and optimize robot fleets and AI agents in facility-scale digital twins before deployment. KION, with Accenture and Siemens, is using the blueprint to build warehouse digital twins for GXO and train NVIDIA Jetson-based autonomous forklifts. NVIDIA said ABB Robotics, FANUC, KUKA and Yaskawa, which have a combined global installed base of over 2 million robots, are using Omniverse libraries and Isaac simulation frameworks to validate applications and production lines through digital twins, while integrating NVIDIA Jetson modules for real-time AI inference. FieldAI, Skild AI and Generalist AI were also highlighted for using NVIDIA Cosmos and Isaac-based simulation to develop robot brains and synthetic data workflows.