At GDC, Nvidia introduced a set of local artificial intelligence video generation tools for concept development and storyboarding, built to run on Nvidia RTX GPUs and the DGX Spark artificial intelligence development desktop. The updates aim to let artists and developers keep more of their video generation and refinement process on local hardware while using existing RTX resources more efficiently.

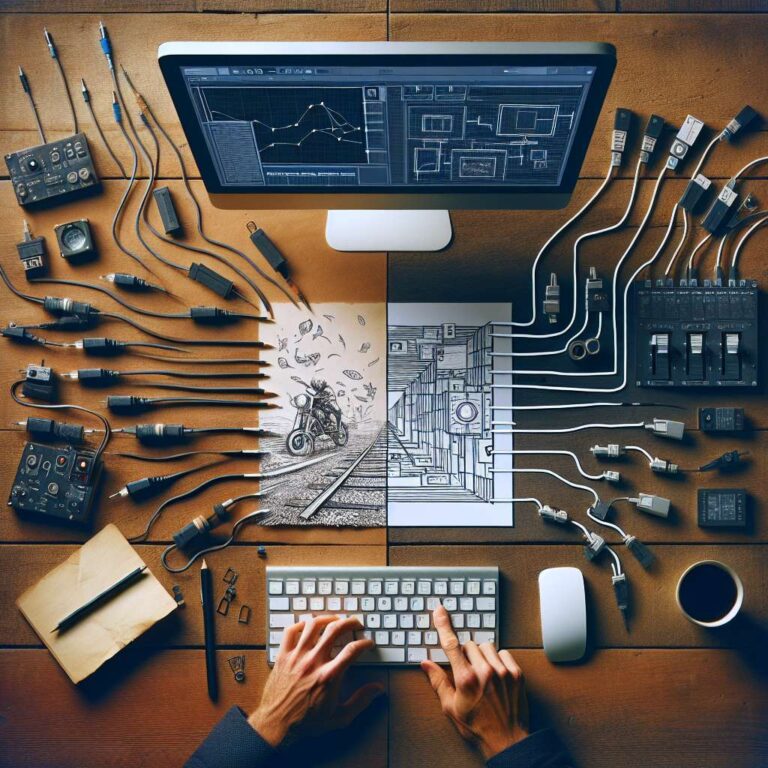

ComfyUI, a widely used generative artificial intelligence tool known for its powerful but complex node-based interface, is becoming significantly more accessible. To help artists who are unfamiliar with visual programming concepts such as node graphs, ComfyUI introduced App View, a simplified mode that generates content from a text prompt and a few core parameters. The traditional workspace remains available as Node View, and users can switch between the two, choosing approachable controls or granular tuning depending on the task.

The push toward local workflows also addresses the technical challenges of generating high-quality 4K Ultra HD video on a single machine, which entails balancing three constraints: generation speed, video memory limits, and overall control. Artists typically generate smaller, faster previews before upscaling them, a process that traditionally took minutes for just a 10-second clip. To accelerate this pipeline, Nvidia released RTX Video Super Resolution as a node available directly within ComfyUI, enabling rapid upscaling to 4K. Artificial intelligence developers can also access this upscaling technology through a free Python package available on PyPI. The tool takes advantage of Tensor Cores on GeForce RTX GPUs, tying the new software capabilities tightly to the company’s existing consumer and professional graphics hardware.
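
To give a rough sense of why artists preview small and upscale later, the sketch below compares raw pixel counts for a 10-second clip at a preview resolution and at 4K Ultra HD. The 1280x720 preview size and the 24 frames-per-second rate are assumptions chosen for illustration, not figures from Nvidia, and pixel count is only a loose proxy for generation time and video memory use.

```python
# Illustrative arithmetic only. The preview resolution and frame rate
# below are assumptions, not values reported by Nvidia or ComfyUI.

def frame_pixels(width: int, height: int) -> int:
    """Number of pixels in a single frame."""
    return width * height


def clip_pixels(width: int, height: int, fps: int, seconds: int) -> int:
    """Total pixels across every frame of a clip."""
    return frame_pixels(width, height) * fps * seconds


PREVIEW = (1280, 720)    # assumed preview resolution (720p)
UHD_4K = (3840, 2160)    # 4K Ultra HD
FPS = 24                 # assumed frame rate
SECONDS = 10             # clip length mentioned in the article

preview_total = clip_pixels(*PREVIEW, FPS, SECONDS)
uhd_total = clip_pixels(*UHD_4K, FPS, SECONDS)
ratio = uhd_total // preview_total

print(f"Preview clip: {preview_total:,} pixels")
print(f"4K UHD clip:  {uhd_total:,} pixels")
print(f"4K UHD carries {ratio}x the pixels of the preview")
```

Under these assumptions, the 4K clip carries nine times as many pixels as the preview, which is why generating at low resolution and handing the final step to a dedicated upscaler can ease both the speed and memory constraints.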