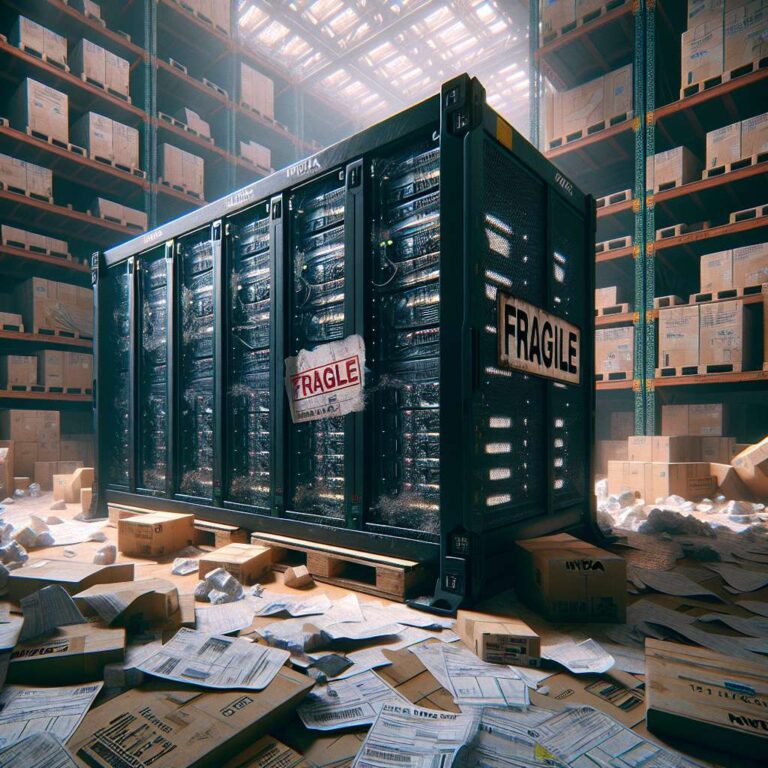

Nvidia's flagship artificial intelligence servers, including the advanced GB200 Grace Blackwell systems, are reportedly appearing in Chinese markets despite strict US export restrictions. The Financial Times uncovered multiple sales contracts and filings that point to a robust black market for these servers. Distributors exploit loopholes and grey-market channels, some routed through intermediary hubs such as Singapore, to supply high-end Nvidia hardware, including B200, H100, and H200 models, to buyers in Chinese provinces such as Anhui.

The US government, particularly under the Trump administration, has intensified efforts to curb the flow of American artificial intelligence hardware into China, citing national security and technological competition. Despite these measures, more than a billion dollars' worth of Nvidia equipment has slipped past export rules. Many devices are relabeled under brands such as Supermicro (SMCI) to further mask their origin, and listings for this hardware appear openly on popular Chinese retail platforms. Some vendors even offer live demonstrations of working hardware to assure buyers of its authenticity and capability.

Although the number of GB200 clusters sold through these backchannels is small compared with the world's largest artificial intelligence clusters, the hardware is more than adequate for low- and mid-tier Chinese cloud service providers. The persistent availability of these restricted systems underscores ongoing weaknesses in supply chain enforcement. It remains to be seen how the US will respond, whether by closing further export loopholes or by tightening scrutiny of distributors suspected of rerouting hardware, as China continues to procure high-performance artificial intelligence compute through unconventional channels.