Yann LeCun, credited with pioneering several core neural network methods and serving as Meta’s chief AI scientist, is reported to be at odds with the industry’s fixation on large language models. After a roughly 40-year career shaping modern machine learning, he now finds himself increasingly sidelined at Meta as the company commits to scaling up language models. Reports cited in the article say he may leave Meta to launch a startup focused on “world models,” a different research path he sees as the right route to more capable and reliable AI systems.

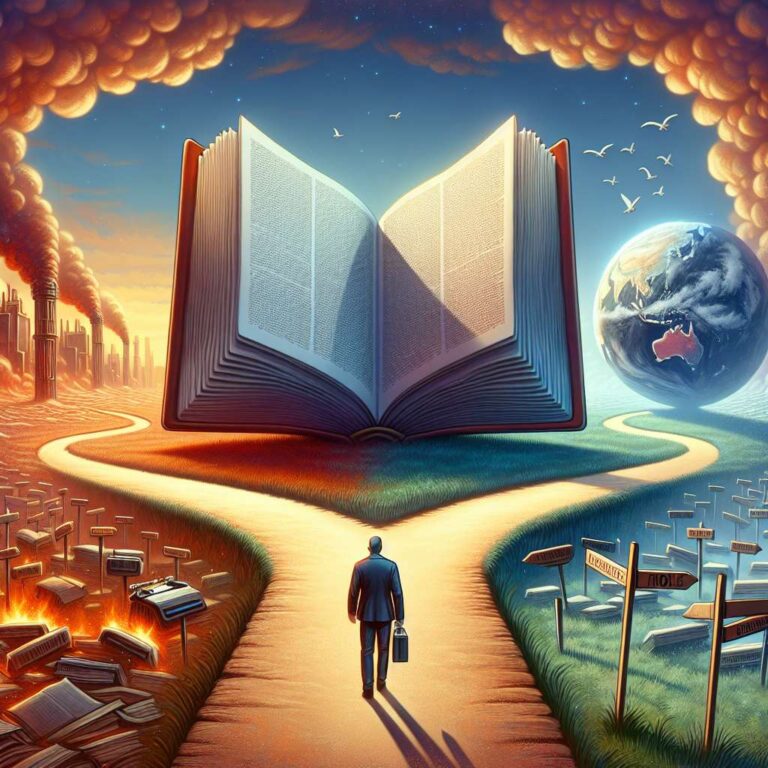

LeCun’s critique centers on the limits of language-only systems. He argues that large language models lack robust reasoning, genuine understanding, and grounding in the physical world. His alternative vision emphasizes models that predict and interact with their environments, learning a model of how the world works rather than only predicting the next token in a sequence. That perspective revives a long-standing debate over whether language models alone can produce human-level intelligence or whether architectures that learn from and predict their environments are necessary.

The disagreement is more than an academic dispute. The article highlights implications for energy use, climate modeling, scientific discovery, and environmental decision-making: large language models are becoming increasingly compute-intensive, while world-model approaches could, in theory, be more efficient and produce more trustworthy outputs for sustainability and risk forecasting. Meta’s continued investment in language models places the company on one side of a clear philosophical divide. If LeCun departs, his startup could become a new pole in the AI landscape, attracting researchers disillusioned with the language-model arms race and sharpening the debate over whether future leaps will come from ever-bigger models or smarter architectures. As one report put it, he has become “the odd man out at Meta, even as one of the godfathers of AI.”