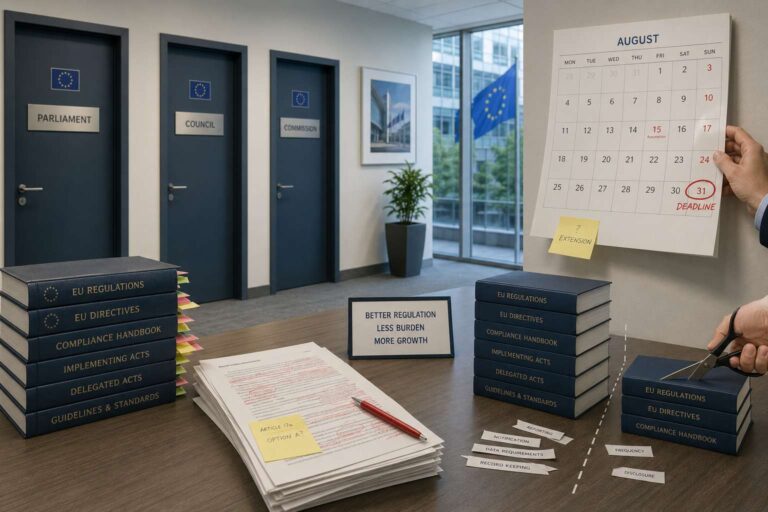

Trilogue negotiations between the European Parliament, the Council of the European Union and the European Commission are now underway on the Artificial Intelligence Omnibus. The Commission introduced this package on 19 November 2025 to simplify parts of the European Union's existing Artificial Intelligence framework. Political agreement could be reached as early as 28 April 2026, with formal adoption expected by July. That would come just ahead of 2 August 2026, the date on which the EU Artificial Intelligence Act's obligations for high-risk Artificial Intelligence systems are due to start applying. The compressed schedule reflects pressure to clarify the rules before the law's remaining provisions take effect.

The clearest point of agreement is a delay to the compliance timetable for high-risk Artificial Intelligence systems. Both the Council and Parliament support postponing the original 2 August 2026 deadline under the EU Artificial Intelligence Act. Stand-alone high-risk Artificial Intelligence systems classified under Annex III would move to 2 December 2027. Artificial Intelligence systems embedded in products regulated under Annex I would move to 2 August 2028. The change responds to industry concerns about gaps in harmonised standards, conformity assessment infrastructure and regulatory guidance. The extra time is intended to help organisations complete risk classification, establish governance frameworks, and prepare technical documentation and monitoring systems.

Both institutions also back a targeted prohibition on so-called nudifier applications. The proposed ban covers Artificial Intelligence-enabled tools that alter, manipulate or artificially generate realistic images or videos to depict sexually explicit activities or the intimate parts of an identifiable person without consent. The approach extends beyond purpose-built products to systems where that misuse is reasonably foreseeable from the system’s functionality. That would push organisations to build guardrails into design and deployment, carry out risk and impact assessments, and monitor for harmful use cases that could infringe individual rights and freedoms.

Another area of alignment is proportionality for smaller businesses. The Parliament and Council support extending measures currently available to small and medium-sized enterprises (SMEs) to small mid-cap enterprises (SMCs) as well. Recent policy discussions suggest that SMCs may be defined by ceilings of up to 750 employees and €150 million in annual turnover. The aim is to scale compliance expectations to organisational size without removing the underlying legal duties. The proposed tools include simplified guidance, reduced fees, and access to regulatory sandboxes and standardised documentation templates.

Important disagreements remain. The Parliament wants to narrow the definition of high-risk. It would make clear that features used only for assistance, optimisation, efficiency, automation or convenience should not count as safety functions unless their failure would create actual safety risks. The Council has not proposed a matching limit. The two sides also diverge on Artificial Intelligence literacy. The Council favours a non-binding encouragement model, while the Parliament would retain a binding duty on organisations to take measures that improve Artificial Intelligence literacy among their staff. Another unresolved issue is whether systems that meet the Cyber Resilience Act's essential cybersecurity requirements should be presumed compliant with Article 15 of the EU Artificial Intelligence Act. If talks slip past the summer, the original rules will still take effect as written, including the current 2 August 2026 timeline.