The U.S. General Services Administration has drafted a new contract clause, “Basic Safeguarding of Artificial Intelligence Systems,” that would set standards for the use of Artificial Intelligence in federal procurement. The proposal covers data management, access to government data, breach reporting, testing methods, and system updates. Business advocacy groups argue that parts of the language could reduce competition, raise costs to the government, and discourage providers from participating in the federal market.

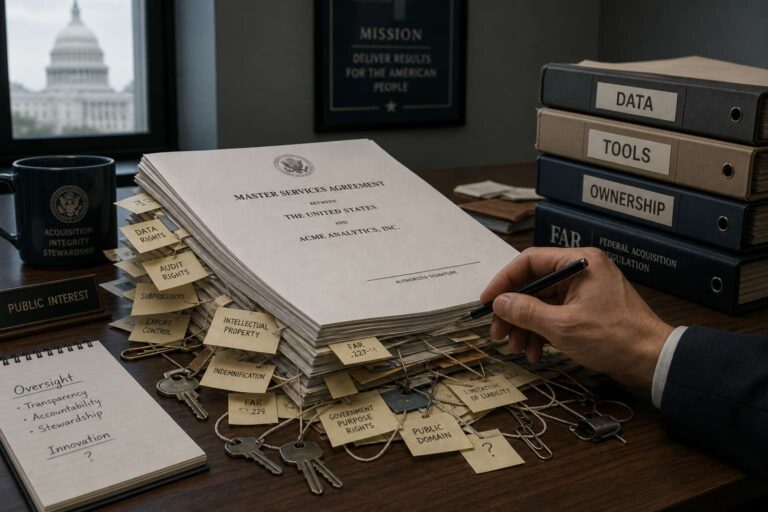

A central concern is the clause’s treatment of intellectual property. The draft defines custom development broadly to include modifications, customizations, configurations, enhancements, associated implementations, workflows, and related deliverables created for the government under a contract. For Artificial Intelligence contracts, the government would retain ownership of government data as well as all custom developments. The U.S. Chamber of Commerce warned that this language could be read to give the government ownership of fine-tuned models, configurations, and enhancements derived from a contractor’s proprietary technology and pre-existing intellectual property.

The proposal also defines “Artificial Intelligence systems” and “Artificial Intelligence service” providers expansively. Its scope would extend beyond widely recognized Artificial Intelligence products such as large language models and generative Artificial Intelligence to include back-end infrastructure, security monitoring tools, and machine learning functions embedded in standard commercial software. That breadth could create compliance uncertainty and pull in a wide range of commercial offerings that were not the intended target of the clause.

Reporting obligations are another major issue. Contractors would be required to disclose all Artificial Intelligence systems used in performing the contract, including possible modifications. This could force businesses to report and track Artificial Intelligence within common off-the-shelf business tools, including software with incidental or embedded Artificial Intelligence features that may be difficult to identify. The clause also makes the prime contractor solely responsible for compliance by all service providers, including for systems the contractor does not own or operate, which would expand liability beyond established commercial practice.

The draft further bars the use of foreign Artificial Intelligence systems by requiring that all tools and components be developed, manufactured, and controlled by U.S.-based entities. Although the provision is aimed at promoting domestic technology, critics say its wording is unclear and could unintentionally limit the use of open-source technologies, globally developed components, and common commercial tools used in research and development. Business groups are pressing the GSA to narrow key definitions, align the clause with existing commercial frameworks, and make clear that contractors keep rights to their pre-existing intellectual property.