PPG Industries, the 140-year-old global business founded as Pittsburgh Plate Glass, illustrates how legacy complexity can undermine artificial intelligence initiatives when strong data foundations are missing. The company operates in multiple countries and had accumulated a wide variety of data, but it struggled with basic data literacy and discoverability across its operations, which limited its ability to pursue its artificial intelligence productivity goals at scale. Bob Howden, senior IT manager of data and analytics at PPG, described problems including poor discoverability, unclear data product ownership, gaps in data lineage and the presence of “170 sources in our database,” alongside a disconnected metadata system. Together, these made it difficult to accelerate day-to-day artificial intelligence work or extract a return on data investments in 2026. To begin resolving these challenges and create a more coherent data environment, PPG turned to cataloging vendor Atlan.

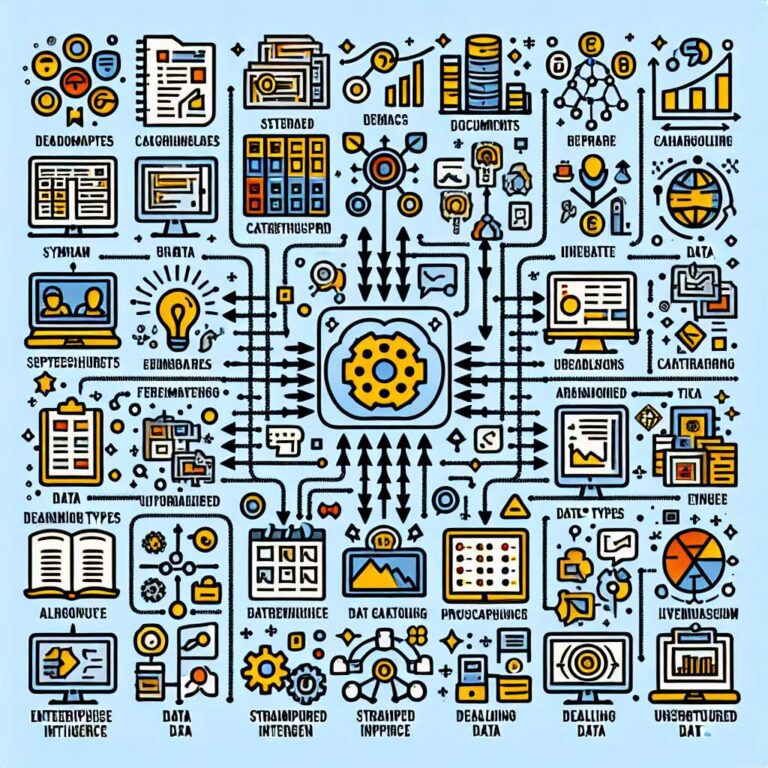

The experience is increasingly common among enterprises looking to move beyond pilots and deploy artificial intelligence systems and agents in production. Leaders are concluding that a robust data environment is a prerequisite and that data must be enriched with business context that models can interpret. Howden noted that the core challenge has shifted from basic data inaccuracy to understanding information within a broader context, so that artificial intelligence systems can produce solid answers. Beena Ammanath, who leads the Deloitte Global AI Institute, linked this shift to changing data requirements, explaining that newer versions of artificial intelligence need either streaming or unstructured data, along with more access to data. She distinguished structured data, which fits easily into spreadsheets, from unstructured data such as images and written documents, and said, “You need unstructured data to make your AI robust. AI and generative AI need more varieties of data.”

Context alone is not sufficient without alignment between structured and unstructured sources, according to Saravanan Balasubramaniam, senior vice president of artificial intelligence and data delivery at Truist Financial. He warned that if a bank such as Truist gives the same client two different answers to the same question, the inconsistency can invite legal action. He stressed that documents pulled to answer customer queries must be clear, clean and subject to identity resolution so that, for example, the same customer is not represented as William in one place and Will in another. Without this kind of alignment and identity resolution, he said, data quality problems will recur inside artificial intelligence systems and agents. Paul Bell, global head of data trust and integrity at sports betting and gambling company Entain, argued that when enterprises do provide an artificial intelligence agent with high-quality, well-synchronized structured and unstructured data, along with the right context, models can perceive and process that context faster and more effectively than humans. That, he said, strengthens the business case for disciplined data governance.
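The William-versus-Will problem that Balasubramaniam describes can be made concrete with a small sketch. The Python below is an illustration only, not a description of how Truist or any vendor handles identity resolution. The nickname table, the field names (`first`, `last`, `dob`, `source`) and the sample records are all invented for the example, and matching on a normalized name plus date of birth is a deliberately simple assumption.

```python
from collections import defaultdict

# Hypothetical nickname table (an assumption for this sketch); a production
# system would rely on a much larger curated list or a matching service.
NICKNAMES = {"will": "william", "bill": "william", "liz": "elizabeth"}


def canonical_key(first_name, last_name, date_of_birth):
    """Build a matching key from a normalized name plus date of birth."""
    first = first_name.strip().lower()
    first = NICKNAMES.get(first, first)  # map known nicknames to one form
    return (first, last_name.strip().lower(), date_of_birth)


def resolve_identities(records):
    """Group the sources of records that share a canonical key."""
    groups = defaultdict(list)
    for record in records:
        key = canonical_key(record["first"], record["last"], record["dob"])
        groups[key].append(record["source"])
    return dict(groups)


# Invented sample records: the first two describe the same customer.
records = [
    {"source": "crm", "first": "William", "last": "Reyes", "dob": "1980-04-02"},
    {"source": "loan_docs", "first": "Will", "last": "Reyes", "dob": "1980-04-02"},
    {"source": "crm", "first": "Wilma", "last": "Reyes", "dob": "1975-11-19"},
]

resolved = resolve_identities(records)
for key, sources in resolved.items():
    print(key, sources)
```

In this sketch the structured record for William and the document record for Will collapse into a single customer entity, while Wilma stays separate. Real identity resolution typically adds fuzzy matching, more attributes and human review for ambiguous cases.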