The competitive center of the Artificial Intelligence market is moving away from standalone model performance and toward platform control. OpenAI, Google, Anthropic, Amazon, and Meta are building ecosystems that combine developer communities, agent frameworks, marketplaces, enterprise integrations, and governance layers. The central argument is that selecting a large language model is no longer a narrow technical choice: it now carries long-term consequences for workflow design, switching costs, data strategy, and enterprise architecture.

The shift follows a familiar pattern from earlier technology eras, where tools first competed on capability and then consolidated into dominant platforms. Artificial Intelligence is described as entering that consolidation phase, with switching becoming harder as organizations build internal workflows and applications inside expanding ecosystems. Anthropic introduced a marketplace around Claude. OpenAI continues embedding Artificial Intelligence into enterprise workflows and productivity tools. Google is integrating Gemini across search, cloud infrastructure, and enterprise software. Amazon is weaving Artificial Intelligence into infrastructure and commerce layers. These moves point to a market where models serve as the foundation of wider platforms designed to create structural dependence over time.

The most important competitive advantage is framed as ecosystem gravity: the ability of a platform to attract developers, partners, and applications until it functions like infrastructure rather than a vendor. That changes how enterprises should evaluate providers. Benchmark tests, prompt quality, and pricing remain useful, but they are no longer sufficient on their own. Choosing a large language model now also means choosing a developer ecosystem, an application marketplace, an agent architecture, a governance framework, and an innovation roadmap. The decision is compared to selecting a cloud provider or ERP platform, which is why board-level oversight is treated as essential.

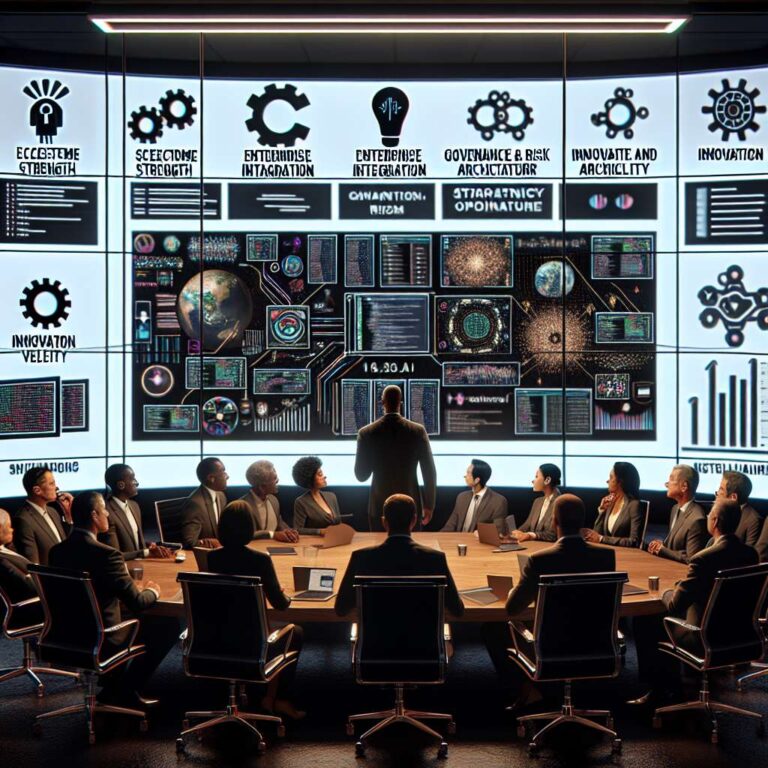

A five-part evaluation framework is proposed for leadership teams. Ecosystem strength measures the size and vitality of the developer base. Enterprise integration examines how easily a platform connects with data systems, productivity tools, cloud infrastructure, and business applications. Governance and risk architecture focuses on safety, transparency, data control, and regulatory alignment. Innovation velocity considers how quickly an ecosystem introduces new models, new tools, and enterprise integrations. Strategic optionality is presented as the most important factor, emphasizing the need to preserve flexibility across multiple ecosystems instead of becoming fully dependent on one provider.
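One way to make the five dimensions comparable across candidates is a simple weighted scorecard. The sketch below is illustrative only: the weights, the 1 to 5 rating scale, and the name `weighted_score` are assumptions, not part of the framework as described. The only constraint taken from the text is that strategic optionality carries the largest weight.

```python
# Hypothetical weights. The source names the five dimensions and says
# strategic optionality matters most, but it gives no numbers.
WEIGHTS = {
    "ecosystem_strength": 0.15,
    "enterprise_integration": 0.20,
    "governance_and_risk": 0.20,
    "innovation_velocity": 0.15,
    "strategic_optionality": 0.30,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-dimension ratings (assumed 1-5 scale) into one score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for name in WEIGHTS:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    # Weights sum to 1, so the result stays on the same 1-5 scale.
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


if __name__ == "__main__":
    # Placeholder ratings for an unnamed candidate platform.
    candidate = {
        "ecosystem_strength": 4,
        "enterprise_integration": 3,
        "governance_and_risk": 5,
        "innovation_velocity": 4,
        "strategic_optionality": 2,
    }
    print(round(weighted_score(candidate), 2))
```

A single number hides trade-offs, so a scorecard like this is better used to structure the discussion than to settle it. A low strategic optionality rating, for example, may deserve attention even when the total looks strong.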

Boards are urged to ask management which Artificial Intelligence ecosystem is shaping enterprise architecture, what dependencies are forming around a single platform, how flexibility across multiple model providers is being preserved, and whether governance frameworks can support Artificial Intelligence at scale. Claude and Gemini are presented as increasingly enterprise-grade options, with Claude associated with trust and governance and Gemini associated with scale and integration. The likely outcome is a multi-platform environment rather than a single winner, rewarding organizations that make deliberate platform choices early.