Researchers in South Korea have developed a method designed to make artificial intelligence (AI) models acknowledge unfamiliar topics instead of answering with unjustified certainty. The work targets a longstanding problem in chatbot behavior: overconfidence when handling questions or situations that fall outside a model’s training data. Improving that ability could make AI systems more dependable in settings where incorrect answers carry high risks, including autonomous driving and medicine.

Researchers from the Korea Advanced Institute of Science and Technology say previous studies have identified AI overconfidence as a major risk, especially in tasks such as medical diagnosis. Commonly used AI models like OpenAI’s ChatGPT have been shown to hallucinate, producing fabricated information because they are pushed to guess rather than admit uncertainty. The researchers argue that a fundamental source of the problem lies in how models first learn from data through artificial neural networks: small errors introduced at this early stage can spread through later training and lead to larger mistakes.

The team found that when random data was fed into a neural network during the initialisation phase, the model exhibited high confidence despite not having learned anything, a condition the researchers say leads to hallucination. To address the issue, they drew inspiration from human brain development. They noted that in humans, brain signals are generated without external input even before birth, helping the brain manage uncertainty.
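The observation about untrained networks can be illustrated with a small sketch. It is not the study's code: the two-layer network, its layer sizes, and the weight scale of 1.0 are all assumptions chosen to make the effect easy to see. The script builds a randomly initialised classifier, feeds it random inputs, and compares the average top-class probability with the chance level for the number of classes.

```python
import math
import random


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def random_matrix(rows, cols, scale, rng):
    """Gaussian random weights; the scale is an illustrative assumption."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def forward(x, w_hidden, w_out):
    """One ReLU hidden layer followed by a softmax output layer."""
    hidden = [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in w_hidden]
    logits = [sum(w * h for w, h in zip(row, hidden)) for row in w_out]
    return softmax(logits)


rng = random.Random(0)
n_inputs, n_hidden, n_classes = 32, 16, 10  # hypothetical sizes

# The network is never trained: these weights are its only "knowledge".
w_hidden = random_matrix(n_hidden, n_inputs, 1.0, rng)
w_out = random_matrix(n_classes, n_hidden, 1.0, rng)

# Record the probability assigned to the top class for each random input.
confidences = []
for _ in range(200):
    x = [rng.gauss(0.0, 1.0) for _ in range(n_inputs)]
    confidences.append(max(forward(x, w_hidden, w_out)))

mean_confidence = sum(confidences) / len(confidences)
chance = 1.0 / n_classes
print(f"chance level: {chance:.2f}, mean confidence: {mean_confidence:.2f}")
```

How far the confidence sits above chance depends heavily on the initial weight scale, so the figure printed here says nothing about any particular production model.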

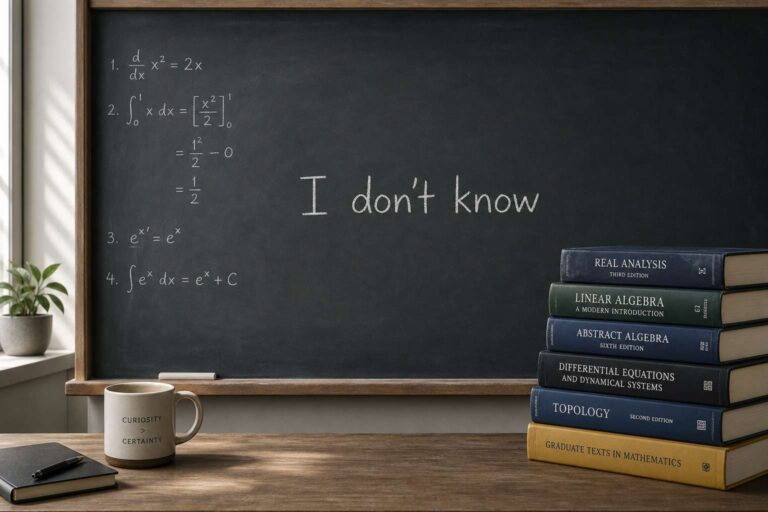

Using that idea, the scientists created a process in which the neural network backbone of an AI model undergoes brief pre-training with random noise inputs before actual learning begins. According to the researchers, this warm-up stage helps the model establish a baseline by adjusting its own uncertainty in advance. The process can help an AI model set its initial confidence to a low level close to chance and significantly reduce its overconfidence bias. In practical terms, the method teaches the model to begin from a state closer to “I don’t know anything yet”.
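A rough sketch of what such a warm-up stage might look like follows. The article does not say what targets the network is trained against during warm-up, so pairing each noise input with a uniformly random label is an assumption here, as are the linear softmax classifier standing in for the backbone, the function name `noise_warm_up`, and the step count and learning rate.

```python
import math
import random


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict(weights, x):
    """Linear softmax classifier, a stand-in for a real backbone."""
    return softmax([sum(w * xi for w, xi in zip(row, x)) for row in weights])


def mean_confidence(weights, inputs):
    """Average probability assigned to the top class."""
    return sum(max(predict(weights, x)) for x in inputs) / len(inputs)


def noise_warm_up(weights, n_steps, lr, rng):
    """SGD on cross-entropy using noise inputs and random labels (assumed)."""
    n_classes, n_inputs = len(weights), len(weights[0])
    for _ in range(n_steps):
        x = [rng.gauss(0.0, 1.0) for _ in range(n_inputs)]
        label = rng.randrange(n_classes)
        probs = predict(weights, x)
        for k in range(n_classes):
            # Gradient of cross-entropy with respect to logit k.
            grad = probs[k] - (1.0 if k == label else 0.0)
            row = weights[k]
            for j in range(n_inputs):
                row[j] -= lr * grad * x[j]


rng = random.Random(0)
n_inputs, n_classes = 20, 5  # hypothetical sizes
weights = [[rng.gauss(0.0, 1.0) for _ in range(n_inputs)]
           for _ in range(n_classes)]

# Fixed set of fresh noise inputs used to probe confidence before and after.
probe = [[rng.gauss(0.0, 1.0) for _ in range(n_inputs)] for _ in range(300)]

conf_before = mean_confidence(weights, probe)
noise_warm_up(weights, n_steps=6000, lr=0.02, rng=rng)
conf_after = mean_confidence(weights, probe)

print(f"chance level:      {1.0 / n_classes:.2f}")
print(f"before warm-up:    {conf_before:.2f}")
print(f"after warm-up:     {conf_after:.2f}")
```

Because the random labels carry no information about the inputs, the lowest-loss prediction is a uniform distribution over classes, which is why this kind of training pulls confidence down toward chance. Real task training would then start from the warmed-up weights.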

The researchers said models that used warm-up training were better able to lower their confidence and recognize what they do not know when facing data not encountered during training. They described this as a step toward helping AI distinguish between what it knows and what it does not know. Se-Bum Paik, an author of the study published in Nature Machine Intelligence, said the findings show that incorporating principles of brain development can help AI recognize its own knowledge state in a more human-like way. The aim, he said, is to improve its ability to identify uncertainty or possible mistakes rather than only to increase answer accuracy.