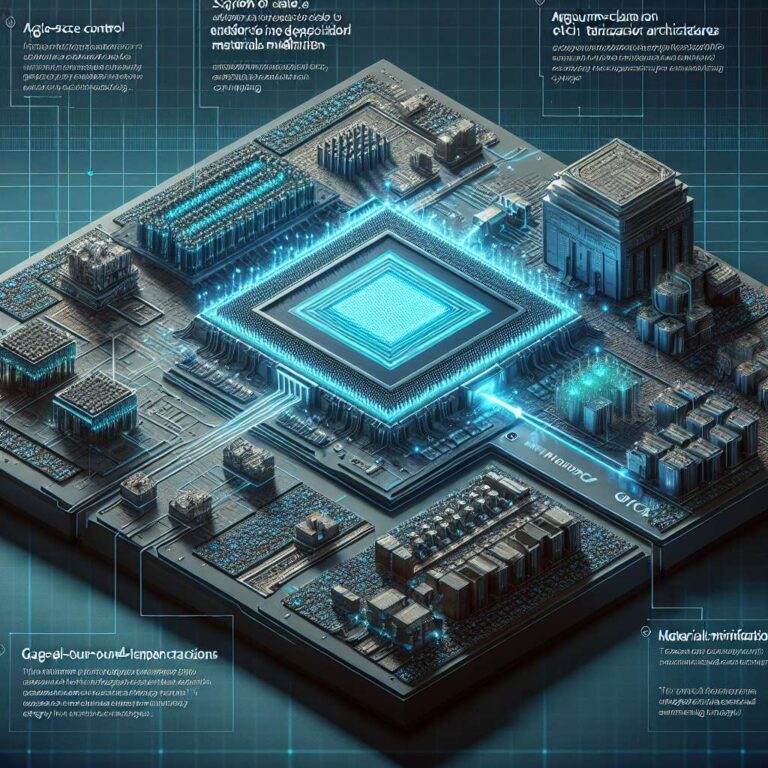

Applied Materials introduced new deposition, etch and materials modification systems that boost the performance of leading-edge logic chips at 2 nm and beyond. The technologies aim to accelerate Artificial Intelligence compute through atomic-scale improvements to the transistor. The focus is on refining this most fundamental electronic building block so that it delivers higher performance while maintaining tight control at extremely small geometries.

The company describes the transition to Gate-All-Around transistors as a major industry inflection and a critical enabler of the energy-efficient computing needed for more powerful Artificial Intelligence chips. As 2 nm-class Gate-All-Around chips ramp to volume production this year, Applied Materials is introducing material innovations tailored to next-generation Gate-All-Around transistors at angstrom nodes, keeping its process technology in step with the aggressive scaling roadmap.

According to the company, the new chipmaking systems together account for a significant portion of the total energy-efficient performance gains of Gate-All-Around process node transitions. By targeting both transistor structures and the associated materials processes, the systems are intended to improve logic chip capabilities for data-intensive workloads and to support the continued evolution of energy-efficient Artificial Intelligence computing.