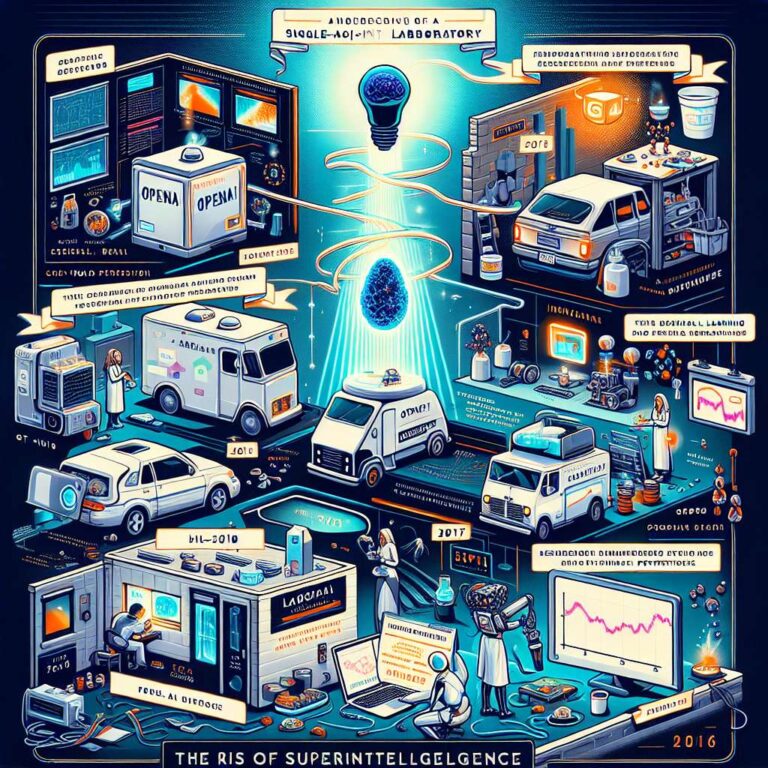

Sam Altman marks the tenth anniversary of OpenAI by reflecting on how an unlikely and “crazy” idea turned into a company that now believes it has a real chance of achieving its mission of ensuring artificial intelligence benefits all of humanity. He recalls that OpenAI announced its effort ten years ago, formally starting in early January 2016 with about 15 people who were young, optimistic, and intensely focused despite being widely misunderstood. From the beginning, the team built a culture that prioritized discovery, tackled obstacles one by one, and balanced deep learning research with real-world deployment, which helped shape a practical approach to making artificial intelligence systems safe and robust.

Altman highlights 2017 as a pivotal year, citing foundational results such as Dota 1v1 reinforcement learning breakthroughs, the unsupervised sentiment neuron that showed language models learning semantics, and reinforcement learning from human preferences that outlined an early path to aligning an artificial intelligence system with human values. Recognizing that these advances needed to be scaled with massive computational power, OpenAI kept pushing the technology forward, culminating in the launch of ChatGPT three years ago and then GPT‑4, which made artificial general intelligence feel like a serious prospect rather than a distant fantasy. He describes the last three years as intensely stressful, with the technology integrated into the world at a scale and speed that no previous technology has matched, forcing OpenAI to rapidly develop operational muscles and make hundreds of decisions a week as it grew from a small lab into a massive company.

One of the most consequential choices, according to Altman, was the controversial but ultimately influential strategy of iterative deployment: releasing early versions of powerful systems so that people could build intuitions and society and the technology could co-evolve. He writes that ten years into OpenAI, they have an artificial intelligence system that can do better than most of their smartest people at their most difficult intellectual competitions, and he believes that in ten more years they are almost certain to build superintelligence. Altman expects the future to feel strange, with daily life and core human concerns changing less than people might assume, even as the capabilities of people in 2035 become hard to imagine from today’s vantage point. He closes by expressing gratitude to users and customers who placed high-conviction bets on OpenAI’s early products, reiterating the mission to ensure that artificial general intelligence benefits all of humanity, and noting that while there is still a lot of work ahead, the team’s trajectory and the benefits already visible today make him deeply optimistic about what will emerge in the next few years.