Generative artificial intelligence has not yet produced the sweeping transformation of low-level cybercrime that many policymakers and security observers fear. Analysis of discussions across underground forums suggests that criminal communities are experimenting with chatbots, coding assistants, jailbreaks, and so-called dark artificial intelligence products, but most of these efforts have delivered limited practical value. Custom criminal models generated early interest, yet they were largely repackaged or jailbroken mainstream systems that could discuss illicit topics without reliably helping users commit cybercrime more effectively.

The study drew on the Cambridge Cybercrime Centre’s CrimeBB database, which stores more than 100 million messages from underground forums and channels used by cybercriminals. Covering the period from the release of ChatGPT in November 2022 to the end of 2025, the research found that many aspiring offenders lacked the technical ability to use generative artificial intelligence effectively for criminal purposes. More capable users did benefit from coding assistants, but often in routine software and administrative work rather than uniquely criminal tasks. Most cybercrime coding, administration, and logistics work is indistinguishable from its equivalent in a legitimate business, so generative artificial intelligence can be applied to it without adaptation. As a result, the underground appears to be adopting the same software engineering habits already spreading in legitimate workplaces, rather than inventing many new cybercrime-specific methods.

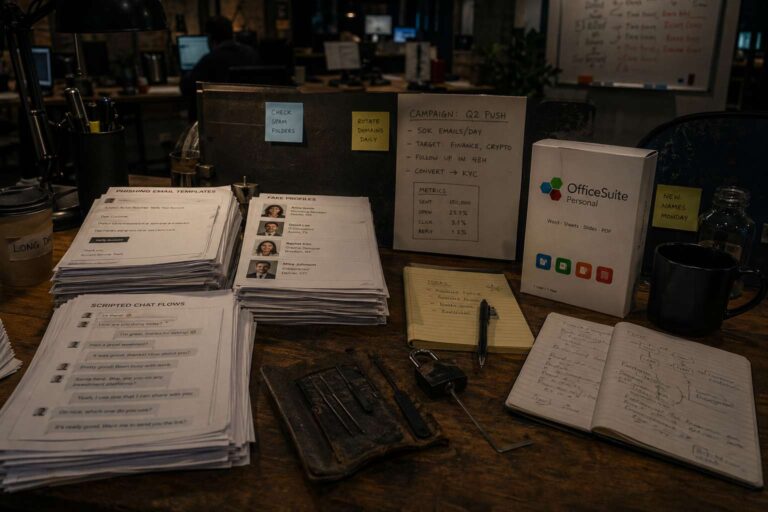

Automation remains the area with the clearest potential to change criminal operations. Forum discussions showed active use of generative artificial intelligence in romance fraud, online sexual encounters, image generation, and text, voice, and video chat. These tools helped scale initial outreach and content production, but substantial human involvement was still required, especially in longer interactions. Users also reported saturation effects as targets became more suspicious, had already been victimised, or got better at identifying generated content. The same scaling logic applies to spam sites, fake social media presences, and search manipulation, where large language models can produce more human-like content that is harder for defenders to detect.

A growing concern is how attackers are adapting to the wider artificial intelligence ecosystem. Criminal operators are learning that webpages and Reddit accounts they control are scraped by major chatbots, and they are adjusting that content to influence chatbot answers directly. At the same time, cultural resistance inside the underground is slowing adoption. Some users view chatbot-assisted hacking as cheating and fear deskilling, while others argue that real gains still depend on existing expertise. Overall, the findings point to two possible futures: a more autonomous and scalable cybercrime model, or a messier expansion of low-skill service work assisted by artificial intelligence, described as "vibercrime". For now, the research argues against panic. Guardrails do not stop determined attackers, but they still add enough friction to slow the spread of serious harm and leave time for stronger cybersecurity basics.