World Labs, the startup founded by computer vision pioneer Fei-Fei Li, has raised $1 billion from a high-profile group of backers focused on physical AI, including Nvidia, AMD, Autodesk, Andreessen Horowitz, Fidelity, and Emerson Collective. Autodesk invested $200 million and took a strategic advisory role. Bloomberg had previously reported that the round valued World Labs at approximately $5 billion, a figure the company declined to confirm. The company emerged from stealth in September 2024 with $230 million in funding and, in under 18 months, advanced from concept to commercial product to a reported $5 billion valuation, signaling where major investors believe the next wave of AI value will be created.

Li argues that current AI models excel at language but remain blind to physics: they cannot navigate a room, reason about gravity, or predict what happens when objects move. World Labs is building “large world models,” systems that perceive, generate, and interact with three-dimensional environments by predicting how objects and spaces behave in 3D rather than simply predicting the next word in a sentence. The company’s first product, Marble, launched in November 2025. It generates persistent, high-fidelity 3D environments from text prompts, images, or video, and lets users walk through, edit, expand, and export scenes as 3D meshes compatible with tools such as Unity, Unreal Engine, and Blender and with headsets such as Vision Pro and Quest 3. Early users reported that tasks that previously took weeks could now be completed in minutes, particularly for interior and realistic 3D styles, although illustration-style prompts and exterior spaces remain less reliable.

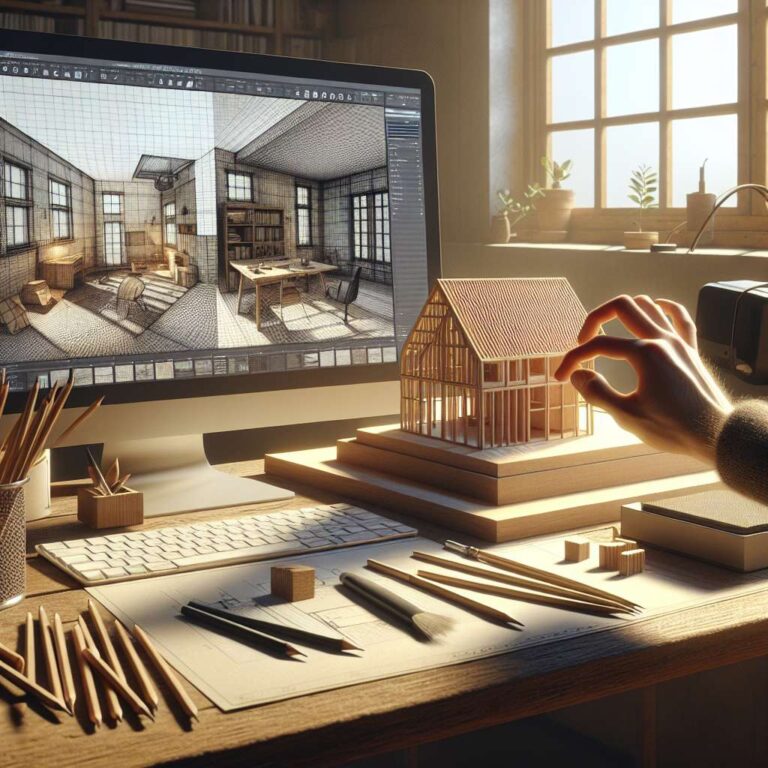

Marble includes a 3D editor called Chisel that separates structure from style, enabling users to sketch rough layouts and then apply visual details. Game developers can generate background environments in minutes instead of weeks, filmmakers can create virtual sets with precise camera control instead of relying on inconsistent AI video tools, and architects can test designs through simulated 3D walkthroughs before construction begins. Autodesk plans to start the partnership with entertainment use cases and to explore integration between Marble’s world models and its design software, alongside its own “neural CAD” generative AI trained on geometric data. Nvidia’s involvement connects World Labs to the existing infrastructure for physical AI, including Omniverse for industrial digital twins, Isaac Sim for robotic simulation, and Cosmos, a world foundation model for synthetic training data. World Labs and Nvidia are working on related but distinct layers: Nvidia provides compute, simulation physics, and the OpenUSD 3D framework, while World Labs supplies generative models that can create entirely new environments for robots to encounter without months of manual modeling.

For sectors such as construction, architecture, product design, gaming, visual effects, and manufacturing, the ability to generate realistic 3D environments from text could compress project timelines from weeks or months to minutes, altering project economics and workflows. Self-driving cars, delivery robots, warehouse automation, and surgical robots all need to understand 3D space in order to operate safely, which creates demand for large volumes of training data that today is expensive and slow to produce. If world models can generate realistic, physically accurate simulated environments at scale, the bottleneck shifts from data collection to compute, an area where Nvidia’s hardware is already central. World Labs is not publicly traded, but the $1 billion round, Autodesk’s strategic role, and Nvidia’s participation strengthen the view that physical AI, the capacity for machines to reason about and act in the real world, is emerging as the next major investment cycle after language models, with Nvidia positioned at the center through Omniverse, Isaac Sim, Cosmos, and its GPU platforms.