Stanford’s 2026 AI Index captures an industry that is moving quickly yet remains difficult to assess clearly. The report pairs signs of concentration and momentum with evidence of uneven capability. The US is pushing harder on AI infrastructure than any other country: it hosts 5,427 data centers (and counting), more than 10 times as many as any other country. The hardware stack also rests on a narrow manufacturing base. TSMC fabricates almost every leading AI chip, which leaves the global AI hardware supply chain dependent on one foundry in Taiwan.

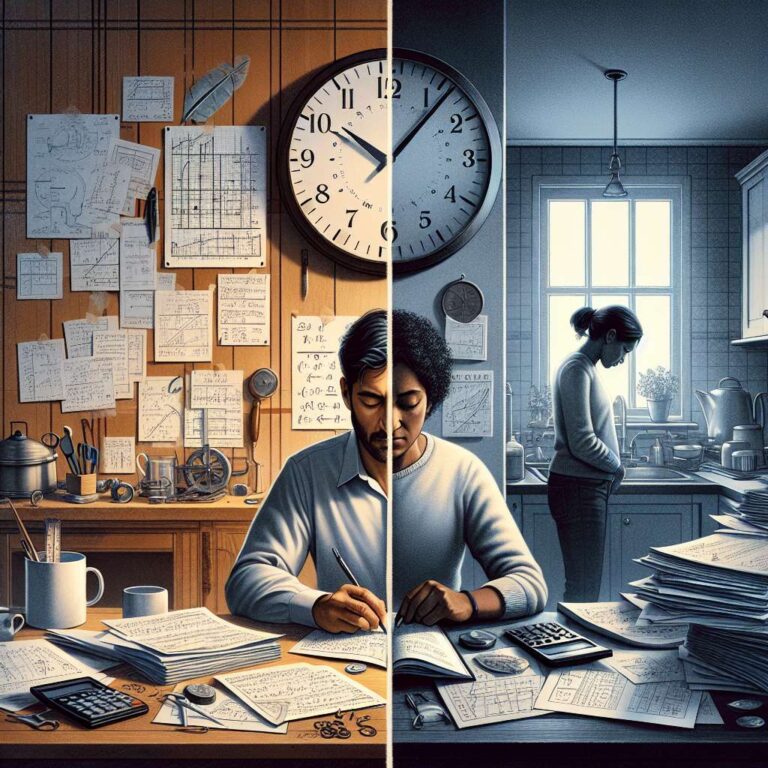

The strongest theme is contradiction. Systems can post elite results in some narrow domains while failing at tasks that appear much simpler. The Stanford report notes that Google DeepMind’s top reasoning model, Gemini Deep Think, won a gold medal at the International Mathematical Olympiad but is unable to read analog clocks half the time. That disconnect helps explain why opinion on AI feels so unstable: the technology is described at once as transformative, overhyped, a threat to jobs, and still prone to obvious mistakes.

A major divide runs between experts and the public. “AI experts and the general public view the technology’s trajectory very differently,” the authors of the AI Index write. “Assessing AI’s impact on jobs, 73% of U.S. experts are positive, compared with only 23% of the public, a 50 percentage point gap. Similar divides emerge with respect to the economy and medical care.” The report defines experts as US-based researchers who took part in AI conferences in 2023 and 2024.

One reason for that gap may be that different groups encounter very different versions of the technology. People using the newest tools for coding, math, or research see systems at their most capable, especially as model makers focus on technical tasks with clear right-or-wrong answers. Those products are also becoming commercially valuable, which drives further improvement. Outside those use cases, large language models remain unreliable and inconsistent, a pattern often described as the “jagged frontier.”

The result is that two people can talk about AI while effectively referring to different experiences. A power user who pays for top-tier tools and tracks the latest model releases may see dramatic progress, while someone relying on a free version for a more open-ended task may see little reason for confidence. AI is advancing faster and performing better than many people realize, but it also remains weak in many areas that matter to everyday users.