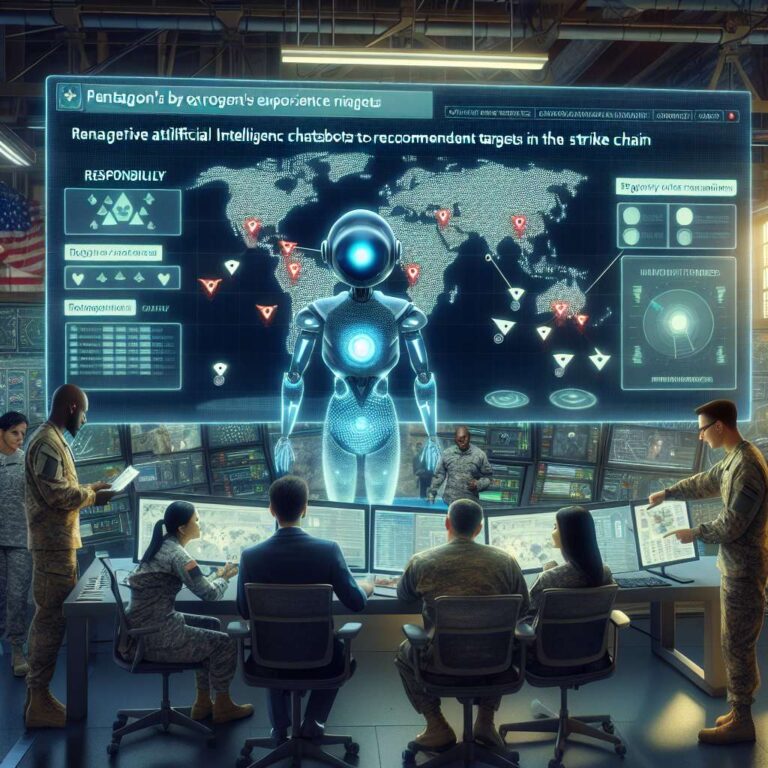

The US military is exploring the use of generative artificial intelligence systems as a new layer in its targeting process, with chatbots asked to rank lists of potential targets and recommend which to strike first. A Defense Department official described a scenario in which a list of possible targets is fed into a generative artificial intelligence system being deployed for classified environments, after which humans could ask the model to analyze the data and prioritize targets while considering variables such as aircraft positioning. Human operators would then remain responsible for checking and evaluating the system’s recommendations, and the official framed the description as an example of how such tools might be used rather than a confirmation of current practice.

The emerging chatbot capability builds on Project Maven, a long-running Pentagon program that uses older artificial intelligence techniques such as computer vision to process massive volumes of sensor data and imagery. Maven can take thousands of hours of aerial drone footage and algorithmically identify potential targets, which soldiers then select and vet through a map-based dashboard that visually distinguishes targets from friendly forces. The new generative artificial intelligence layer is intended to sit on top of systems like Maven as a conversational interface that helps users search and analyze data more quickly. It introduces different risks, however: large language models are less battle-tested, and their outputs are easier to obtain yet harder to verify than those of the map-driven interface, which forces direct inspection of the underlying data.

The push to integrate generative artificial intelligence comes as military use of artificial intelligence faces growing public scrutiny following a strike on a girls’ school in Iran in which more than 100 children died. Multiple outlets have linked the incident to a US missile, and the Pentagon says it remains under investigation. Reports have indicated that Anthropic’s Claude has been integrated into existing military artificial intelligence systems and used in operations in Iran and Venezuela. A preliminary inquiry into the school strike has pointed to outdated targeting data as a contributing factor, but it leaves unclear what role, if any, generative artificial intelligence systems played.

The Defense Department has expanded unclassified generative artificial intelligence access through the GenAI.mil program for tasks such as contract analysis and presentation drafting, while only a small set of models is cleared for classified use. Anthropic’s Claude was the first approved but is now at the center of a legal fight after the Pentagon labeled the company a supply chain risk and President Trump demanded that the government stop using its artificial intelligence products within six months. OpenAI and Elon Musk’s xAI have since reached agreements allowing their models, including ChatGPT and Grok, to be used in classified settings. OpenAI says its deal includes certain limitations, though their practical effectiveness remains uncertain.