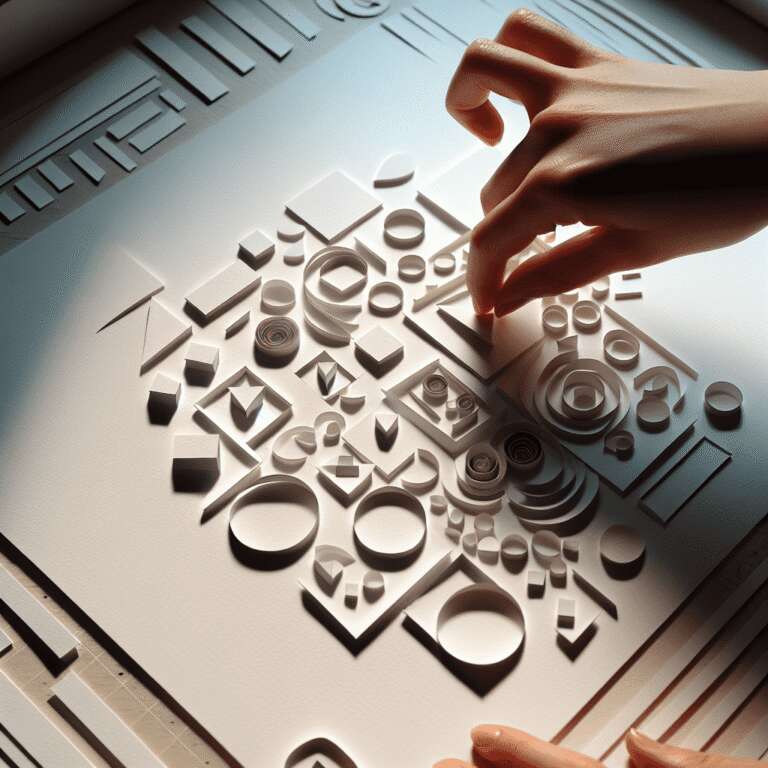

Recent advances in AI-powered image generation have been remarkable, with outputs evolving from early, error-prone results to today’s photorealistic visuals. Despite these improvements, users still have limited fine-grained creative control over the images they generate. Creating a scene from text has become simpler and models now follow prompts more closely, but specifying intricate composition details, camera angles, and precise object placement through text remains a challenge. Adjusting those elements in real time is harder still.

To address these hurdles, workflows built on ControlNets have emerged, giving creators finer control over elements of the output. However, these setups are generally technically complex, which puts them out of reach for many users. To make advanced AI art workflows accessible to general creators, NVIDIA announced its AI Blueprint for 3D-guided generative AI for RTX PCs at CES earlier this year. The Blueprint packages everything needed to implement 3D-guided, compositionally controlled image generation.

The NVIDIA AI Blueprint, now available for download, is a comprehensive sample workflow that lets users create controlled generative images without a steep learning curve. By streamlining advanced tools and techniques, NVIDIA aims to broaden access to next-generation AI creative capabilities, particularly for users of RTX-enabled hardware. The goal is to speed up creative work in digital art, design, and content production with state-of-the-art, user-friendly tools.