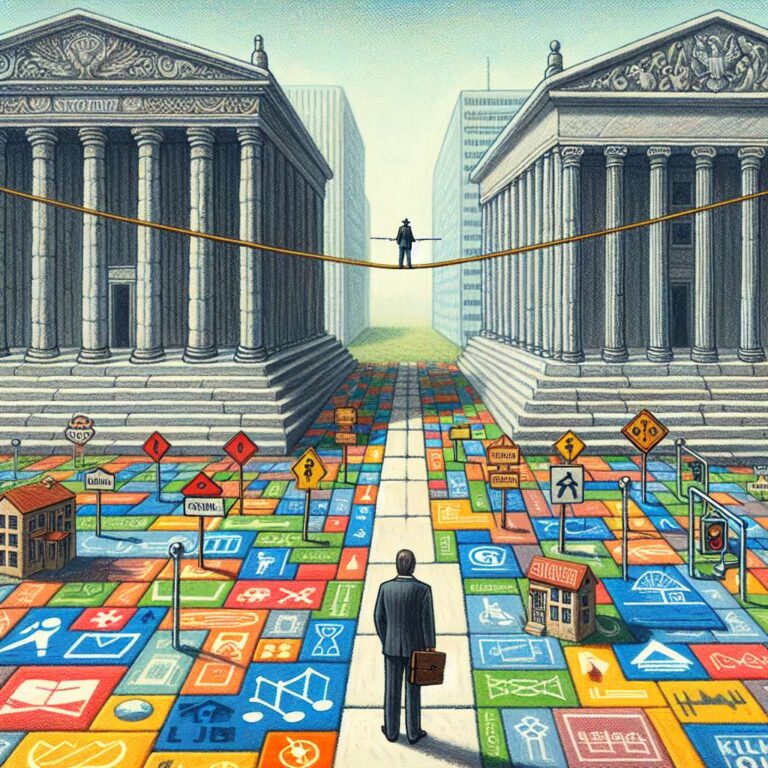

Wayne Cleghorn, a technology, data and artificial intelligence partner at Excello, questions whether the United Kingdom’s current pro-innovation regulatory framework for artificial intelligence is fit for purpose, particularly when compared with the European Union’s more prescriptive model. In an interview with Computer Weekly, he notes that the United Kingdom’s strategy depends on multiple regulators working together coherently, even though the country has no known and proven model for coordinating oversight of rapidly evolving technologies. He warns that this reliance on disparate regulators is effectively an experiment in an uncontrolled environment that may struggle to keep pace with the risks and complexities of modern artificial intelligence systems.

Cleghorn highlights the risk that the United Kingdom could become a regulatory rule-taker rather than a rule-maker, because “artificial intelligence developed in the US, EU and China will already embed those jurisdictions’ laws and standards”. He argues that some uses of artificial intelligence, such as automated decisions determining liberty or critical healthcare outcomes, require a statutory definition of what is unacceptable, rather than being left to voluntary principles or individual sector regulators. He presents leaving sensitive questions of liberty, healthcare, education, finance, law enforcement and the courts to market participants to “set and mark their own homework” as a direct threat to long-term public trust and engagement, especially where harms may only become visible over time.

In his additional commentary, Cleghorn calls for the United Kingdom to engage in real time with international discussions and to consider targeted mandatory obligations for artificial intelligence developers, including at least setting out in law what conduct, practices and uses of artificial intelligence are unlawful. He points to work already being done by international organisations, other governments, civil society, researchers and academics as a rich source of criteria for such rules. Drawing a parallel with the early hands-off approach to social media, which he says did not work, he notes that entrenched platforms have made after-the-fact regulation of issues like online child safety, disinformation and democratic participation far harder. Cleghorn also observes that it is still too early to know whether the EU Artificial Intelligence Act is over-regulating low-risk systems, because its mechanisms are not yet fully operational across the 27 EU member states, and he describes that regulation as an experiment that needs more time to assess fully. He concludes that the United Kingdom cannot credibly claim international leadership in artificial intelligence safety and governance without a national omnibus artificial intelligence law enforced by courts and tribunals, setting contractual standards and shaping commercial practice.