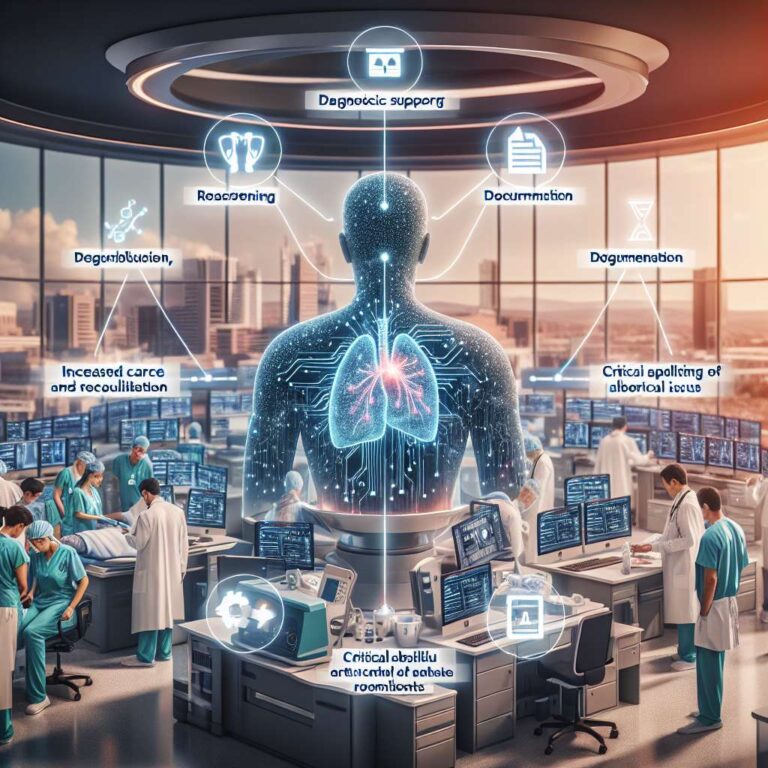

The article describes how the rapid evolution of generative artificial intelligence is poised to transform both medicine and medical education, with a particular focus on clinical practice in obstetrics and gynecology. The authors explain that large language models have begun to demonstrate capabilities in reasoning, diagnosis, documentation, and patient communication, and they emphasize that these abilities can rival or exceed those of clinicians in specific tasks. They position these tools as part of a broader shift toward technology-enabled care that could significantly alter how clinicians gather information, make decisions, and interact with patients.

In the context of training, the article argues that artificial intelligence is reshaping how students learn and how faculty teach by offering individualized, context-sensitive guidance at scale. The authors highlight that these systems can support learners with tailored explanations, real-time feedback, and simulated clinical scenarios, which can expand access to high-quality educational experiences. They also suggest that integrating artificial intelligence into curricula will require rethinking assessment, supervision, and the development of new competencies so that future clinicians can critically appraise and safely use these technologies.

The article outlines the current state of artificial intelligence integration in health care and examines how health systems can responsibly implement these tools to enhance patient care and education. As the field seeks to harness this transformative potential, the authors raise critical questions about ethics and safety, including issues of regulation, oversight, and the need to preserve human judgment and patient-centered care. They conclude that while generative artificial intelligence and large language models offer powerful opportunities for innovation, realizing their benefits will depend on deliberate design choices, rigorous evaluation, and close attention to equity, transparency, and professional responsibility.