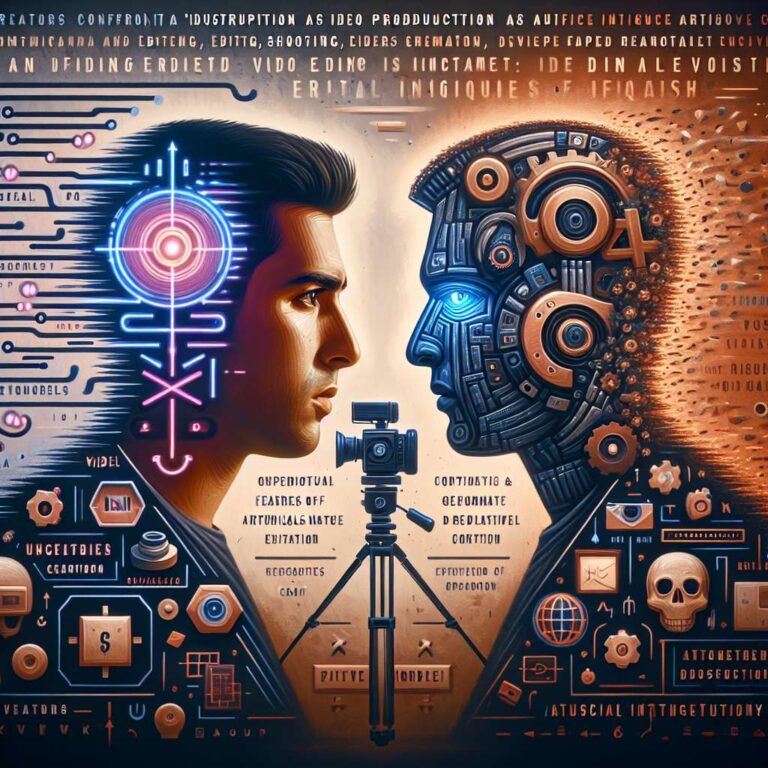

As Sora 2 sweeps social media feeds with striking, realistic video clips, a new question looms over the creator economy: what happens to influencers when anyone can conjure high-quality footage in seconds? For many, the rise of artificial intelligence is both opportunity and threat. Automation could help creators communicate faster and at larger scale, yet it also flattens hard-won advantages in shooting and editing. With barriers to production falling, the field may soon be crowded with slick content made by anyone with the right prompt, not just those who spent years mastering the craft.

A growing grassroots push is warning about the costs of this shift. Toronto artist Sam Yang has used his channels to argue that artists are “fed up” because their copyrighted work is being used to train artificial intelligence models without consent, exposing them to reputation damage, forgery and fraud. Model and activist Sinead Bovell, who has built sizable followings on Instagram and TikTok, has raised similar concerns in fashion and modeling circles. She cautions that audiences could normalize synthetic images and stop asking whether real human models are being compensated for the countless hours they spent honing the skills that now power the very engines competing for their livelihoods.

Those fears gained credence with an Atlantic investigation that found artificial intelligence models had been trained on at least a million how-to videos from popular influencers, spanning woodworking to beauty. The implication is stark: models built on those tutorials could help anyone replicate the look and know-how of established creators, potentially siphoning audience growth and income from the people who produced the original knowledge. To many influencers, this feels like a replay of earlier battles over unlicensed scraping and monetization, only now extended to the full audiovisual playbook.

Others see upside. Proponents argue that when video creation becomes push-button, the differentiators will be personality, relatability and style, which are precisely where human influencers excel. They also note that virtual influencers such as Aitana López from Barcelona’s The Clueless and Lil Miquela from Vancouver-based Dapper Labs have long coexisted with human creators, with their personas still guided by people. Artificial intelligence will likely increase the “slop factor” and intensify competition, but it could also push human influencers to lean further into the unique attributes only they can deliver.