The International Energy Agency (IEA) has released a report suggesting that artificial intelligence (AI) could one day cut greenhouse-gas emissions by more than the increase caused by the energy-intensive build-out of data centers. This projection mirrors optimistic claims by some in the AI sector, such as OpenAI's CEO Sam Altman, who predicted that AI would eventually help solve climate challenges.

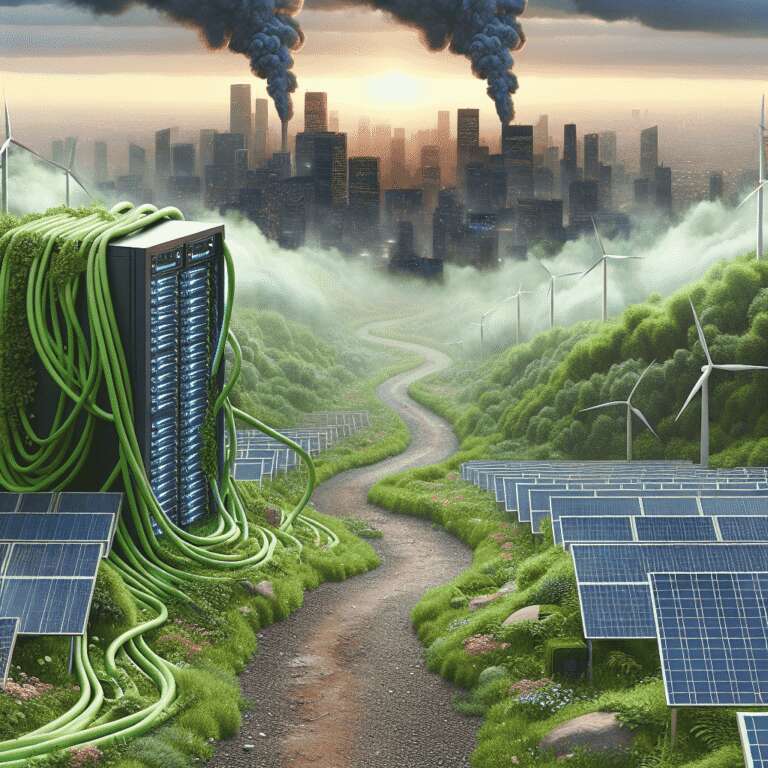

Although AI could eventually help decrease emissions, the industry's expansion is currently increasing energy consumption and emissions, particularly in regions with a high concentration of data centers. These facilities often rely on fossil fuels, spurring energy developers to propose more gas plants to meet demand. Cleaner options such as geothermal, nuclear, and renewable sources exist, but they often come with higher costs and longer development times.

The notion that AI's future benefits justify present emissions is reminiscent of carbon credit schemes, where emissions are offset by funding theoretical environmental benefits. However, such programs have often failed to deliver promised results. Similarly, the climate gains from AI are speculative and depend on future technological and policy developments that are still uncertain. Meanwhile, some companies are taking steps to integrate renewable energy into their operations, a necessity given current emission levels and climate change risks.