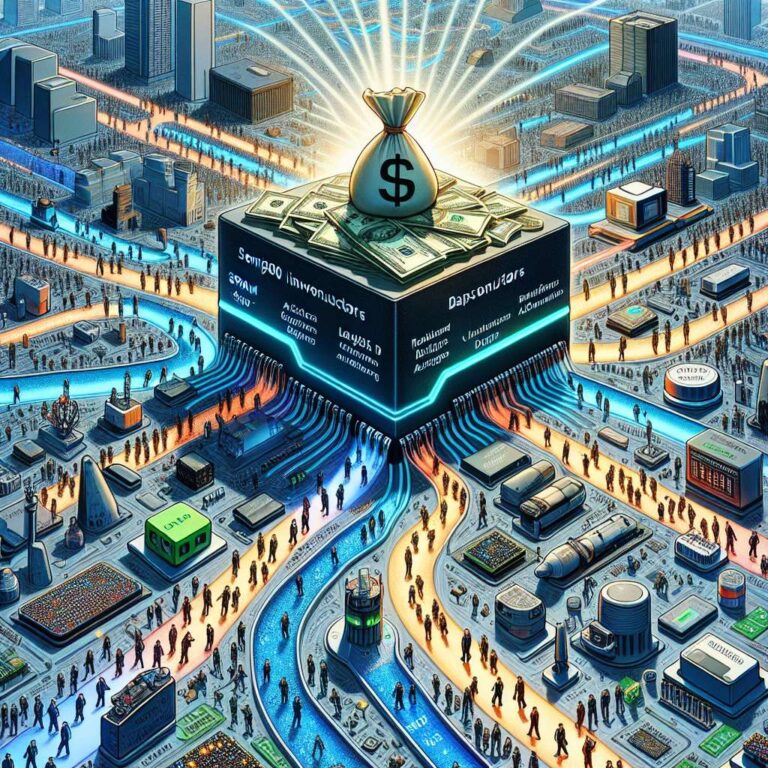

AI chip startups attracted a surge of venture funding on Tuesday, collectively securing more than $1.1 billion as investors continue to back challengers to Nvidia despite concerns about an AI bubble. MatX, founded in 2022 by former Google engineers Reiner Pope and Mike Gunter, took the largest share with $500 million in a Series B round led by Jane Street and Situational Awareness LP. The company plans to launch its first product, an LLM-optimized accelerator called MatX One, later this year. It is positioning the chip to handle the full spectrum of large language model workloads: pre-training, reinforcement learning, and both the prefill and decode phases of inference.

MatX is pursuing an architecture that leans heavily on SRAM to store model weights, arguing that it is far faster than the HBM used by AMD and Nvidia. The trade-off is density: SRAM is far less space efficient, and a modern die can hold only a few hundred megabytes while still leaving area for compute. The company claims its split systolic array design will deliver the highest “FLOPS per mm²” and scale to “hundreds of thousands of chips,” and it expects its first chip to deliver more than 2,000 tokens a second on a large, 100-layer mixture-of-experts model. To overcome SRAM’s density limits, MatX appears to be adopting a scale-out strategy similar to that of Cerebras and Groq. It is also integrating HBM to store the key-value caches that track model state across sessions, in an effort to combine GPU-class throughput with the latency benefits of SRAM-centric designs.
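To see why an SRAM-centric design pushes toward scale-out, a rough back-of-the-envelope sketch helps. The figures below are illustrative assumptions, not MatX specifications: the 300 MB of SRAM per chip is simply one reading of "a few hundred megabytes," and the model sizes are hypothetical. The sketch also ignores activations, KV caches, and any replication of weights.

```python
def chips_needed(params: int, bytes_per_param: int, sram_bytes_per_chip: int) -> int:
    """Minimum number of chips whose combined SRAM can hold the model weights.

    Counts weight storage only; activations and KV caches are ignored.
    """
    weight_bytes = params * bytes_per_param
    # Ceiling division with integers, so a partially filled chip still counts.
    return -(-weight_bytes // sram_bytes_per_chip)


# Assumed, illustrative values (not vendor figures).
SRAM_PER_CHIP = 300_000_000  # 300 MB, i.e. "a few hundred megabytes"

# Hypothetical 70-billion-parameter model stored at 2 bytes per weight.
small = chips_needed(70_000_000_000, 2, SRAM_PER_CHIP)

# Hypothetical 1-trillion-parameter model stored at 1 byte per weight.
large = chips_needed(1_000_000_000_000, 1, SRAM_PER_CHIP)

print(f"70B params @ 2 bytes: {small} chips")
print(f"1T params @ 1 byte:   {large} chips")
```

Under these assumptions, even a mid-sized model needs hundreds of chips just to keep its weights in SRAM, and a trillion-parameter model needs thousands, which is consistent with the emphasis on scaling to very large chip counts.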

In Europe, Dutch startup Axelera raised $250 million in a round led by Innovation Industries to advance its low-power, RISC-V-based AI accelerators. The chips target power-constrained edge applications such as computer vision and robotics, with a roadmap that scales the architecture from the edge to the datacenter. Axelera’s latest chip, Europa, boasts up to 629 TOPS of INT8 performance, fed by 64GB of DRAM good for 200 GB/s of bandwidth. In terms of compute, that puts it on par with an Nvidia A100 while drawing 45 watts, less than a sixth of the power. It still trails that nearly six-year-old GPU in memory, however: the A100 offers 80GB of HBM2E and 2 TB/s of bandwidth.

SambaNova also secured a $350 million investment from Vista Equity, Cambium Capital and Intel’s investment arm to commercialize its next-generation dataflow accelerators. The funding comes alongside a multi-year collaboration that will see Intel Xeon processors integrated into SambaNova’s AI servers. The company also disclosed a new AI accelerator, the SN50, which SoftBank plans to deploy in its Japanese datacenters starting later this year.
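The Europa and A100 comparison above can be made concrete with a little arithmetic. The sketch below uses the Europa figures quoted in the article; the A100 entry is partly assumed. Its INT8 throughput is set equal to Europa's to reflect the "on par" claim, and its 300 W power draw is a placeholder chosen to be consistent with "less than a sixth," not a quoted specification.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    name: str
    int8_tops: float          # peak INT8 throughput, in TOPS
    mem_bandwidth_gbs: float  # memory bandwidth, in GB/s
    power_watts: float        # power draw, in watts

    def tops_per_watt(self) -> float:
        """Peak INT8 throughput per watt."""
        return self.int8_tops / self.power_watts

    def ops_per_byte(self) -> float:
        """Peak operations available per byte of memory traffic.

        A higher value means the chip needs more data reuse to stay busy.
        """
        return (self.int8_tops * 1e12) / (self.mem_bandwidth_gbs * 1e9)


# Figures as quoted in the article.
europa = Accelerator("Axelera Europa", int8_tops=629, mem_bandwidth_gbs=200, power_watts=45)

# ASSUMPTIONS: compute set equal to Europa ("on par"); 300 W is a placeholder.
a100 = Accelerator("Nvidia A100 (assumed)", int8_tops=629, mem_bandwidth_gbs=2000, power_watts=300)

for chip in (europa, a100):
    print(f"{chip.name}: {chip.tops_per_watt():.2f} TOPS/W, "
          f"{chip.ops_per_byte():.0f} ops per byte")
```

On these numbers Europa comes out well ahead on compute per watt, but it has roughly ten times as many operations to feed per byte of memory bandwidth. That makes it a better fit for compute-dense workloads such as vision models than for bandwidth-bound ones such as LLM decode.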