The ARC Prize Foundation analyzed 160 replays and reasoning traces from OpenAI’s GPT-5.5 and Anthropic’s Opus 4.7 on the ARC-AGI-3 benchmark, an interactive test released in late March 2026. The benchmark requires AI agents to explore turn-based game environments, form hypotheses, and execute action plans without instructions. Every frontier model tested so far has scored below 1 percent, while humans solved the same tasks with no prior knowledge. GPT-5.5 scored 0.43 percent at a cost of around ?,000, while Opus 4.7 managed just 0.18 percent.

The most common failure pattern is that models notice local effects but fail to assemble them into a coherent world model. In one example, Opus 4.7 recognized by step 4 that ACTION3 rotates a container and by step 6 that ACTION5 pours paint, but it never combined those observations into the correct strategy. A similar breakdown appeared in cn04, where Opus found the right rotate-then-place interaction at step 23 but then optimized for the wrong target and began tracking a nonexistent progress bar. The result is detailed perception without a workable understanding of the broader game mechanics.

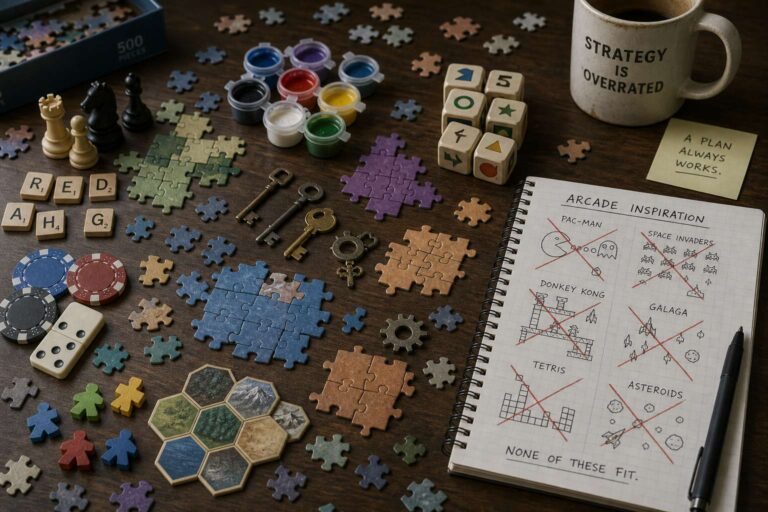

A second failure pattern comes from false analogies to familiar games in training data. Across multiple runs, the models misread unknown environments as Tetris, Frogger, Sokoban, Breakout, Pong, or Boulder Dash, then pursued the wrong mechanics. GPT-5.5 interpreted the ls20 environment as Breakout even though the task centered on key combinations.

The third pattern appears when a model solves a level without understanding why the strategy worked. In ka59, Opus solved level 1 in 37 actions based on a false teleportation theory, then carried that misconception into level 2. In ar25, it found the right mirrored-motion insight but later buried it under hallucinated rules.

The comparison between the two systems suggests different but related weaknesses. Opus 4.7 often identifies mechanics early, but it tends to lock into confident and incorrect theories. GPT-5.5 generates a broader set of hypotheses, making it more likely to touch on the right idea, but it struggles to commit to a plan and follow through. The ARC Prize Foundation argues that these failures matter beyond benchmarks because the same demands appear in unfamiliar websites, internal tools, and undocumented APIs. Each of the 135 environments was solved by at least two humans without any special training, which underscores the gap between the models' benchmark scores and generalizable reasoning.