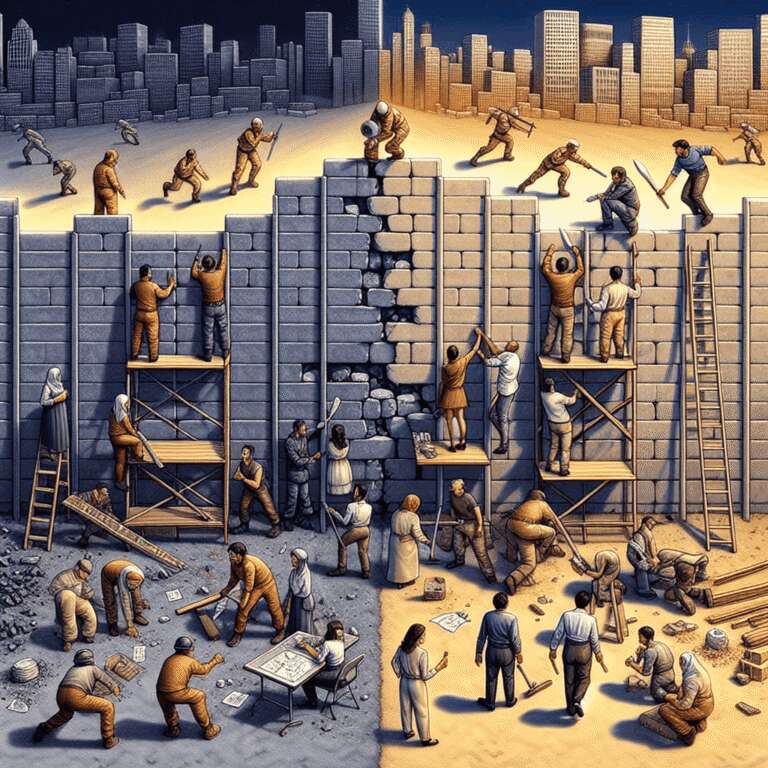

Publicly disclosing software vulnerabilities has long required a balance: vendors must inform customers about security flaws and their severity, but the same details give attackers a starting point for crafting exploits. Threat actors and defenders have always raced to exploit or patch vulnerabilities, yet effective patching routines and threat detection have historically given organizations a fighting chance to respond before malicious actors succeed.

Recent advances in artificial intelligence (AI) are transforming this landscape. The rise of open, resource-efficient, locally runnable generative models such as DeepSeek has made sophisticated language models more widely accessible. Unlike commercial cloud-hosted models, which include safety guardrails, these open models can be downloaded and customized for malicious purposes. By incorporating data from malware research and underground forums, threat actors can fine-tune them into specialized platforms, sometimes offered as subscription services, that drastically accelerate the automated creation of malware and exploits for newly disclosed vulnerabilities.

Evidence of these risks is already present. Since 2023, tools such as FraudGPT and WolfGPT have offered capabilities for generating malicious payloads. In April 2024, researchers showed that an AI agent powered by GPT-4 could autonomously exploit recently disclosed vulnerabilities. This shift means the traditional 24-48 hour patching window is collapsing, with adversaries now potentially able to develop and launch exploits within minutes of disclosure.

Defenders cannot match this speed manually, but deploying agentic AI to automate vulnerability response offers a potential countermeasure. These developments are shifting the focus of cybersecurity from sophistication to speed and volume, which makes it imperative for defenders to adopt similar automation. As the threat landscape becomes faster and more volatile, the parallel evolution of attacker and defender tools ensures that AI will remain at the heart of this new cybersecurity arms race.