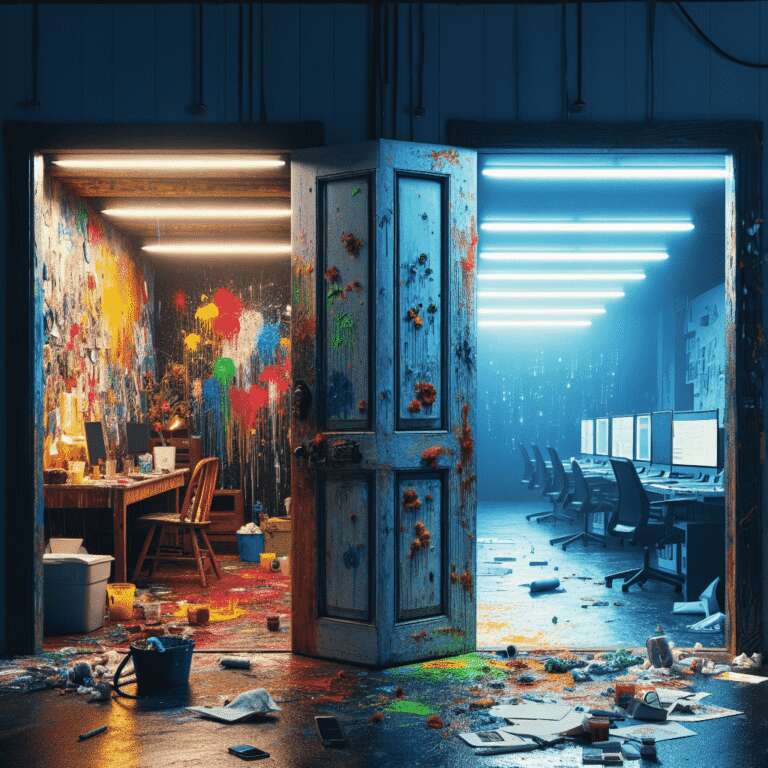

Despite the explosive growth of Artificial Intelligence since the launch of ChatGPT in late 2022, not everyone is embracing it. Sabine Zetteler, who heads a communications agency in London, remains unconvinced of its merits. She emphasizes the importance of genuine human creation, questions the value of content or music generated by algorithms, and expresses concern about the social costs, such as job losses in her own business. Zetteler rejects the idea of using technology to increase profit at the expense of joy, creativity, and societal contribution, arguing that the personal and communal rewards of human-driven work are irreplaceable.

Environmental impact is another key reason for resisting Artificial Intelligence, as highlighted by Florence Achery, a London-based yoga retreat owner. Achery describes the technology as 'soulless' and fundamentally at odds with her business's focus on human connection. She also cites reports that Artificial Intelligence systems require dramatically more energy than traditional search engines: one ChatGPT query can use up to ten times more electricity than a Google search, according to a Goldman Sachs estimate. Achery stresses that most users are unaware of this environmental footprint, and finds it difficult to reconcile tech-driven efficiency with broader costs to society and the planet.

Concerns extend beyond the social and environmental realms. Sierra Hansen, a public affairs professional in Seattle, points to the risks Artificial Intelligence poses to human critical thinking and problem-solving skills. She argues that delegating everyday planning or creative tasks, such as making a music playlist, to a chatbot erodes essential mental faculties. By contrast, some reluctant users, such as 'Jackie Adams' in digital marketing, have ultimately adopted Artificial Intelligence because of workplace pressure and the risk of being left behind, even though their original objections centered on environmental and ethical grounds. According to James Brusseau, a professor of Artificial Intelligence ethics, society has reached a point where opting out is increasingly difficult. Artificial Intelligence is set to take over more routine or technical roles, but human involvement will remain crucial where accountability or nuanced judgment is essential. Ultimately, even those resistant to Artificial Intelligence now find themselves caught in its growing presence across digital platforms and professional life.