Supermicro has announced that it will support the upcoming NVIDIA Vera Rubin and Rubin platforms with an expanded focus on manufacturing capacity and advanced liquid cooling. The company is collaborating with NVIDIA to target first-to-market delivery of data-center-scale systems optimized for both platforms. Supermicro positions this move as part of its strategy to address the rapid growth in artificial intelligence, cloud, storage, and 5G/edge workloads with tightly integrated infrastructure offerings.

According to the announcement, Supermicro will leverage its accelerated development cycles and long-running collaboration with NVIDIA to rapidly deploy the flagship NVIDIA Vera Rubin NVL72 and NVIDIA HGX Rubin NVL8 systems. The company highlights its Data Center Building Block Solutions (DCBBS) approach as a core differentiator, stating that this modular design methodology enables streamlined production, broad customization options, and faster time to deployment for customers building next-generation artificial intelligence infrastructure. The focus on flexibility is intended to serve both hyperscale data centers and large enterprises that require tailored configurations.

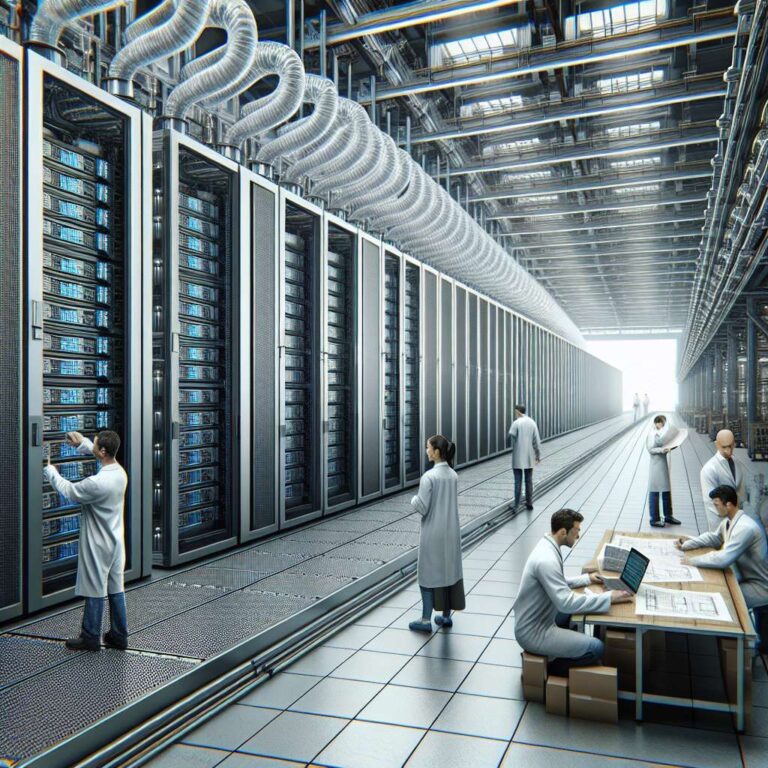

Supermicro president and chief executive officer Charles Liang emphasized that the vendor’s agile building block strategy and extended partnership with NVIDIA allow it to bring advanced artificial intelligence platforms to market more quickly than competitors. He stated that expanded manufacturing capacity, combined with what the company describes as industry-leading liquid cooling expertise, is meant to help hyperscalers and enterprises roll out infrastructure based on the NVIDIA Vera Rubin and Rubin platforms at scale with improved speed, efficiency, and reliability. The announcement frames these capabilities as a way for customers to gain a competitive edge as they deploy new services driven by artificial intelligence.