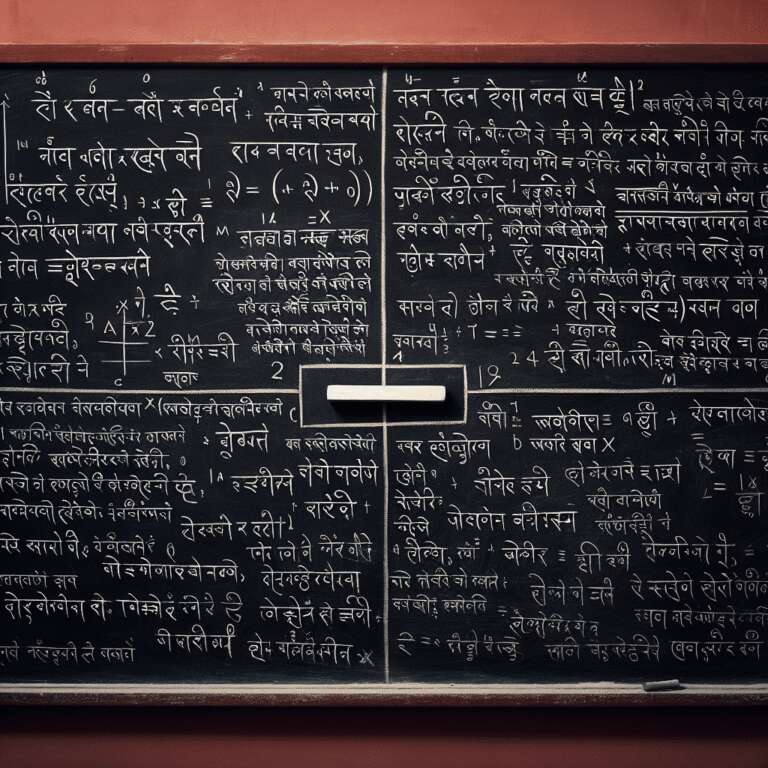

Bengaluru-based Sarvam AI, a start-up selected under the Indian government’s IndiaAI Mission, has introduced its flagship Large Language Model (LLM), Sarvam-M. The new model is a 24-billion-parameter, multilingual, hybrid-reasoning, text-only model built on Mistral Small, an open-weight model from French company Mistral AI. Sarvam-M is designed for a range of applications, including conversational agents, translation tools, and educational services, reflecting the company’s ambition to support India’s linguistic and technological landscape.

According to the company, Sarvam-M improves on its base model in Indian languages, mathematical reasoning, and programming. Sarvam AI reports a 20% average improvement over the base model on Indian language tasks, a 21.6% improvement on math problems, and a 17.6% improvement on coding benchmarks. Particularly striking is an 86% improvement on the romanized Indian language version of the GSM-8K math benchmark, which tests linguistic and logical reasoning together. This release follows the firm’s earlier rollout of Bulbul, a speech model supporting 11 Indian languages and authentic accents.
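The percentage gains above are easiest to read as relative improvements over the base model's score, rather than absolute gains in benchmark points. The minimal Python sketch below shows that calculation under this assumption. The function name and the scores are hypothetical and chosen only for illustration; they are not figures from Sarvam AI's report.

```python
def relative_improvement(base_score: float, new_score: float) -> float:
    """Return the percent change of new_score relative to base_score."""
    if base_score <= 0:
        raise ValueError("base_score must be positive")
    return 100.0 * (new_score - base_score) / base_score


# Hypothetical benchmark scores (not taken from the company's report).
base_score = 50.0
new_score = 60.0

gain = relative_improvement(base_score, new_score)
print(f"Absolute gain: {new_score - base_score:.1f} points")
print(f"Relative improvement: {gain:.1f}%")
```

Under this reading, a 10-point absolute gain on a base score of 50 is a 20% relative improvement, so a large relative figure can correspond to a modest absolute gain when the base score is low.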

In comparative evaluations, Sarvam-M is said to outperform Meta’s LLaMA-4 Scout and to be competitive with larger models such as LLaMA-3.3 70B and Google’s Gemma 3 27B, even though those models are trained on significantly more data. The model does score slightly lower on English knowledge benchmarks, about 1% below its baseline. Sarvam-M is available as open source on Hugging Face, with API access for developers, which supports adaptation and research. It offers two operating modes: “think” mode for tasks requiring complex logic and computation, and “non-think” mode for efficient, general-purpose text generation and conversation. Sarvam-M is post-trained on Indian languages alongside English to improve Indic cultural representation, and it supports both native Indic scripts and romanized forms.