OpenAI has re-entered the open-model arena with its first open-weight large language models since GPT-2 in 2019. Unlike OpenAI's usual models, which are accessed through the web, these can be freely downloaded, modified, and run on local devices such as laptops. Developers and hobbyists can now experiment with, adapt, and deploy the models without the constraints of a closed ecosystem.

The timing matters. Meta had long dominated the American open-source AI scene with its Llama models, but it now appears to be shifting toward more closed releases, while Chinese open-weight models have been gaining traction and challenging the US-centric landscape. Against that backdrop, OpenAI's release signals renewed competition among American players and gives the broader AI community fresh opportunities to innovate, making the decision both bold and strategic.

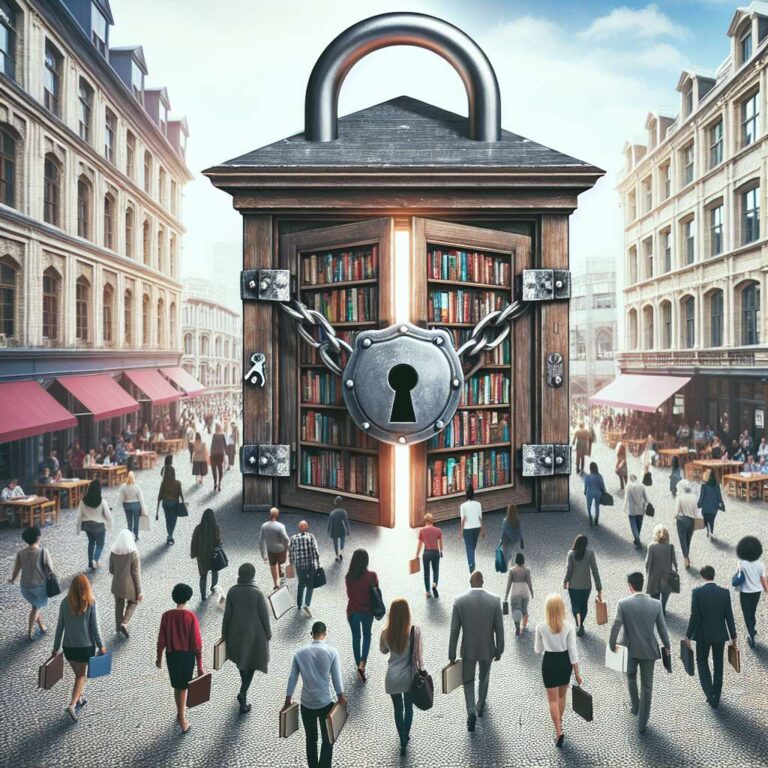

Meanwhile, the rise of generative AI is fundamentally transforming internet search. Traditional keyword search is giving way to conversational interfaces that, instead of merely returning links, provide answers synthesized by large language models from current web data. Publishers worry about how this will change the traffic that reaches their sites, and broader questions arise around trust, information provenance, and the social impact of machine-generated content on a shared sense of reality. The ripple effects extend well beyond search, reshaping internet business models, competition, and the information ecosystem as a whole.