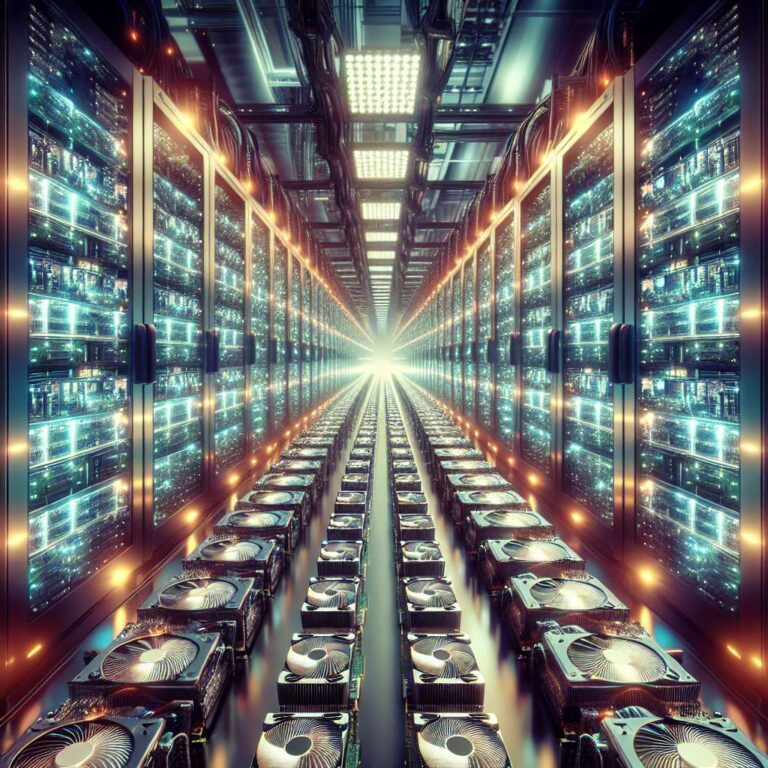

Nvidia and Meta are moving to significantly expand their deployment of graphics processing units to run artificial intelligence workloads by ordering millions of additional chips. The strategy underscores how large technology companies are prioritizing accelerated computing over traditional CPU-centric architectures to handle rapidly growing artificial intelligence models and services. As graphics processors become the core of new data center builds, the balance of power in the server component market is starting to shift.

The expansion plan highlights the scale at which hyperscale and consumer internet companies are now investing in dedicated artificial intelligence infrastructure. By committing to millions of additional graphics chips, Nvidia and Meta are signaling long-term expectations for continued growth in artificial intelligence training and inference across products, advertising systems, and recommendation engines. These large, multi-year chip orders also help secure supply in a constrained high-performance semiconductor market.

The pivot toward graphics-processor-based systems could prove problematic for Intel and AMD, which have dominated the server CPU market for decades. If new deployments increasingly favor configurations centered on accelerators instead of general-purpose processors, traditional CPU vendors risk losing share in the most valuable segments of the data center market. The trend illustrates how artificial intelligence is not only transforming software and services but also reshaping the underlying hardware economics of cloud and enterprise computing.