Researchers affiliated with a leading Silicon Valley technology company have introduced a new framework for making enterprise workflows more readable and actionable for large language models in customer support environments. Presented at the 31st International Conference on Computational Linguistics (COLING 2025) in a study titled “LLM-Friendly Knowledge Representation for Customer Support,” the work focuses on restructuring complex operational processes so that automated support systems can interpret them more effectively. The framework is designed to improve scalability, interpretability, and decision quality across intricate operational settings by translating business logic into machine-optimized formats.

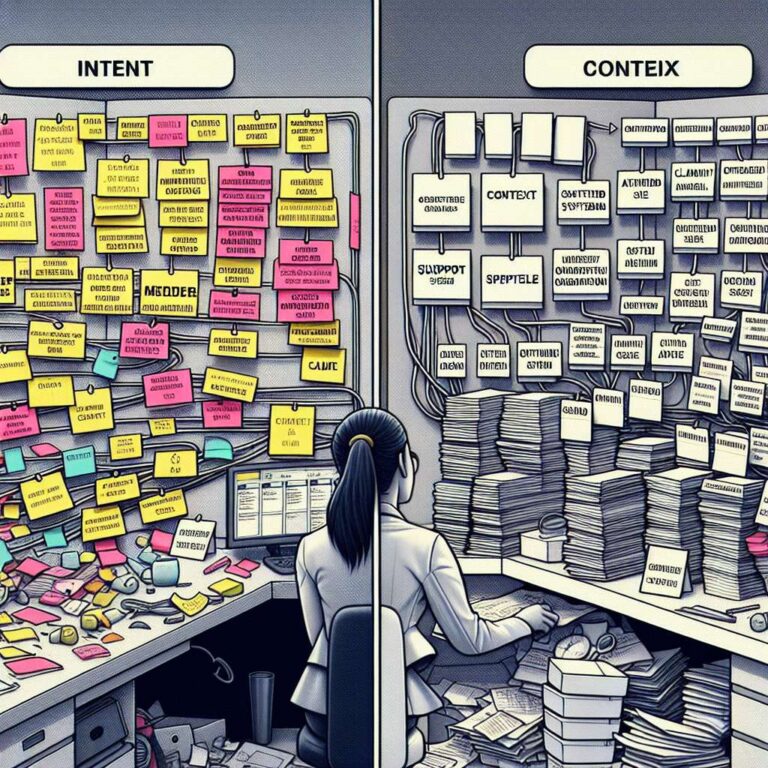

The central innovation in the study is the Intent, Context, and Action (ICA) format, a pseudocode-style representation that recasts operational workflows into a structure optimized for large language model comprehension. Experiments reported in the paper show that ICA improves model interpretability and enables more accurate action predictions, with up to a 25 percent gain in accuracy and a 13 percent reduction in manual processing time. The authors report that the ICA methodology establishes a new benchmark for customer support applications and can be extended to other knowledge-intensive sectors such as legal and finance. The study concludes that by reformulating operational knowledge into ICA, organizations can embed structured reasoning in automated systems while keeping the way decisions are made transparent.
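To give a rough sense of what a pseudocode-style Intent, Context, and Action representation might look like, the sketch below encodes a small refund workflow as rules and renders them as text a language model could read. The workflow, the class name `IcaRule`, the functions `render_ica` and `select_action`, and every intent, field, and action name are hypothetical illustrations, not the notation or tooling used in the paper.

```python
from dataclasses import dataclass


@dataclass
class IcaRule:
    """One workflow branch: an intent, its context conditions, and an action."""
    intent: str
    context: dict[str, str]  # context field -> required value (hypothetical fields)
    action: str


def render_ica(rules: list[IcaRule]) -> str:
    """Render rules as pseudocode-style text that could be placed in a prompt."""
    lines = []
    for rule in rules:
        parts = [f"intent == {rule.intent!r}"]
        parts += [f"{field} == {value!r}" for field, value in rule.context.items()]
        lines.append(f"if {' and '.join(parts)}:")
        lines.append(f"    action = {rule.action!r}")
    return "\n".join(lines)


def select_action(rules: list[IcaRule], intent: str, context: dict[str, str]):
    """Return the action of the first rule whose intent and context all match."""
    for rule in rules:
        if rule.intent == intent and all(
            context.get(field) == value for field, value in rule.context.items()
        ):
            return rule.action
    return None  # no rule applies; a real system might escalate to a human


# Hypothetical refund workflow, invented for illustration only.
RULES = [
    IcaRule("request_refund", {"order_status": "delivered"}, "open_refund_case"),
    IcaRule("request_refund", {"order_status": "in_transit"}, "offer_cancellation"),
]

print(render_ica(RULES))
```

Keeping the rules as data and rendering them to text means the same source can feed both a prompt and a deterministic check such as `select_action`, which is one way to keep the decision path inspectable.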

To address the shortage of suitable training data, the researchers also propose a synthetic data generation pipeline that minimizes the need for manual labeling. The method constructs training instances by simulating user queries, contextual conditions, and decision-tree structures so that models can learn reasoning patterns that reflect real-world support workflows. According to the experiments, this approach reduces training costs and allows smaller open-source models to approach the performance and latency of larger systems, which the authors describe as a meaningful step toward scalable enterprise artificial intelligence development. Among the contributors is Hanchen Su, a staff machine learning engineer with experience in natural language processing and statistical learning. His background spans intelligent customer service, pricing strategy, recommendation systems, and large-scale data processing across multiple technology companies and entrepreneurial projects.
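The synthetic data idea described above, combining simulated queries, contextual conditions, and decision-tree structures into labeled training instances, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the authors' pipeline: the tree `TREE`, the templates in `QUERY_TEMPLATES`, the order IDs, and the helper names `enumerate_paths` and `generate_examples` are all invented here.

```python
from itertools import product

# Hypothetical decision tree: internal nodes test one context field,
# leaves (plain strings) are action labels.
TREE = {
    "field": "order_status",
    "branches": {
        "delivered": {
            "field": "days_since_delivery",
            "branches": {
                "under_30": "issue_refund",
                "over_30": "escalate_to_agent",
            },
        },
        "in_transit": "offer_cancellation",
    },
}

# Hypothetical query templates standing in for simulated user queries.
QUERY_TEMPLATES = [
    "I want my money back for order {order_id}.",
    "Can I get a refund on {order_id}?",
]


def enumerate_paths(node, context=None):
    """Yield (context, action) for every root-to-leaf path in the tree."""
    context = context or {}
    if isinstance(node, str):  # leaf: the action label
        yield dict(context), node
        return
    for value, child in node["branches"].items():
        yield from enumerate_paths(child, {**context, node["field"]: value})


def generate_examples(tree, templates, order_ids):
    """Cross every tree path with every query template and order ID."""
    paths = list(enumerate_paths(tree))
    return [
        {
            "query": template.format(order_id=order_id),
            "context": dict(context),
            "label": action,
        }
        for (context, action), template, order_id in product(paths, templates, order_ids)
    ]


EXAMPLES = generate_examples(TREE, QUERY_TEMPLATES, ["A100", "B200"])
print(len(EXAMPLES), EXAMPLES[0])
```

Because each label is read directly off a tree leaf, no example needs manual annotation, which is the property the article attributes to the approach. A realistic pipeline would likely use far more varied queries, possibly generated by a language model.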