Generative Artificial Intelligence is rapidly driving media toward what the author calls an environment of infinite, fluid, personalized, and synthetic content, and this shift is expected to transform culture and the social fabric over the coming decade and beyond. Enthusiasm about new creative possibilities coexists with deep wariness about the costs, particularly as debates over Artificial Intelligence increasingly resemble polarized arguments over politics or religion. The vision is not framed in absolute utopian or doomer terms, but as a complex, path-dependent transition in which incentives, regulations, consumer preferences, and social norms shape outcomes, and in which social systems can eventually self-correct, although often only after prolonged periods of dysfunction.

The concept of “fluid” content rests on high-dimensional multimodal latent spaces that allow any story or asset to be expressed across formats, platforms, and modalities. A single narrative could become a book, a movie, a serialized vertical video, a game, an album, or a podcast, or be re-cut and formatted for YouTube, TikTok, Twitter, LinkedIn, and Instagram, with plot elements, structure, characters, style, tone, modality, format, state, and provenance all becoming malleable. When content can be produced in real time and becomes contextual, emergent, and interactive, provenance becomes fluid as lines blur between creator and consumer, and state becomes fluid as lines blur between finished and unfinished work. Personalization then follows from this fluidity, as people or their agents can tailor any work to their context, need state, preferences, or constraints such as “I only have 12 minutes,” even though many will often still opt for canonical versions.

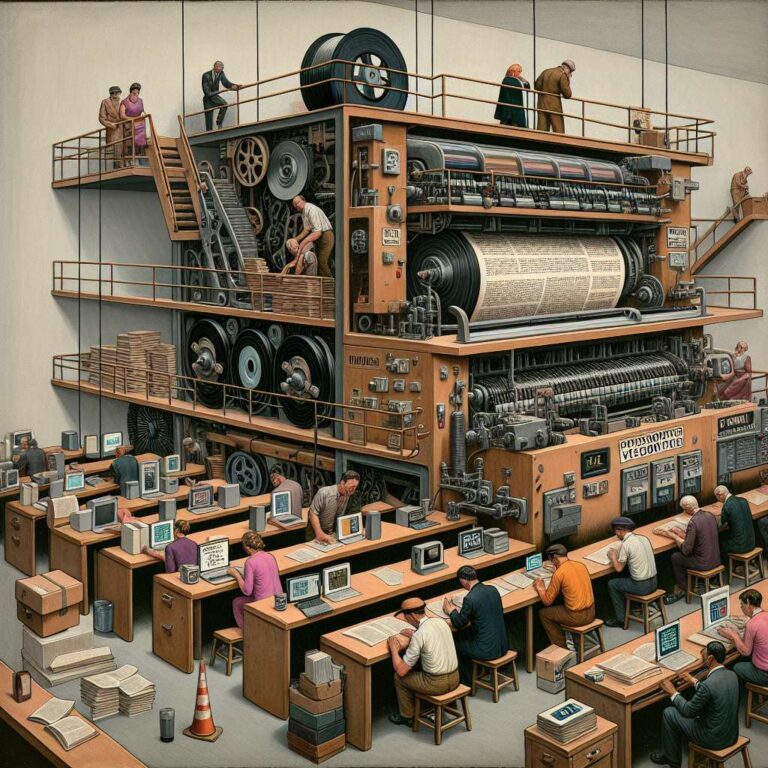

The “synthetic” dimension denotes a continuum from productions that are “entirely” human, albeit often assisted by Artificial Intelligence in ideation or editing, through hybrid approaches with human oversight of Artificial Intelligence-driven decisions, to fully synthetic works generated at the speed of computation. As generative Artificial Intelligence capabilities improve, more synthetic elements are likely to be incorporated into all forms of production, and the share of wholly synthetic content is expected to rise, yielding an effectively infinite supply of options.

Media theory provides a lens for understanding why this matters: thinkers such as Harold Innis, Marshall McLuhan, Walter Ong, and Neil Postman argued that communications technologies have structural biases that shape cognition, public discourse, and what societies optimize for. Print culture supported linear, analytic reasoning, while television incentivized entertainment and immediacy, and social media has been linked to polarization, degraded discourse, and mental health concerns, despite benefits like connection and civic engagement. By analogy, generative Artificial Intelligence in media could bring real gains in representation, creative risk-taking, and democratized expression, while also magnifying isolation, fragmenting shared stories, undermining agreement on truth, and overloading people with information, creating an environment that is likely to be messy and fraught but not predetermined to end in catastrophe.