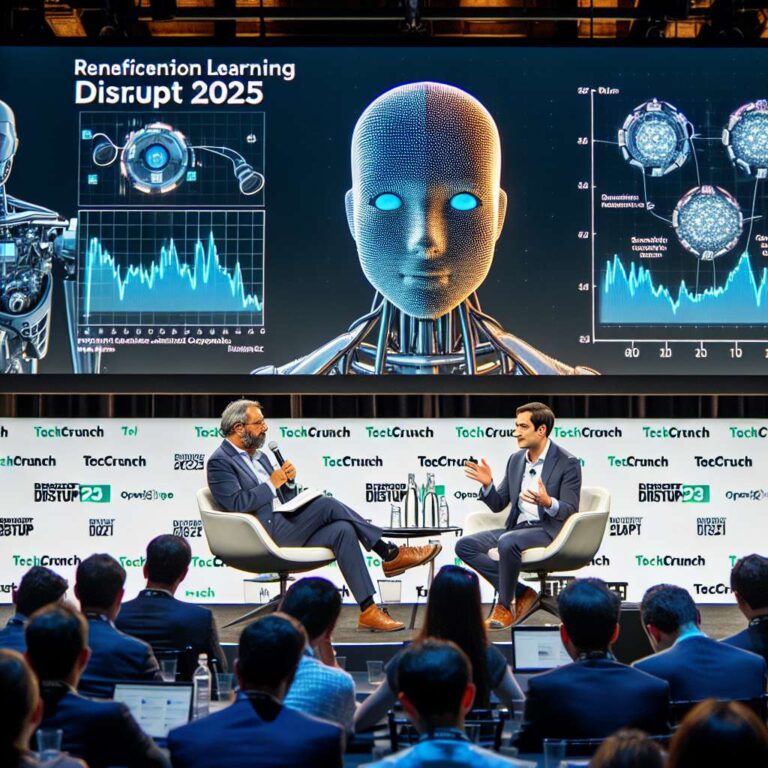

At TechCrunch Disrupt 2025, Eric Anderson of Scale Venture Partners and Kyle Corbitt, CEO of OpenPipe, argued that data scarcity is the central constraint on the next wave of AI breakthroughs. Historical datasets such as ImageNet, and the accumulated public web that powered early large language models, are finite. The speakers pointed to the need for a renewable, ownable data source and positioned reinforcement learning as a way to create exactly that through continuous interaction and feedback.

The talk used Google as an analogy: the company's first algorithm crawled the open web and produced a commodity dataset, while a second, interaction-driven algorithm captured proprietary user behavior and produced a durable advantage. Reinforcement learning has already been applied in limited forms, notably OpenAI’s use of human feedback in 2022 with ChatGPT (built on GPT-3.5), and more recently in models tuned for reasoning. The next step, the speakers said, is agents that learn by interacting inside defined environments such as spreadsheets, CRMs, or websites. Those purpose-built agents can outperform general models within their own environments, though their learned behaviors do not necessarily generalize across domains.

OpenPipe’s demonstrations highlighted practical tradeoffs: smaller models like Qwen 14B can be far cheaper and much faster than larger models, and when allowed to explore and reinforce their own behavior they can surpass those larger models on task performance. That dynamic suggests a market shift: post-training, which injects experience-driven updates, may grow to subsume parts of inference and evaluation infrastructure. As models cycle between sampling and continued training, vendors that provide post-training and agent tooling could capture upstream value and bundle inference, while GPT wrappers and niche agent builders may regain defensibility by creating proprietary interaction datasets. The speakers concluded that reinforcement learning will reshape where investment and product opportunity sit across the AI infrastructure stack.