Artificial Intelligence tools, including generative Artificial Intelligence, machine learning, and large language models, are becoming more common across financial services, creating new compliance and operational questions for firms that buy, deploy, or oversee these systems. Securities regulators in the US have not yet built a dedicated rulebook for most Artificial Intelligence uses. Instead, they continue to emphasize that existing regulations are technology-neutral and still apply. That posture mirrors the way regulators previously handled electronic trading, cloud computing, robo-advisers, alternative trading platforms, and electronic communications with customers.

In 2023, the SEC proposed a new rule addressing Artificial Intelligence-induced conflicts of interest. The proposal drew mixed reviews from several SEC commissioners and industry commentators and has since been withdrawn. Even without new securities-specific rules, regulators are signaling that supervision of Artificial Intelligence is intensifying. In March 2025, in SEC v. Rimar Capital USA, the SEC alleged that the respondents raised funds through false promises about the firm’s use of Artificial Intelligence for automated trading. In 2024, one FINRA action specifically mentioned Artificial Intelligence: it involved a broker-dealer’s implementation of a flawed machine learning program designed to assist in its compliance with AML requirements. In its 2026 annual report, FINRA emphasized a focus on Artificial Intelligence testing and monitoring.

State governments are moving faster than securities regulators on targeted lawmaking. California, Texas, and Colorado have passed comprehensive Artificial Intelligence legislation, while other states have proposed or adopted narrower measures focused on consumer privacy, deceptive media, fair use of protected works, and disclosure requirements when consumers interact with Artificial Intelligence. Those rules are not tailored specifically to financial services, but they can still affect firms operating across multiple jurisdictions, particularly where consumer rights and data handling are involved.

Past regulatory experience suggests firms should expect practical standards to emerge through examinations and enforcement rather than through immediate new rulemaking. Regulators historically used that path when firms shifted from postal mail to email and other electronic communications. In the early 2000s, regulatory actions against multiple firms clarified expectations around supervision, recordkeeping, and preservation of electronic communications after firms used inconsistent and risky storage methods. The same pattern is likely to shape Artificial Intelligence oversight, especially in areas where marketing claims, supervisory gaps, data use, and human review create risk.

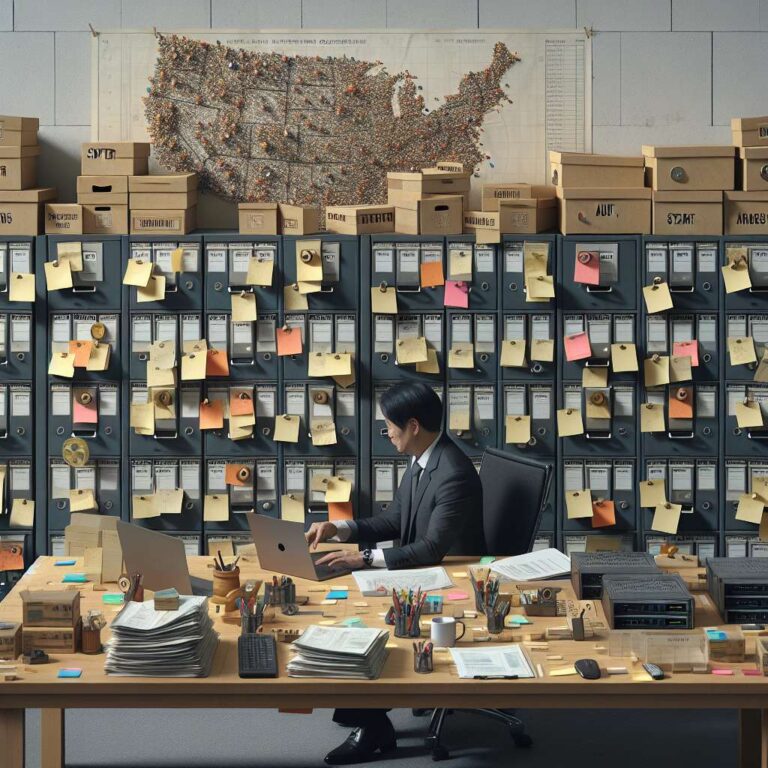

Firms are being pushed toward a more proactive compliance model. Recommended steps include defining what counts as Artificial Intelligence inside the organization, setting clear rules on where employees can and cannot use such tools, training staff on authorized use and escalation procedures, restricting access to disallowed systems, documenting ongoing risk assessments, and testing for unsupervised or unapproved activity. Oversight of third-party vendors, data access, confidentiality, and cross-border legal obligations is also becoming central. Over time, firms may face pressure not only to control Artificial Intelligence risk but also to show that declining to adopt effective Artificial Intelligence tools has not left them less capable of meeting customer expectations or regulatory demands.