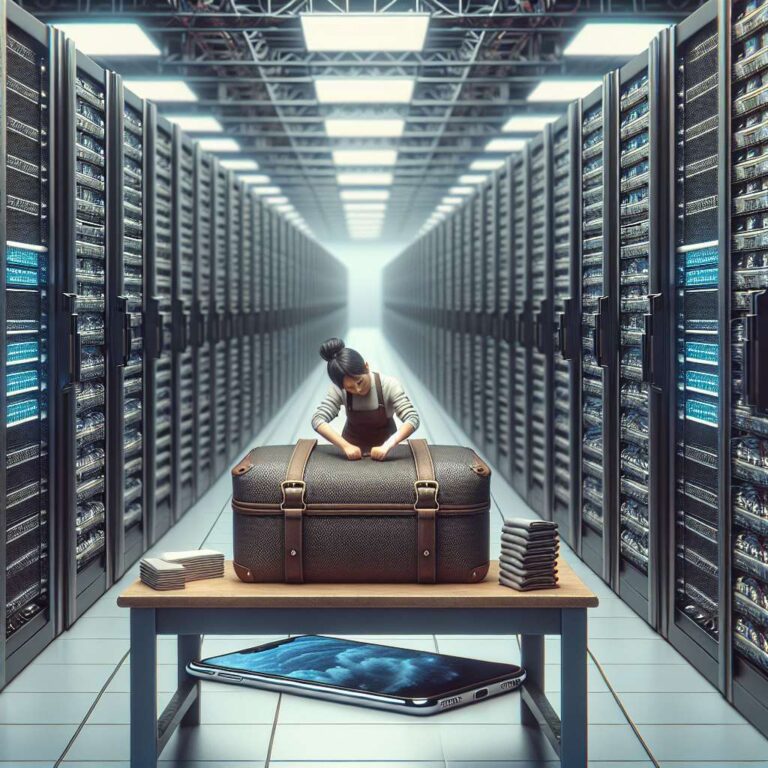

Google has introduced TurboQuant, a compression algorithm described in a Google Research paper that aims to make large language models (LLMs) far more efficient. The core claim is that TurboQuant can cut an LLM’s memory usage by a factor of six. That reduction could translate into lower energy use in data centers, lower RAM demands, and the possibility of running more capable AI models on devices such as smartphones.
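For a sense of scale, here is a back-of-the-envelope sketch of what a six-fold reduction could mean for one memory consumer, the key-value cache. The model dimensions are hypothetical and not taken from the paper, and applying the six-fold figure to the cache alone is an assumption made for illustration.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bits_per_value):
    """Rough size of a transformer key-value cache for one sequence."""
    # Factor of 2: one key vector and one value vector per token, head, and layer.
    values = 2 * layers * kv_heads * head_dim * seq_len
    return values * bits_per_value / 8


if __name__ == "__main__":
    # Hypothetical model shape, chosen only to make the arithmetic easy to follow.
    baseline = kv_cache_bytes(
        layers=32, kv_heads=8, head_dim=128, seq_len=32768, bits_per_value=16
    )
    # Assumption: the reported six-fold reduction applies to this cache.
    compressed = baseline / 6

    gib = 2 ** 30
    print(f"16-bit cache: {baseline / gib:.2f} GiB")    # 4.00 GiB
    print(f"six times smaller: {compressed / gib:.2f} GiB")  # 0.67 GiB
```

Under these assumptions, a cache that would need 4 GiB at 16 bits per value shrinks to roughly two thirds of a gibibyte, which is the kind of difference that decides whether a long context fits in a phone's memory.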

The development fits a broader shift toward smaller, more efficient AI systems rather than simply scaling up infrastructure. In 2025, DeepSeek showed that a leaner model could use far less data center energy while still performing well on benchmark tests against larger U.S. models. TurboQuant is presented as another example of that trend, with the potential to help operators make better use of existing data centers instead of accelerating construction of new ones.

The pressure to improve efficiency comes as the expected expansion of AI infrastructure faces practical constraints. NVIDIA has benefited from expectations of massive data center growth, driven by what CEO Jensen Huang called this month “the largest infrastructure buildout in history.” But building projects are running into community opposition, permit and inspection delays, and shortages in power generation and transmission. In that environment, making models do more with less becomes increasingly valuable.

TurboQuant focuses on two memory bottlenecks in model operation: the key-value cache, which stores the keys and values a model has already computed for earlier tokens so that it does not have to recompute them, and vector search, which finds the items most similar to a query. Google says TurboQuant helps unclog key-value cache bottlenecks by reducing the size of key-value pairs, partly through the “clever” move of “randomly rotating the data vectors.” The result is framed as faster, lighter, and easier-to-run AI, using the same basic logic that made earlier compression advances important for file downloads and video streaming.
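To make the rotation idea concrete, here is a minimal sketch of the general technique: rotate a vector with a random orthogonal matrix, store each coordinate in a few bits, and undo the rotation when reading the vector back. It is not Google's implementation. The uniform 4-bit quantizer, the Gram-Schmidt construction, and every function name are assumptions chosen for illustration. The intuition is that a rotation spreads a vector's energy evenly across coordinates, so a single outlier no longer dictates the quantization range.

```python
import math
import random


def l2_norm(vec):
    return math.sqrt(sum(x * x for x in vec))


def random_rotation(dim, rng):
    """Random orthogonal matrix built by Gram-Schmidt on Gaussian rows."""
    rows = []
    while len(rows) < dim:
        v = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        for r in rows:  # remove components along rows already chosen
            dot = sum(a * b for a, b in zip(v, r))
            v = [a - dot * b for a, b in zip(v, r)]
        norm = l2_norm(v)
        if norm > 1e-9:  # skip the (very unlikely) degenerate draw
            rows.append([a / norm for a in v])
    return rows


def rotate(matrix, vec):
    return [sum(m * x for m, x in zip(row, vec)) for row in matrix]


def unrotate(matrix, vec):
    # The inverse of an orthogonal matrix is its transpose.
    dim = len(vec)
    return [sum(matrix[i][j] * vec[i] for i in range(dim)) for j in range(dim)]


def quantize(vec, bits):
    """Uniform scalar quantizer over [-scale, scale] (an illustrative choice)."""
    levels = 2 ** bits - 1
    scale = max(abs(x) for x in vec) or 1.0
    step = 2 * scale / levels
    codes = [round((x + scale) / step) for x in vec]
    return codes, scale, step


def dequantize(codes, scale, step):
    return [c * step - scale for c in codes]


if __name__ == "__main__":
    rng = random.Random(0)
    dim = 16
    x = [10.0] + [0.1] * (dim - 1)  # one outlier dominates the vector
    R = random_rotation(dim, rng)

    codes, scale, step = quantize(rotate(R, x), bits=4)
    x_hat = unrotate(R, dequantize(codes, scale, step))

    error = l2_norm([a - b for a, b in zip(x, x_hat)])
    print("stored codes:", codes)
    print("reconstruction error:", error)
```

Because the rotation is orthogonal, it preserves vector lengths, and the error introduced in the rotated space carries over unchanged when the rotation is undone. In a real system only the integer codes and the scale would be stored per vector, while the rotation matrix is shared across all of them.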

The broader implication is that gains in model efficiency could reshape the economics of AI computing. A more powerful LLM could run entirely on a phone, while data center operators could fit more capability into existing hardware. That creates a tension for an industry built around ever-larger infrastructure expansion, even as it opens the door to more practical and less resource-intensive deployment.