Researchers are increasingly focused on the interpretability of Large Language Models (LLMs). The goal is to understand the inner workings of these complex models: how they process information and how they generate responses. Using methods such as circuit tracing, researchers attempt to map the computation paths that an LLM follows to arrive at its outputs, which could improve transparency and trust in these technologies.

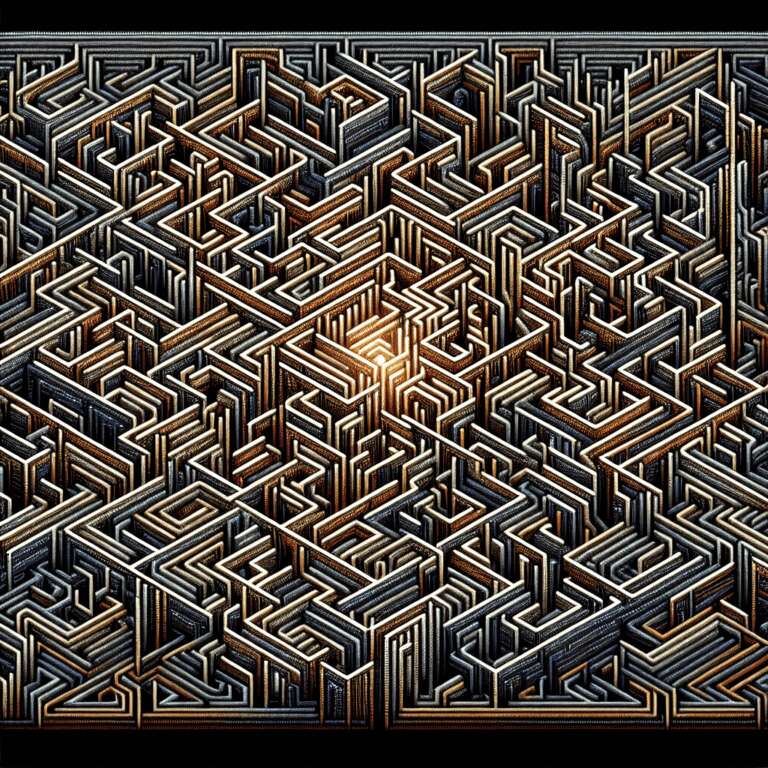

Much of this research centers on identifying and mapping circuits within these models, meaning groups of internal components that work together to carry out a particular computation. Circuit tracing is one method developed for this purpose. It follows the pathways a model uses to reach a decision, showing how inputs are processed and how the model's components interact.
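As a rough illustration of what it means to map which components contribute to an output, the sketch below uses a tiny hand-built network rather than a real LLM. It zeroes out (ablates) each hidden unit in turn and records how much the output changes; the units with a nonzero effect are treated as the "circuit" for that input. This ablation approach is a simplified stand-in and is not the circuit tracing method used in the research. The weights and the names `forward` and `trace_circuit` are hypothetical, chosen only for this example.

```python
# Toy illustration only: a hand-built two-layer network, not a real LLM.
# All weights and function names below are hypothetical.

def relu(x):
    return max(0.0, x)

# Three hidden units, each reading two inputs.
W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, -1.0]]
# Output weights: hidden unit 1 has weight 0, so it cannot affect the output.
W2 = [2.0, 0.0, -1.0]

def forward(inputs, ablate=None):
    """Run the network; optionally zero out one hidden unit by index."""
    hidden = []
    for i, row in enumerate(W1):
        act = relu(sum(w * x for w, x in zip(row, inputs)))
        if i == ablate:
            act = 0.0  # ablation: remove this unit's contribution
        hidden.append(act)
    return sum(w * h for w, h in zip(W2, hidden))

def trace_circuit(inputs, threshold=1e-9):
    """Return the baseline output, per-unit ablation effects, and the
    indices of hidden units whose removal changes the output."""
    baseline = forward(inputs)
    effects = {
        i: baseline - forward(inputs, ablate=i) for i in range(len(W1))
    }
    circuit = [i for i, e in effects.items() if abs(e) > threshold]
    return baseline, effects, circuit

if __name__ == "__main__":
    baseline, effects, circuit = trace_circuit([3.0, 1.0])
    print("output:", baseline)
    print("effect of ablating each hidden unit:", effects)
    print("units in the circuit for this input:", circuit)
```

For the input `[3.0, 1.0]`, hidden units 0 and 2 change the output when removed, while unit 1 does not, so only units 0 and 2 are reported. Real interpretability work operates on far larger models and typically uses more refined attribution techniques than single-unit ablation.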

These advances matter beyond understanding existing models; they also have implications for future AI development. Better interpretability could help developers build more efficient and reliable LLMs. That, in turn, could support broader applications and deeper integration of AI across industries while keeping ethical considerations in check.