Artificial intelligence is widely promoted for its potential to improve productivity, accelerate discovery and deliver benefits across society and academia, but senior research leaders at UCL are warning that its rapid adoption may carry hidden risks for how science progresses. Geraint Rees, UCL vice-provost for research, innovation and global engagement, notes that while computational tools and data-driven methods can enhance existing research practices, they can also channel attention and resources into a narrower set of problems and approaches.

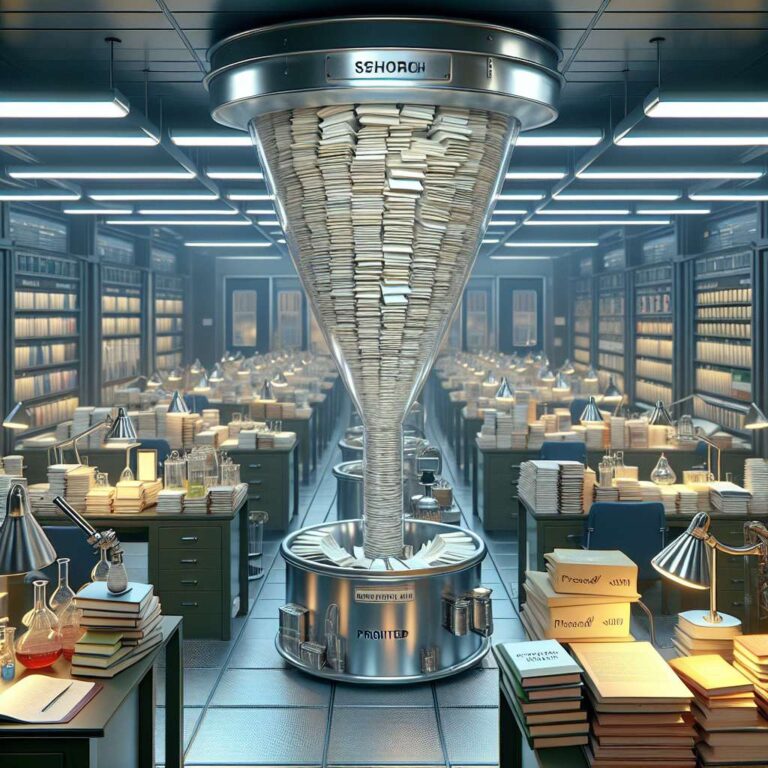

The concern is that the growing influence of artificial intelligence systems on funding decisions, hiring, publication and evaluation may reinforce existing trends and biases rather than support a diverse scientific ecosystem. When algorithms are trained on past data and reward familiar topics, methods and institutions, they can make it harder for unconventional ideas or minority research areas to gain support. Rees argues that this narrowing effect is particularly problematic in basic science, where many transformative breakthroughs emerge from unexpected directions, long-shot projects and curiosity-driven work that may not look promising to pattern-matching systems trained on historical success.

In this view, embracing artificial intelligence for its clear efficiencies must be balanced with deliberate safeguards for pluralism in research agendas and academic culture. Silence about the downsides of over-reliance on automated assessment and optimization is seen as risky, because it leaves structural shifts in science unexamined until the pipeline of novel ideas has already been damaged. Rees calls for more open debate among researchers, universities, funders and policymakers about how to use artificial intelligence in ways that support, rather than limit, the range of scientific questions pursued and the independence of human judgment in shaping the future of discovery.