AI video generators in 2026 are moving firmly into professional creator workflows, supporting tasks such as storyboarding, ad concepts, social clips, product explainers, and early previsualization. The core challenges remain prompt adherence, shot consistency, motion quality, audio-video alignment, reference control, and iteration speed under deadline pressure. Creators increasingly prioritize tools that behave predictably over tools that simply win benchmarks or produce the most surprising first outputs.

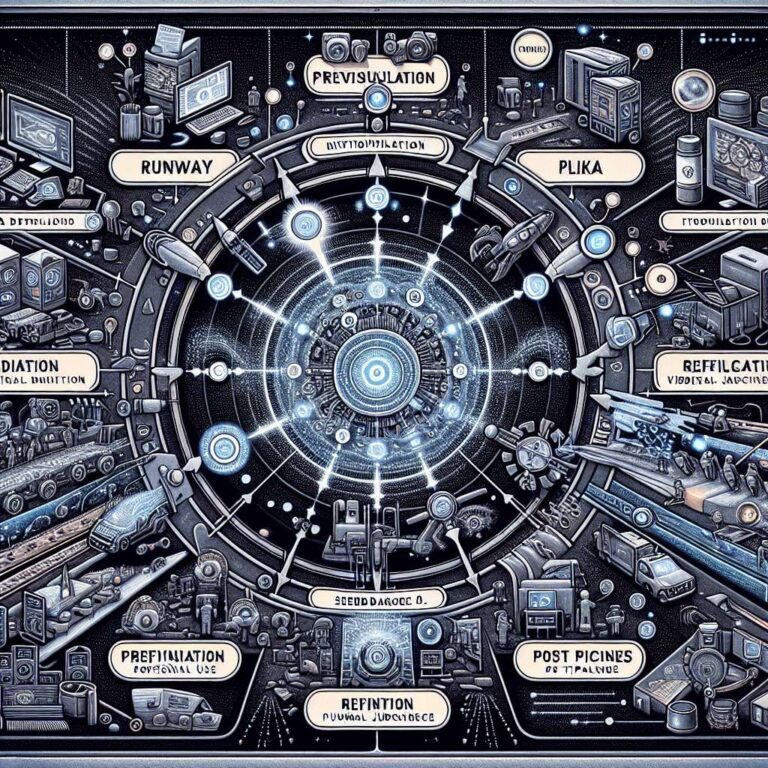

Runway, Kling, and Pika occupy distinct niches in this landscape. Runway is positioned as a polished, widely adopted option that fits teams using multiple tools and seeking a mature workflow, although some users still see variability when trying to lock in precise scene logic. Kling attracts attention for cinematic motion and dramatic, high-impact visuals, which makes it compelling for fast, high-energy scenes, but its reliability can vary with prompt complexity, and complex prompts may take several iterations. Pika tends to serve social-first creators who value speed, remixability, and quick short-form experiments; its accessible iteration style may still require extra planning for tightly controlled multi-shot narratives. Across these tools, many teams now care more about control and repeatability than about purely novel generations.

Seedance 2.0 is drawing serious interest as a new-generation model built around a unified, multimodal, reference-first workflow. Instead of relying on text alone, it accepts mixed inputs across text, image, video, and audio, so creators can guide outputs the way a director would, using concrete references for camera rhythm, costume energy, and sound mood. It is frequently noted for stronger stability in complex motion involving multiple subjects, layered movement, and perspective changes. Its joint audio-video approach targets timing coherence between sound and picture rather than aiming to produce a perfect soundtrack. Short clips remain standard, but the 15-second high-quality target is framed as a practical window for mini-narratives, ad hooks, and storyboard validation.

Even so, no current model replaces editorial judgment, shot planning, or human taste, and regional availability and usage limits should be verified before making client commitments. Productive teams increasingly build a stack spanning ideation, previsualization, refinement, and post, with Seedance 2.0 fitting especially well into continuity-critical and motion-critical stages.

Seedance 2.0 suits solo cinematic creators, marketers, small studios, and content teams that need multiple on-brand versions quickly. It is recommended as a tool that aligns with how creators already work: using references, iterating, directing, and refining rather than relying on a single lucky prompt.