ASUS is introducing the XA NR1I-E12L server series, built around the NVIDIA HGX Rubin 8-GPU platform and aimed at next-generation artificial intelligence (AI) infrastructure where efficiency, density, and reliability are central requirements. The systems are designed for large-scale reasoning and generative AI workloads and are offered with liquid or hybrid cooling to support high-volume deployment, consistent performance, and lower total cost of ownership. The platform is meant to help enterprises scale from pilot projects to production AI, with an emphasis on thermal performance and serviceability.

The XA NR1I-E12L configuration uses a hybrid cooling approach: direct-to-chip liquid cooling for the NVIDIA HGX Rubin 8-GPU baseboard and air cooling for the dual Intel Xeon 6 processors. ASUS positions this as an easier transition path for data centers that are adopting liquid cooling. The XA NR1I-E12LR variant is a 100% liquid-cooled design aimed at maximum thermal efficiency and rack density for the most demanding AI workloads. The NVIDIA HGX Rubin NVL8 baseboard is tuned for large-scale model training and inference, with specifications that highlight “2.3kW” extreme GPU power and “800G” ultra-fast GPU bandwidth, while a modular GPU sled is promoted as built for serviceability.

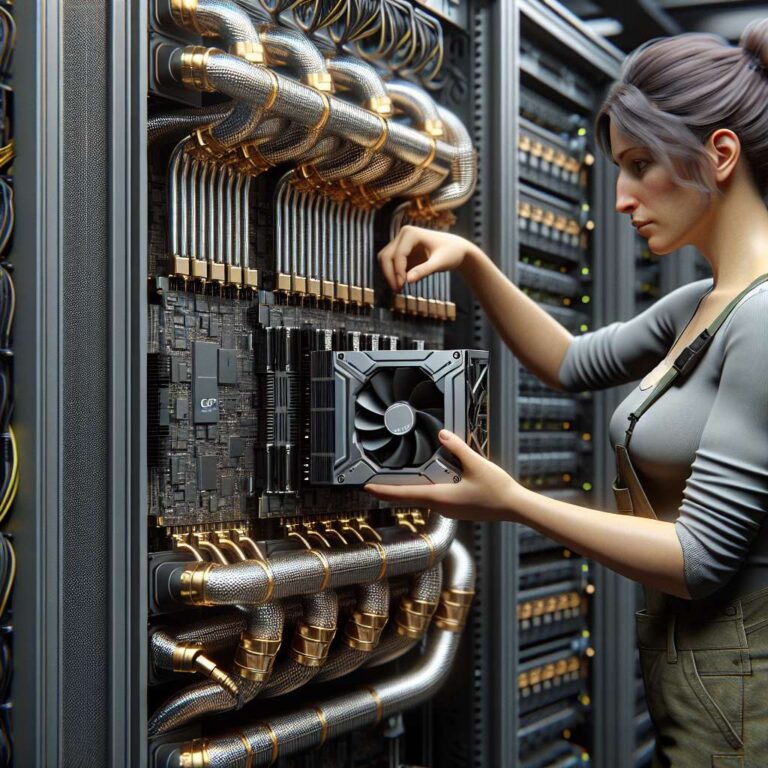

The servers use a modular architecture in which components such as the CPU sled and the NVIDIA Rubin GPU sled can be accessed without tools, which ASUS says minimizes downtime, speeds up maintenance and upgrades, and reduces operational costs.

At rack scale, the ASUS AI Factory reference architecture provides a pre-validated configuration that includes compute trays, file-system and object-storage nodes, management nodes, and networking based on NVIDIA switches. The layout also lists power-shelf entries labeled “62x 3RU Power Shelf 110kW”, “82 x 3U Power Shelves 110k”, and “102 x 3U Power Shelves 110k”. A representative rack contains “9 x ASUS XANR1I-E12LR” servers populated with “18 x Intel® Xeon® 6 processors” and “72 x NVIDIA Rubin GPUs”, integrated with NVIDIA BlueField-3 DPUs, NVIDIA ConnectX-8 networking, and “12x 800GbE OSFP Ports” for a high-bandwidth fabric.

Performance callouts cite “1.5X” AI performance and “1.5X” high-bandwidth memory (HBM) improvements relative to NVIDIA Blackwell GPUs and the NVIDIA GB200 Superchip, “800Gb/s” of bandwidth with the NVIDIA ConnectX-8 SuperNIC, and “2X” attention-layer acceleration from NVIDIA Blackwell Ultra Tensor Cores; these projected results are described as subject to change.
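The representative rack figures can be sanity-checked with a little arithmetic. The sketch below is a hypothetical helper (`rack_summary` is not an ASUS or NVIDIA tool) that splits the quoted rack counts across the nine servers and totals two headline numbers. It assumes the “2.3kW” figure is per GPU and treats the “12x 800GbE OSFP Ports” as aggregate rack uplinks; the source confirms neither interpretation. The GPU power total also excludes CPUs, DPUs, NICs, pumps, fans, and conversion losses, so it is a lower bound on rack power, not a facility estimate.

```python
# Hypothetical helper: derives per-server and per-rack figures from the
# quoted rack entries. Not an ASUS or NVIDIA tool.


def rack_summary(servers: int, cpus: int, gpus: int,
                 gpu_watts: int, ports: int, port_gbps: int) -> dict:
    """Split rack-level component counts across servers and total two
    headline figures: GPU power (kW) and fabric port bandwidth (Tb/s)."""
    if cpus % servers or gpus % servers:
        raise ValueError("components do not divide evenly across servers")
    return {
        "cpus_per_server": cpus // servers,
        "gpus_per_server": gpus // servers,
        # Assumes "2.3kW" is a per-GPU figure; excludes CPUs, DPUs, NICs,
        # pumps/fans, and power-conversion losses.
        "gpu_power_kw": gpus * gpu_watts / 1000,
        # Assumes the 12 ports are aggregate rack uplinks (unconfirmed).
        "fabric_tbps": ports * port_gbps / 1000,
    }


if __name__ == "__main__":
    # 9 x XA NR1I-E12LR, 18 x Xeon 6, 72 x Rubin GPUs,
    # 2,300 W per GPU (assumed), 12 x 800GbE OSFP ports.
    summary = rack_summary(servers=9, cpus=18, gpus=72,
                           gpu_watts=2300, ports=12, port_gbps=800)
    print(summary)
    # {'cpus_per_server': 2, 'gpus_per_server': 8, 'gpu_power_kw': 165.6, 'fabric_tbps': 9.6}
```

The per-server results (2 CPUs, 8 GPUs) line up with the dual Xeon 6 processors and the HGX Rubin 8-GPU baseboard described earlier, which is a useful consistency check on the quoted rack entries. Watts are kept as integers so the only floating-point step is a single correctly rounded division.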

The design is framed as enabling low-latency large language model (LLM) inference, citing a “TTL = 50 milliseconds (ms) real time, FTL = 5s, 32,768 input/1,024 output” scenario that compares legacy NVIDIA HGX H100 clusters over InfiniBand with GB300 NVL72 configurations at a cluster size of “32,768”. A further example is a database join-and-aggregation workload derived from the TPC-H Q4 query using Snappy and Deflate compression, which compares x86 processors, the NVIDIA H100, and a single GPU from GB300 NVL72 against the Intel Xeon “8480+”; this performance is likewise noted as projected.

Across system, rack, and facility scale, ASUS positions these HGX Rubin-based platforms and cooling solutions as foundational building blocks that let customers deploy trusted, powerful, and scalable AI infrastructure, from single servers up to complete AI supercomputers.
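The quoted inference scenario implies a concrete per-user latency budget. The sketch below assumes TTL means token-to-token latency and FTL means first-token latency (the usual reading in NVIDIA's benchmark footnotes, though the article does not spell it out), and that output tokens stream one TTL apart after the first. The function names are hypothetical and illustrate only the arithmetic; they do not model prefill cost, batching, or cluster behavior.

```python
# Hypothetical latency-budget helpers for the quoted scenario.
# Assumes TTL = token-to-token latency, FTL = first-token latency.


def request_time_ms(ftl_ms: int, ttl_ms: int, output_tokens: int) -> int:
    """Wall-clock time for one user to receive every output token:
    the first token after FTL, each remaining token TTL apart."""
    if output_tokens < 1:
        raise ValueError("output_tokens must be at least 1")
    return ftl_ms + (output_tokens - 1) * ttl_ms


def decode_rate_tokens_per_s(ttl_ms: int) -> float:
    """Steady-state tokens per second seen by a single user."""
    return 1000 / ttl_ms


if __name__ == "__main__":
    # FTL = 5 s, TTL = 50 ms, 1,024 output tokens (32,768-token prompt
    # affects FTL, which is taken as given here).
    print(request_time_ms(ftl_ms=5000, ttl_ms=50, output_tokens=1024))  # 56150
    print(decode_rate_tokens_per_s(50))                                 # 20.0
```

Under these assumptions, each user waits 5 s for the first token and roughly 56 s for the full 1,024-token answer, streaming at about 20 tokens per second; a system that meets this target must sustain that rate for every concurrent user.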