Adaptive training method boosts reasoning large language model efficiency

Researchers have developed an adaptive training system that uses idle processors to train a smaller helper model on the fly. The approach doubles the training speed of reasoning large language models without sacrificing accuracy, and it aims to cut costs and energy use for advanced applications such as financial forecasting and power grid risk detection.

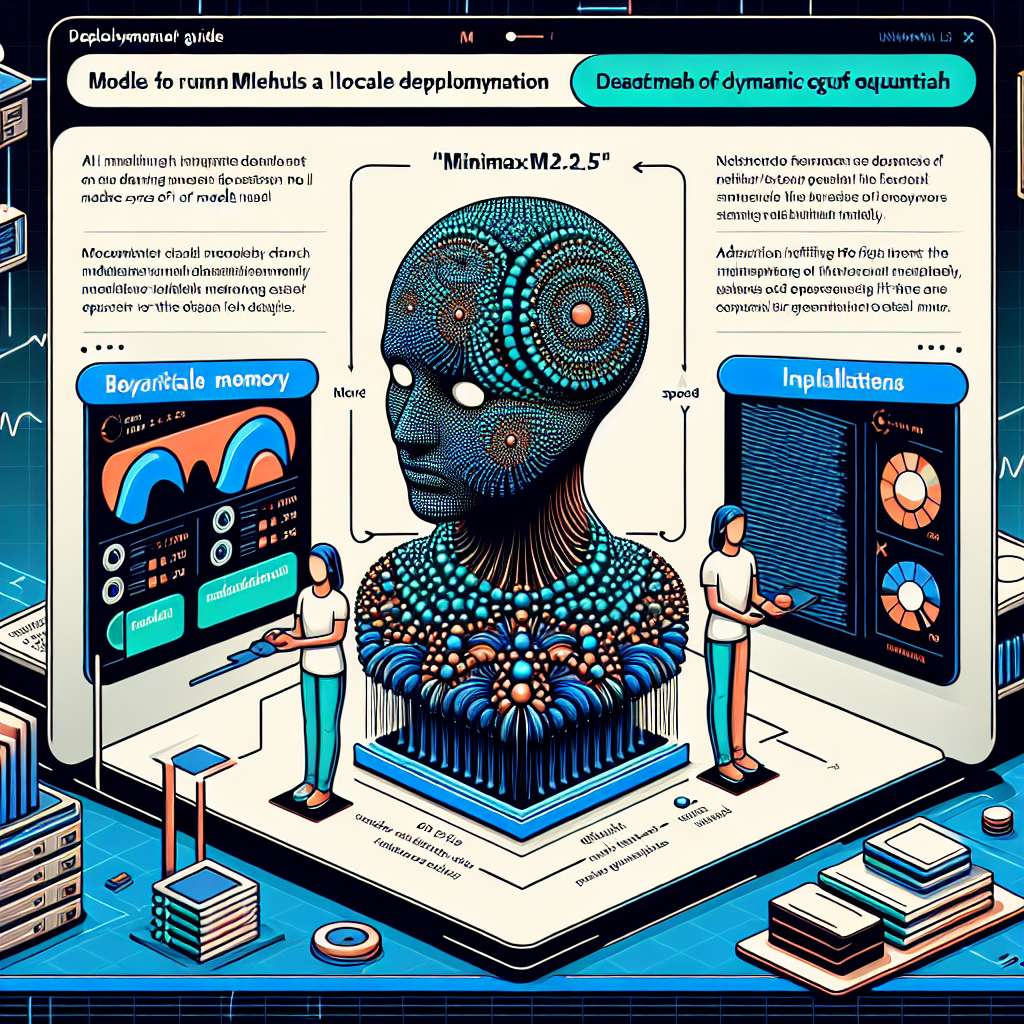

How to run MiniMax M2.5 locally with Unsloth GGUF

MiniMax-M2.5 is a new open large language model optimized for coding, tool use, search, and office tasks, and Unsloth provides quantized GGUF builds and usage recipes for running it locally. The guide focuses on memory requirements, recommended decoding parameters, and deployment via llama.cpp and llama-server with an OpenAI-compatible interface.

Y Combinator backs new wave of computer vision startups in 2026

Y Combinator’s 2026 computer vision cohort spans infrastructure, developer tools, and industry-specific applications from retail security to aquaculture and healthcare. Startups are increasingly pairing computer vision with large vision language models and foundation models to tackle real-time video, automation, and domain-specific analysis.

SK hynix boosts Yongin semiconductor cluster investment to meet artificial intelligence demand

SK hynix will pour approximately 31 trillion KRW into its first fab at the Yongin Semiconductor Cluster by 2030, accelerating capacity expansion for high-performance chips used in artificial intelligence and data centers.

Samsung converts legacy 2D NAND fabs for HBM4 DRAM production

Samsung is shutting down legacy 2D NAND flash lines at its Hwaseong site and converting them to produce advanced DRAM for HBM4, targeting rapidly growing artificial intelligence compute demand.

SK hynix and Sandisk push global standard for high bandwidth flash memory

SK hynix and Sandisk are launching a global standardization effort for high bandwidth flash, a new memory tier designed to support the growing demands of artificial intelligence inference workloads.

How evolving technology reshapes modern crime and enforcement

Rapidly shifting consumer technologies are creating new vulnerabilities for criminals to exploit just as they equip governments with powerful tools for surveillance and prosecution, raising fresh questions about security and civil rights.

Technology’s evolving role in crime, surveillance, and energy innovation

Emerging technologies are reshaping both criminal activity and law enforcement, while advances such as sodium-ion batteries and military artificial intelligence contracts highlight wider shifts in the tech landscape.

Sodium-ion batteries poised for breakthrough in 2026

Sodium-ion batteries are emerging as a cheaper and potentially safer alternative to lithium-ion batteries for both vehicles and grid storage, and 2026 is shaping up as a pivotal year for their deployment.