Most people get excited about AI assistants until they ask one a specific question about their own business – and watch it confidently make something up. Out-of-the-box language models aren’t built to know your internal data. They generate plausible text, but ask about your warranty policy, your own product specs, or even last week’s support issue and you’ll get the same mix of generalities and polished fiction. Entertaining, but useless for real work.

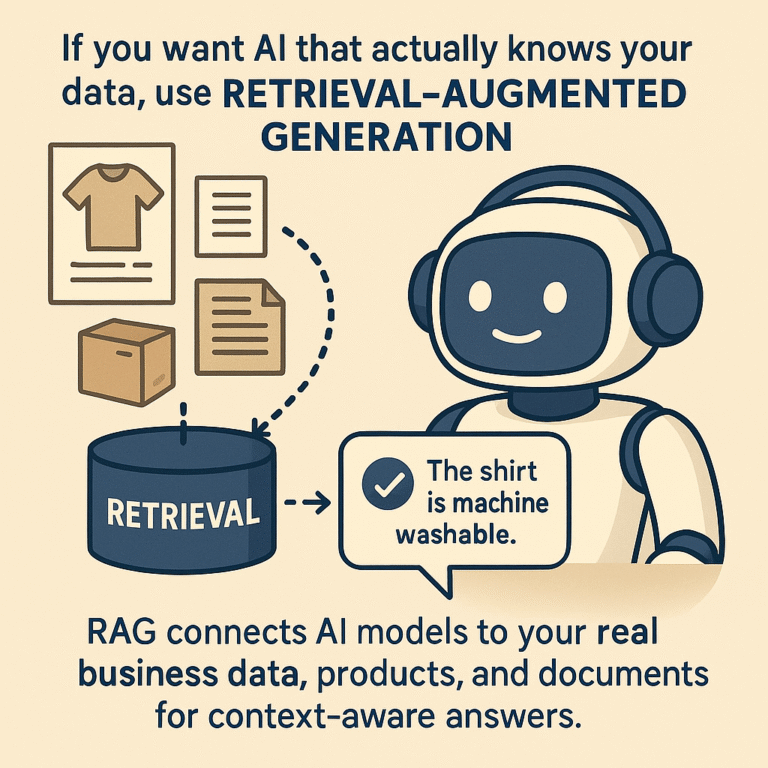

Retrieval-augmented generation (RAG) is how you bolt a model onto your actual business knowledge.

Instead of letting the LLM improvise, RAG retrieves the right content – product sheets, documentation, internal memos, contracts, whatever – from your actual data sources, then feeds it to the model as context for its answer. It’s the difference between an AI that “knows of” your company and one that can answer like it actually works there.

How RAG actually works

Forget the marketing slides; here’s what really happens:

You start by collecting the raw materials: PDFs, CRM exports, product descriptions, old wiki dumps, the lot. This isn’t a “dump it in and pray” scenario; you’ll need to clean, normalize, and break everything into meaningful, context-preserving chunks (paragraphs, sections, or well-labeled snippets – not random 500-token cuts).
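Here is one way the paragraph-aware chunking described above might look. It is a minimal sketch that assumes Markdown-style `#` headings and blank-line-separated paragraphs. `chunk_document`, `Chunk` and the 800-character limit are illustrative choices, not a standard.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str   # which document the chunk came from
    section: str  # heading the chunk sits under, kept as metadata


def chunk_document(text: str, source: str, max_chars: int = 800) -> list[Chunk]:
    """Split a document into section-aware chunks (illustrative sketch).

    Assumes '#' headings mark sections and blank lines separate paragraphs.
    Whole paragraphs are packed into a chunk until the next one would push it
    past max_chars; chunks never cross a section boundary. A single paragraph
    longer than max_chars becomes its own (oversized) chunk.
    """
    chunks: list[Chunk] = []
    section = "(untitled)"
    buffer: list[str] = []

    def flush() -> None:
        # Emit the buffered paragraphs as one chunk under the current section.
        if buffer:
            chunks.append(Chunk("\n\n".join(buffer), source, section))
            buffer.clear()

    for block in re.split(r"\n\s*\n", text.strip()):
        block = block.strip()
        if not block:
            continue
        if block.startswith("#"):
            flush()  # a new heading closes the current chunk
            heading, _, rest = block.partition("\n")
            section = heading.lstrip("#").strip()
            block = rest.strip()  # text directly under the heading, if any
            if not block:
                continue
        if buffer and len("\n\n".join(buffer + [block])) > max_chars:
            flush()
        buffer.append(block)
    flush()
    return chunks
```

Because chunks carry their section heading, a retrieved snippet like "Do not tumble dry." still knows it belongs to the care instructions.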

Each chunk gets run through an embedding model (OpenAI, Cohere, SentenceTransformers, etc.) which turns it into a vector – a numeric representation of meaning. These vectors get stored in a vector database. Could be Pinecone, Qdrant, Weaviate, or, if you want to keep it in-house, MariaDB 11.8 with native vector support. The choice depends on scale, performance, and how allergic you are to SaaS lock-in.
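To make the embed-and-store step concrete, the sketch below uses a toy hashed bag-of-words function as a stand-in for a real embedding model and a brute-force in-memory list as a stand-in for a vector database. `toy_embed` and `InMemoryVectorStore` are hypothetical names. The toy embedding only captures shared words, not meaning, so treat it purely as a picture of the data flow.

```python
import math
import re
import zlib


def toy_embed(text: str, dims: int = 256) -> list[float]:
    """Stand-in for a real embedding model (hypothetical, demo only).

    Hashes lowercase word tokens into a fixed-size vector and L2-normalises
    it. It measures word overlap, not meaning.
    """
    vec = [0.0] * dims
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        # crc32 is stable across runs, unlike Python's salted hash().
        vec[zlib.crc32(token.encode()) % dims] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


class InMemoryVectorStore:
    """Minimal stand-in for a vector database: stores (id, vector, text)
    rows and answers queries with brute-force cosine similarity."""

    def __init__(self, embed=toy_embed):
        self.embed = embed
        self.rows: list[tuple[str, list[float], str]] = []

    def add(self, doc_id: str, text: str) -> None:
        self.rows.append((doc_id, self.embed(text), text))

    def search(self, query: str, k: int = 3) -> list[tuple[str, float, str]]:
        q = self.embed(query)  # the query must use the same embedding model
        # Vectors are unit-length, so the dot product equals cosine similarity.
        scored = [(doc_id, sum(a * b for a, b in zip(q, v)), text)
                  for doc_id, v, text in self.rows]
        scored.sort(key=lambda row: row[1], reverse=True)
        return scored[:k]
```

In a real deployment, `toy_embed` would be replaced by a call to your embedding provider, and the linear scan in `search` by the database's nearest-neighbour index.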

When a user query comes in – say, “Does the XL9000 support dark mode?” or “Where’s the latest EU compliance certificate for product Y?” – the system runs the question through the same embedding model, searches for similar vectors, and grabs the top matching chunks. Those are bundled up and sent, along with the question, to the language model (GPT, Claude, Mistral, etc.), which generates an answer based on your data.

You get answers backed by real citations to the source documents, relevant context, and, if you’ve done the upstream work, accuracy that a generic LLM can’t touch.
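The “bundle up and send” step is mostly string assembly. One common approach, assumed here rather than prescribed, is to number each retrieved chunk and ask the model to cite those numbers, so the application can map them back to source documents. The dict keys `id` and `text`, the `max_chunks` limit and the instruction wording are illustrative.

```python
def build_prompt(question: str, chunks: list[dict], max_chunks: int = 4) -> str:
    """Combine retrieved chunks and the user question into one prompt.

    Each chunk is a dict with hypothetical keys 'id' (source document) and
    'text'. Chunks are numbered [1], [2], ... so the model can cite them.
    """
    context = "\n\n".join(
        f"[{i}] (source: {c['id']})\n{c['text']}"
        for i, c in enumerate(chunks[:max_chunks], start=1)
    )
    return (
        "Answer the question using only the context below. "
        "Cite sources as [n]. If the context does not contain the answer, "
        "say you don't know.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )
```

The explicit “say you don’t know” instruction matters: it gives the model a sanctioned alternative to improvising when retrieval comes back empty-handed.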

Why ecommerce is a perfect testbed for RAG

The difference between “AI assistant” and “guessing machine” is obvious in ecommerce. Customers ask specific, high-variance questions – “Is this shirt machine washable?” “What’s the shipping time for France?” “Do you have this jacket in XXL, blue?” – and if you’re relying on a generic LLM, you’ll get everything from hallucinated features to invented return policies.

With RAG, you can plug in your product catalog, care instructions, logistics data, and support archive. The system fetches real answers: not just “maybe,” but “yes, the cotton shirt (SKU 34412) is machine washable at 30°C; see care label for details.” For search, recommendation, support, or even compliance queries, you can finally trust the answer because it’s grounded in what’s actually true for your business.
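For catalog data, a useful habit is to render each product row into a self-describing chunk and keep key fields as metadata for filtering. The sketch assumes a catalog export with hypothetical `sku`, `name`, `care` and `category` fields; the sample row in the usage reuses the shirt from the paragraph above, and the `shirts` category is invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProductChunk:
    sku: str       # kept as metadata so answers can cite the exact product
    text: str      # what gets embedded and shown to the LLM
    category: str  # kept as metadata for pre-filtering


def product_to_chunk(row: dict) -> ProductChunk:
    """Turn one catalog row (hypothetical field names) into a chunk.

    Writing the name and SKU into the text keeps the chunk meaningful even
    when it is retrieved on its own.
    """
    text = f"{row['name']} (SKU {row['sku']}). Care: {row['care']}."
    return ProductChunk(sku=row["sku"], text=text, category=row["category"])


def filter_by_category(chunks: list[ProductChunk], category: str) -> list[ProductChunk]:
    """Metadata pre-filter: narrow the candidates before vector search."""
    return [c for c in chunks if c.category == category]
```

For example, `product_to_chunk({"sku": "34412", "name": "Cotton shirt", "care": "machine washable at 30°C", "category": "shirts"}).text` yields `"Cotton shirt (SKU 34412). Care: machine washable at 30°C."`.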

How to build RAG that works (and how most fail)

It’s not magic, but there’s a right way and a wrong way.

- Clean, structured source data: RAG is not an exorcist. If your inputs are garbage, the output will be too. Invest in sensible chunking and metadata tagging, and keep your sources up to date.
- The embedding model: Use a model that actually reflects your domain. Sometimes generic is fine, but if your docs are technical or multilingual, choose or tune the model accordingly.
- Database matters: For proofs of concept, Pinecone or Qdrant are easy, but MariaDB 11.8 lets you bring vectors to your relational data with no extra moving parts. Choose based on latency, scale, and ops burden.
- Retriever logic: Simple similarity search works, but hybrid search (combining keyword and vector matching) or re-ranking models can boost relevance. Don’t get fancy until you’ve validated the basics.
- The LLM itself: Don’t cheap out; prompt quality, context window, and even the specific model make a big difference. Remember, the LLM only knows what it’s shown.
- Caching and orchestration: Latency is the silent killer. Smart caching (for both retrieval and LLM answers) is non-negotiable if you care about scale.
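Hybrid retrieval needs a way to merge a keyword ranking with a vector ranking. Reciprocal rank fusion (RRF) is one simple, widely used option; it is shown here as an assumed technique, not a recommendation from any particular product. The inputs are lists of document IDs already ranked by each retriever, and `k=60` is a commonly used default rather than a tuned value.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over every list it appears in,
    with rank starting at 1. Documents ranked well by several retrievers
    rise to the top; k damps the influence of any single top position.
    """
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda d: scores[d], reverse=True)


# Usage: fuse a vector-search ranking with a keyword-search ranking.
fused = reciprocal_rank_fusion([["a", "b", "c"], ["c", "a", "d"]])
```

RRF only looks at ranks, so it needs no score normalisation between retrievers whose raw scores live on different scales.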

Most failures? People try to duct-tape too many services, skip cleaning their data, or assume a generic model will just “get it”. It won’t.

Where RAG works – and where it doesn’t

Done right, RAG transforms support bots, internal search, and customer assistants into genuinely useful tools. It lets you build AI that knows your SKUs, your legal disclaimers, your pricing rules – whatever matters.

But let’s be clear:

If your data is a mess, don’t bother. RAG will simply surface the chaos you already have; no amount of AI will fix poor, incomplete, or inconsistent data. Garbage in, garbage out.

If you need sub-second responses for 10,000 users at once, it becomes an enterprise project. RAG adds overhead: vector search, ranking, and LLM inference all sit on the request path. Handling this at scale requires distributed databases, heavy caching, and serious infrastructure, which is hardly plug-and-play for most smaller organizations.
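Caching is the cheapest way to take retrieval and inference off the request path for repeated questions. The sketch below is a tiny in-process answer cache with a time-to-live; `TTLCache` is a hypothetical name, and normalisation is only lower-casing and whitespace collapsing. Production systems typically use a shared cache and may match semantically similar queries, which this sketch does not attempt.

```python
import time
from typing import Callable


class TTLCache:
    """In-process answer cache keyed on a normalised query string."""

    def __init__(self, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock  # injectable so expiry can be tested
        self._store: dict[str, tuple[float, str]] = {}

    @staticmethod
    def _key(query: str) -> str:
        # "  Does the XL9000 ... " and "does the xl9000 ..." share one entry.
        return " ".join(query.lower().split())

    def get_or_compute(self, query: str, compute: Callable[[str], str]) -> str:
        key = self._key(query)
        hit = self._store.get(key)
        if hit is not None and self.clock() - hit[0] < self.ttl:
            return hit[1]  # fresh hit: skip retrieval and the LLM call
        answer = compute(query)  # e.g. the full retrieve-then-generate pipeline
        self._store[key] = (self.clock(), answer)
        return answer
```

The TTL is the trade-off knob: longer means fewer LLM calls, but cached answers can lag behind changes in your source data for up to that long.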

If you expect the AI to “think” deeply across fifty documents with complex, multi-step reasoning, you’re out of luck. Standard RAG surfaces the most relevant pieces and stuffs them into a context window, but it isn’t designed for deep logical chaining or complex synthesis across documents. That’s still very much a research and custom AI training challenge, not quite an off-the-shelf feature.
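The “stuffs them into a context window” step is why cross-document reasoning is limited: whatever does not fit is simply invisible to the model. The sketch below is a greedy packer under a token budget; `pack_context` is a hypothetical name, and token counts are approximated by whitespace-separated words rather than the target model’s real tokenizer.

```python
def pack_context(chunks: list[tuple[float, str]], budget_tokens: int) -> list[str]:
    """Greedily fill a context budget with the highest-scoring chunks.

    chunks are (relevance_score, text) pairs. Token cost is approximated by
    word count (an assumption). A chunk that doesn't fit is skipped, but
    smaller, lower-scoring chunks may still fit afterwards.
    """
    packed: list[str] = []
    used = 0
    for _score, text in sorted(chunks, key=lambda c: c[0], reverse=True):
        cost = len(text.split())
        if used + cost <= budget_tokens:
            packed.append(text)
            used += cost
    return packed
```

Nothing in this step reasons over the dropped chunks; the model only ever sees the packed list, which is the limitation described above.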

Bottom line: RAG does context, not miracles. Anyone selling you otherwise is overpromising.

RAG isn’t just for ecommerce or massive enterprises. Small and mid-sized businesses, consultants, or anyone sitting on a pile of documents can see real value. Imagine a law firm searching across all client files and case histories in seconds, or a consultancy turning years of reports and project notes into an instantly accessible knowledge base. Even a modest internal archive becomes far more powerful when you can query it in plain language and get context-aware answers.

Any SME with specialized documentation, sales records, or support tickets can build a focused, private AI knowledge base that makes its data instantly actionable.

Final word

RAG isn’t the next AI revolution, but it’s what actually makes language models useful for business. Clean data, a sensible architecture, and a focus on your actual use case are what separate “chatbot demo” from “real business value.” Do the work up front and you’ll have an assistant that finally knows what it’s talking about.